Developers in the Claude Code ecosystem already figured this out.

PRDs as execution artifacts. Stateless loops that run for hours. Agents that read state from a file, do work, write state back, and keep going. Spec-driven development where the document drives everything and the conversation is just the mechanism.

It's a powerful pattern. And it's not limited to code.

I took the developer playbook and applied it to go-to-market operations. Content production, competitive intelligence, RevOps dashboards, sales enablement, campaign orchestration, prospect research.

135 PRDs in five months. All non-engineering work. Not all equal: some ran 2 iterations and shipped a quick fix, others ran 21 iterations across multiple days. The pattern scales across that range without modification.

Here's the pattern, how it works, and why it matters.

What a PRD Actually Is (When It's Not a Planning Doc)

Most people know PRDs as planning documents. You write one, hand it to someone, and never look at it again.

That's not what these are.

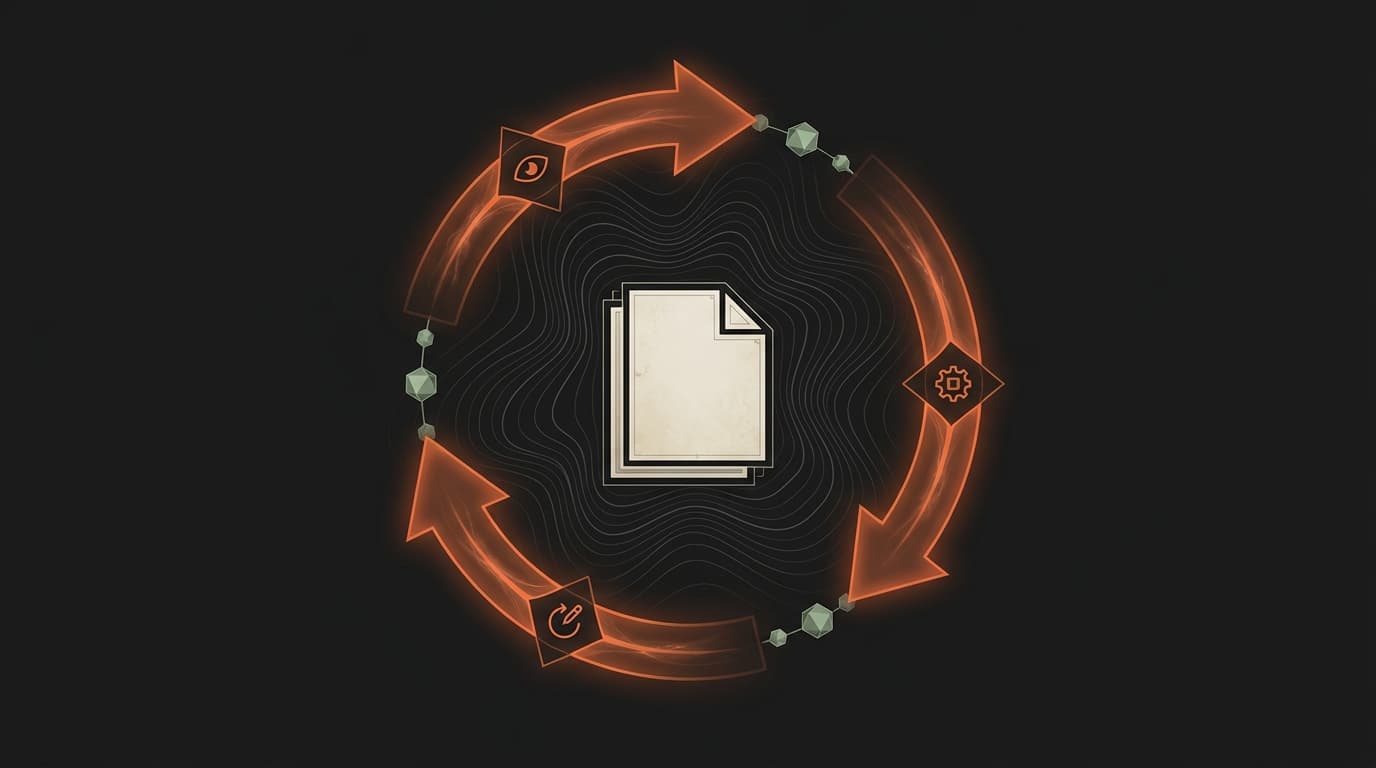

In this workflow, the PRD is the execution artifact. It's a living document the agent reads, acts on, and writes back to. Every phase, every decision, every failure, every completed step is in the file.

The anatomy:

Phases. Ordered steps with specific outcomes. Phase 1 might be research. Phase 2 might be drafting. Phase 3 might be quality validation. Each phase has actions and a verification gate.

Success criteria. Checkboxes. Testable statements of what "done" means. Not vibes. Not "looks good." Specific conditions the agent can evaluate. "SEO audit score >= 7/10." "All 4 pillar page briefs compiled." "Zero internal 404s on re-crawl."

State section. Updated every iteration. Current phase. Next action. Blockers. Failed attempts count. This is the section the agent reads first when it wakes up.

Iteration log. A running table of what happened. Iteration number, date, phase, action taken, result, notes. The complete execution history, right in the document.

Escape hatches. When to stop. Same action fails three times? Try an alternative. No phase progress in five iterations? Stop and ask a human. Total iterations hit the ceiling? Halt and report.

The PRD isn't a plan. It's a state machine on disk. The agent reads it, knows exactly where things stand, executes one step, and updates the file.
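The state section and escape hatches can be pictured in code. This is a minimal sketch, not the tooling behind these PRDs: `PrdState`, its field names, and `escape_hatch` are hypothetical, and the only numbers taken from the text are the thresholds (three failures of the same action, five iterations without phase progress, and an iteration ceiling).

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PrdState:
    """Hypothetical in-memory mirror of a PRD's state section."""
    current_phase: int = 0
    next_action: str = ""
    blockers: List[str] = field(default_factory=list)
    failed_attempts: int = 0            # consecutive failures of the same action
    iterations_since_progress: int = 0  # iterations since the phase last advanced
    iterations_used: int = 0
    max_iterations: int = 60            # assumed ceiling; set per PRD


def escape_hatch(state: PrdState) -> str:
    """Return 'halt', 'ask_human', 'try_alternative', or 'proceed'."""
    # Check the hardest stop first: the total iteration ceiling.
    if state.iterations_used >= state.max_iterations:
        return "halt"
    # No phase progress in five iterations: stop and ask a human.
    if state.iterations_since_progress >= 5:
        return "ask_human"
    # Same action failed three times: try an alternative.
    if state.failed_attempts >= 3:
        return "try_alternative"
    return "proceed"
```

Checking the ceiling first is a design choice in this sketch: when several conditions fire at once, the most conservative response wins.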

The Ralph Wiggum Loop

The execution engine is stupid simple. That's the point.

1. Read state from the PRD file

2. Execute ONE action

3. Update state in the PRD file

4. Output a completion signal

5. Loop

I call it the Ralph Wiggum Loop. The name stuck and I've stopped trying to explain it.

The important design choice: one action per iteration. Not "do the next three things." One thing. Read state, do one thing, write state.

Why? Because one action per iteration means natural checkpoints. If something goes wrong, you've lost one action's worth of work, not an hour's worth. The iteration log shows exactly what happened and when. And the agent never runs away doing 15 things before anyone notices it went sideways at step 3.

After each action, the agent outputs one of three signals:

CONTINUE. More work to do. Loop back to step 1.

TASK_COMPLETE. All success criteria are met. Stop.

BLOCKED_NEED_HUMAN. Stuck. Can't proceed without a person. Here's exactly what the problem is.

The automated version uses a plugin that catches these signals. If the agent says CONTINUE, the plugin re-invokes the agent with the same prompt. The agent reads the PRD file again (fresh context, no accumulated cruft), sees the updated state section, and picks up exactly where it left off.
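The re-invocation logic is small enough to sketch. This is an illustration under assumptions, not the plugin itself: `invoke_agent` is a hypothetical stand-in for whatever starts a fresh agent session and returns its output text, the signal is assumed to be the last non-empty line of that output, and `MAX_ITERATIONS_REACHED` is a name invented here for the ceiling case.

```python
from typing import Callable, Tuple

SIGNALS = {"CONTINUE", "TASK_COMPLETE", "BLOCKED_NEED_HUMAN"}


def read_signal(output: str) -> str:
    """Treat the last non-empty line of the agent's output as its signal."""
    lines = [line.strip() for line in output.splitlines() if line.strip()]
    last = lines[-1] if lines else ""
    # Anything unrecognized is escalated rather than silently retried.
    return last if last in SIGNALS else "BLOCKED_NEED_HUMAN"


def run_loop(
    invoke_agent: Callable[[str], str],
    prompt: str,
    max_iterations: int,
) -> Tuple[str, int]:
    """Re-invoke with the SAME prompt until the agent stops saying CONTINUE.

    No conversation history is passed between iterations; the agent is
    expected to recover its position from the PRD file each time.
    """
    for iteration in range(1, max_iterations + 1):
        signal = read_signal(invoke_agent(prompt))
        if signal != "CONTINUE":
            return signal, iteration
    return "MAX_ITERATIONS_REACHED", max_iterations
```

Note what the driver does not hold: there is no state variable in `run_loop` beyond the iteration counter. Everything the agent needs lives in the file it reads.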

You start it, walk away, come back to finished work.

Some PRDs have run 21 iterations across 12 phases over multiple days. Same execution quality at iteration 21 as iteration 1. Because the agent doesn't carry forward 21 iterations of conversation context. It reads a file.

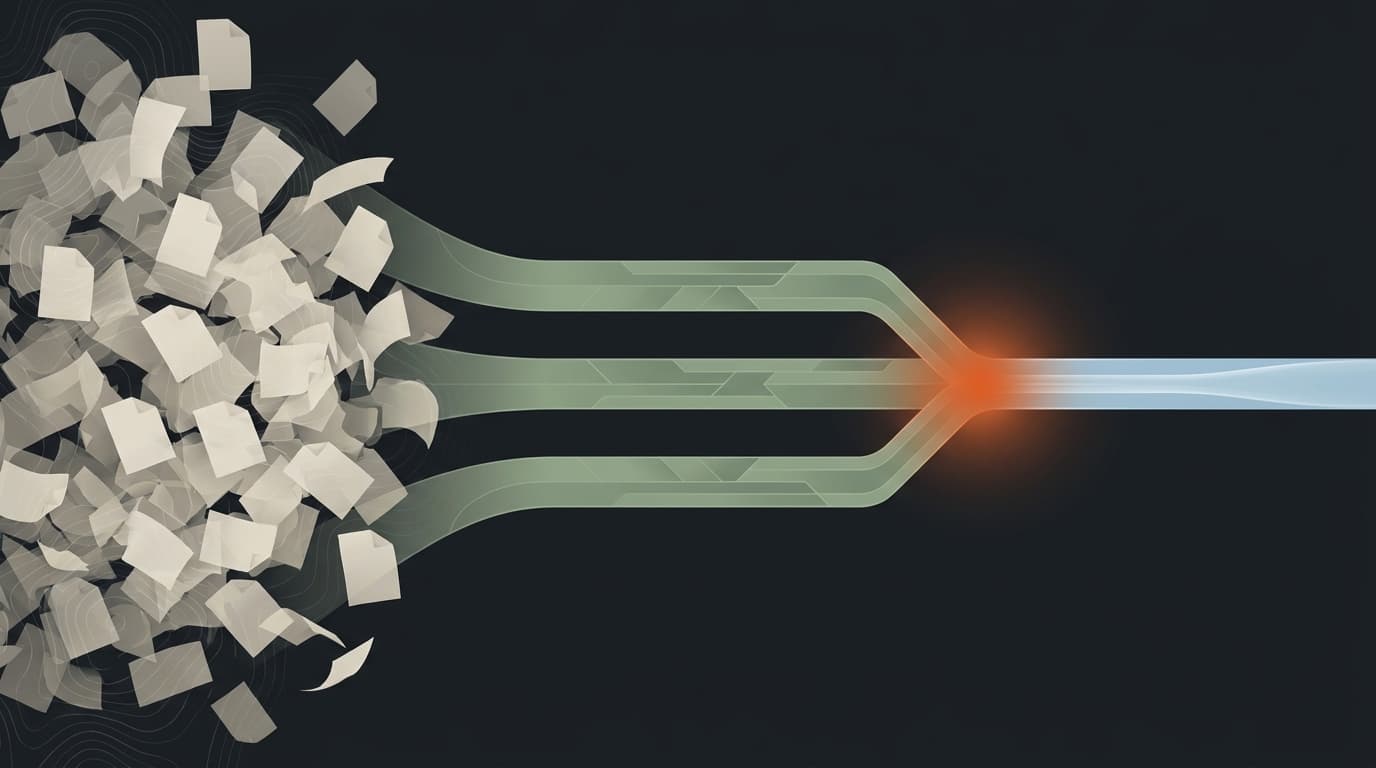

Why File-Based State Beats Conversation Memory

This is the core insight and it's embarrassingly simple.

Conversations are terrible state machines.

They degrade. Every message pushes earlier context further away. By minute 30, the agent's relationship with what you said at minute 5 is tenuous at best.

They compact. When the context window fills up, the system summarizes and discards. Key decisions evaporate. File paths disappear. That specific number you mentioned three times becomes "a metric the user referenced."

They die. Session ends, context is gone. Tomorrow you start over. "So, where were we?" is the most expensive sentence in AI-assisted work.

Files don't have any of these problems.

The PRD file doesn't degrade. The state section says "Phase 3, iteration 8, next action: run quality validation on the draft." Same clarity whether the agent reads it at iteration 1 or iteration 21.

The PRD file doesn't compact. Everything is written down. Decisions from Phase 1 are still there in Phase 5. The iteration log doesn't summarize itself.

The PRD file doesn't die. The agent crashes, the session times out, you close your laptop and go to dinner. Come back tomorrow. The agent reads the file. Knows exactly where things stand. No "remind me what we were doing."

The context window becomes working memory. Temporary. Disposable. The agent uses it to think about the current action.

The PRD file is persistent memory. Permanent. Complete. The agent reads it for everything else.

State belongs in files, not in conversations. That's the whole trick.
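To make "the file doesn't die" concrete, here is a rough sketch of one read-execute-update iteration against a state file. It assumes the state is kept as JSON with `iteration`, `next_action`, and `log` keys, which is a simplification chosen for the example (the PRDs described here keep state inside the document itself); `load_state`, `save_state`, and `one_iteration` are hypothetical names.

```python
import json
import os
import tempfile
from pathlib import Path
from typing import Callable


def load_state(path: Path) -> dict:
    return json.loads(path.read_text(encoding="utf-8"))


def save_state(path: Path, state: dict) -> None:
    """Write to a temp file, then rename over the original.

    os.replace swaps the file in one step, so a crash mid-write
    leaves the previous state intact instead of a half-written file.
    """
    fd, tmp_name = tempfile.mkstemp(dir=str(path.parent), suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as tmp:
        json.dump(state, tmp, indent=2)
    os.replace(tmp_name, path)


def one_iteration(path: Path, do_action: Callable[[str], str]) -> dict:
    """Read state, execute exactly one action, write state back."""
    state = load_state(path)
    result = do_action(state["next_action"])
    state["iteration"] += 1
    state["log"].append(
        {"iteration": state["iteration"], "action": state["next_action"], "result": result}
    )
    save_state(path, state)
    return state
```

Nothing survives in memory between calls to `one_iteration`. Kill the process after any call and the next one starts from whatever is on disk.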

The Unlock: SEO Audit Into Page Build

Let me walk through a real example.

I needed pillar pages for a B2B SaaS website. SEO-optimized, buyer-validated, properly structured. The kind of pages that normally involve a content strategist, an SEO specialist, a writer, a designer, and a developer, coordinating across weeks.

Here's what actually happened.

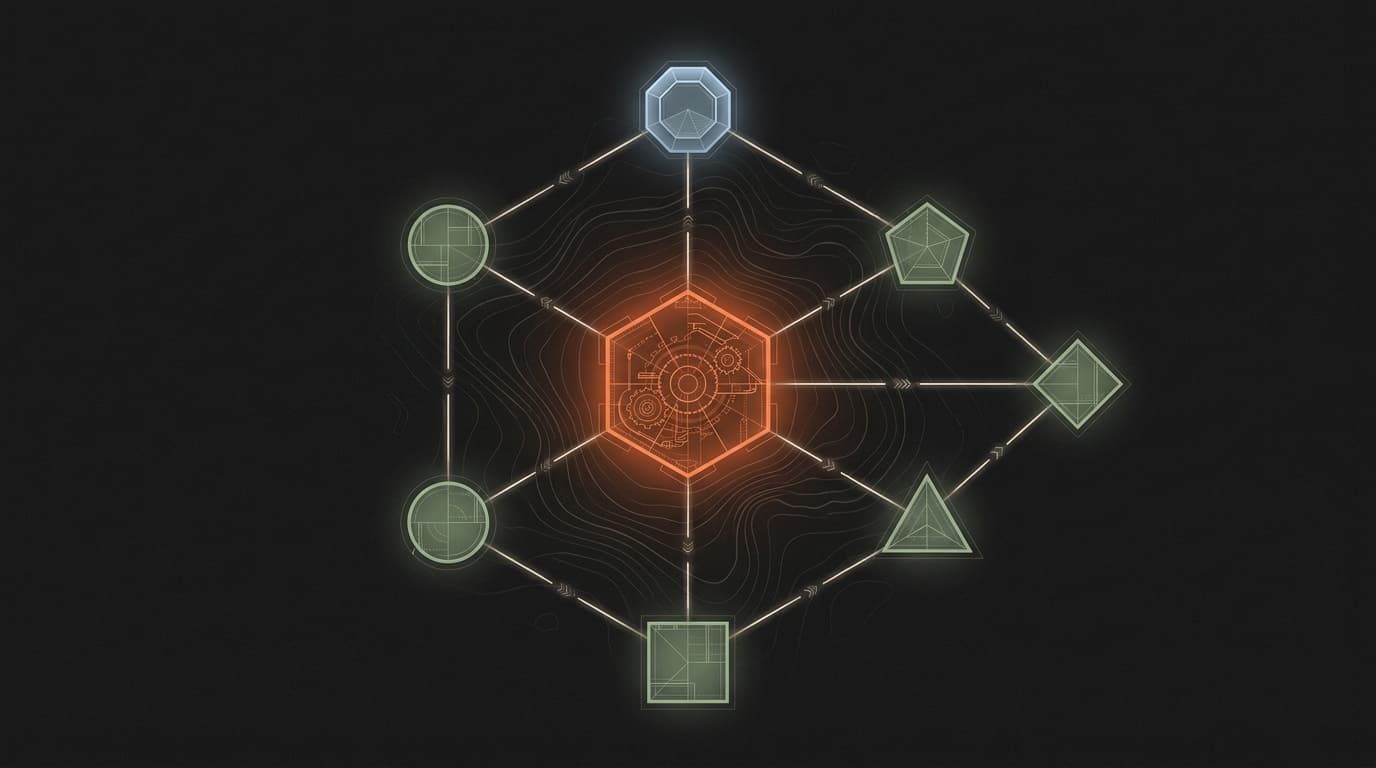

Step 1: SEO audit identifies the gaps. The audit skill runs a five-tier analysis of the current site. Crawlability, technical foundations, on-page optimization, content quality, authority signals. Plus AEO/GEO patterns, meaning how the content shows up in AI-generated answers, not just traditional search results.

The output: 1.23% CTR. 97% of traffic is branded. Only 28 non-branded clicks a month. The site needs pillar content for its core topics.

Step 2: The audit feeds into a page-build PRD. Not a brief. Not a ticket. A loop-compatible PRD with 10 phases and up to 60 iterations budgeted.

Phase 0: Intelligence gathering. The agent scans the knowledge graph: published content, market intel, customer call transcripts, competitive battlecards, keyword database. Before it writes a single word of copy, it has context from every relevant source.

Phase 1: Three research agents launch in parallel, one each for customer pain points, market positioning, and competitive landscape. Each writes a working note to disk. 50-800 lines of distilled context per agent.
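A sketch of what the Phase 1 fan-out could look like, assuming a thread pool and a hypothetical `research` callable that stands in for a sub-agent and returns its note as text. The file naming is invented for the example. The detail that matters is that each worker writes its note to disk before it returns.

```python
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path
from typing import Callable, Dict

TOPICS = ("customer pain points", "market positioning", "competitive landscape")


def run_research(research: Callable[[str], str], notes_dir: Path) -> Dict[str, Path]:
    """Run one research call per topic in parallel and persist every note."""
    notes_dir.mkdir(parents=True, exist_ok=True)

    def work(topic: str) -> Path:
        note = research(topic)
        out = notes_dir / (topic.replace(" ", "-") + ".md")
        # Persist inside the worker so analysis is never left only in memory.
        out.write_text(note, encoding="utf-8")
        return out

    with ThreadPoolExecutor(max_workers=len(TOPICS)) as pool:
        # pool.map returns results in the same order as TOPICS.
        paths = list(pool.map(work, TOPICS))
    return dict(zip(TOPICS, paths))
```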

Phase 2: Content strategy. Angle, headline, section structure mapped to specific components and SEO keyword targets.

Phase 3: Section copywriting. Evidence-backed, anti-slop score above 7.5.

Phase 4: Skeptical buyer validation. A separate agent reads the draft from the perspective of a cynical enterprise buyer and flags anything that wouldn't survive a procurement review.

Phase 5: Design decisions. Layout, components, responsive breakpoints.

Phase 6: Brief compilation. Everything assembled into a format the CMS assembly agent can consume. This is a human checkpoint. I review before assembly begins.

Phase 7: CMS assembly. The agent builds the actual page.

Phase 8: Quality gates. SEO re-audit. CRO audit scoring 7 conversion dimensions. Three-tier quality review.

Phase 9: Publish. Another human checkpoint.

Four of these pillar page PRDs were generated in a single execution run, followed by a batch assembly PRD that orchestrates all four pillar pages through a seven-skill quality chain.

I kicked off the research phases before a meeting. Came back to compiled briefs with competitive positioning, customer evidence, SEO-optimized section structures, and buyer validation reports. From SEO gap to approved page brief, the agent did the work.

One of those PRDs went sideways. The skeptical buyer validation (Phase 4) flagged the entire angle as vendor copy disguised as practitioner content. The agent tried to fix it three times, hit the escape hatch, and escalated. I scrapped the angle and restarted from Phase 2 with a different positioning approach. The PRD pattern meant I lost two phases of work, not the whole project. The escape hatch earned its keep.

That's what a PRD-plus-loop unlocks. Not a clever prompt. A system.

PRDs + Loop vs. Plan Mode

If you use AI coding tools, you know plan mode. The agent pauses, thinks about the approach, writes out a plan, and asks for your approval before executing.

Plan mode is useful. It gives the agent space to reason about the task before committing to action. I use it regularly for scoping work.

But plan mode is still conversational. The plan lives in the session. When the session ends, the plan context goes with it.

PRD-plus-loop is different in a few important ways.

Persistence. The PRD is a file. It survives session breaks, compaction, crashes, and days between work sessions. Plan mode's plan is in the conversation. It survives until it doesn't.

Execution tracking. Every iteration is logged in the PRD. What was tried, what worked, what failed. Plan mode doesn't track execution history. It plans, then the agent executes in the normal conversational flow.

Recovery. When an agent picks up a PRD, it reads the file and knows the full state. When you resume after a plan mode session, the agent has a compressed summary of what happened. Not the same thing.

Scale. Plan mode works for tasks that complete in one session. PRD-plus-loop works for tasks that run 21 iterations across multiple days. Different scale, different architecture needed.

They're not competitors. Plan mode is great for scoping a PRD. You use plan mode to think about the phases, define the success criteria, structure the escape hatches. Then the loop takes over for execution.

Plan mode is a feature. PRD-plus-loop is an architecture.

Permissions and Trust

Everything I've described works better when the agent can operate without asking permission for every file read and tool call.

For autonomous loops, this is the difference between an agent that runs uninterrupted for 15 iterations and an agent that stops 40 times to ask "can I read this file?" If the agent has to wait for a human to click approve on every action, you haven't built autonomous execution. You've built a patient assistant that needs you in the room.

There's a separate conversation about doing this safely. Scoping what the agent can access. Sandboxing execution environments. Review-after patterns instead of approve-before patterns. The trust model shifts from "ask permission for every action" to "operate within boundaries, I'll review the output."

If you want PRD-plus-loop to actually work as autonomous execution, the agent needs room to operate without asking for permission at every step.

In practice, I allow unrestricted file reads and writes within the project directory, tool calls to approved skills, and git operations on feature branches. I block external API calls, anything touching production systems, and operations outside the project sandbox. The agent can research, draft, edit, and commit. It can't deploy, send emails, or modify infrastructure. The human checkpoints at brief compilation and publish are the safety net for the remaining judgment calls.
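Those boundaries amount to a default-deny policy with a short allow list. The sketch below is one way to express that, not how any particular tool implements permissions: the action kinds, the `feature/` branch prefix, and the skill names in `APPROVED_SKILLS` are all assumptions made for illustration.

```python
from pathlib import Path

APPROVED_SKILLS = {"seo-audit", "cro-audit"}  # hypothetical skill names


def inside(root: Path, target: str) -> bool:
    """True if target, resolved against root, stays within root."""
    root = root.resolve()
    try:
        (root / target).resolve().relative_to(root)
        return True
    except ValueError:
        # Raised when the resolved path escapes root (e.g. via '..').
        return False


def is_allowed(kind: str, project_dir: Path, target: str = "") -> bool:
    if kind in ("file_read", "file_write"):
        return inside(project_dir, target)
    if kind == "skill":
        return target in APPROVED_SKILLS
    if kind == "git":
        # Assumes feature branches share a 'feature/' prefix.
        return target.startswith("feature/")
    # Default deny: external APIs, deploys, email, infrastructure.
    return False
```

Default deny means a new kind of action is blocked until someone decides to allow it, which is the safer failure mode for an unattended loop.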

The Compounding Loop

After 33 completed PRDs, I extracted meta-intelligence into a reference index. What categories of work take how long. Where failures cluster. Which escape hatches actually fire.

The findings were instructive.

No PRD has ever hit its max iteration limit. Highest utilization: 50%. Median: 20%. Max iterations are psychological safety nets, not operational constraints.

Failures cluster into three categories: context management (too much source material in one window), sub-agent persistence (agents produce great analysis but fail to write it to disk), and artifact quality versus existence (automated checks confirm files exist, but manual review finds placeholder content).
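The third category, existence versus quality, is the easiest to illustrate. A check that only asks "does the file exist?" passes a stub. The sketch below adds two cheap content checks on top. The placeholder patterns and the `min_lines` threshold are assumptions, and a heuristic like this narrows the gap rather than replacing manual review.

```python
import re
from pathlib import Path
from typing import List

# Assumed markers of unfinished content; extend per project.
PLACEHOLDER = re.compile(r"\b(TODO|TBD)\b|lorem ipsum|\[placeholder\]", re.IGNORECASE)


def artifact_problems(path: Path, min_lines: int = 1) -> List[str]:
    """Return a list of problems; an empty list means the checks passed."""
    if not path.is_file():
        return ["missing"]
    lines = [l for l in path.read_text(encoding="utf-8").splitlines() if l.strip()]
    problems = []
    if len(lines) < min_lines:
        problems.append("too short")
    if any(PLACEHOLDER.search(l) for l in lines):
        problems.append("placeholder content")
    return problems
```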

Logic errors, bad plans, wrong approaches barely appear. PRDs are good at planning. They're bad at managing the physical constraints of execution.

That index now feeds back into the planning and execution skills automatically. Before scoping a new PRD, the agent reads empirical sizing data. Before starting execution, it reviews known failure modes. When stuck, it checks if the current pattern matches a documented resolution.

Every completed PRD makes the next one better. Not because the model improves. Because the system around it accumulates intelligence. Failure modes get cataloged. Execution baselines get calibrated against reality. Known stuck patterns get documented solutions.

The 135th PRD benefits from everything the first 134 taught the system.

Start Here

Pick one workflow you do repeatedly. A weekly report. A research brief. A campaign build.

Structure it as a PRD with phases and success criteria. Give the agent the read-execute-update loop. Let the document be the memory and the loop be the engine.

Then do it 134 more times and see what happens.

This pattern is part of the Knowledge OS architecture — the three-layer system that makes AI compound over time.