Google invented MapReduce to process petabytes of web data across thousands of machines.

I use it for GTM briefs.

Not because I process petabytes. Because a few thousand lines of source documents broke my AI the same way a terabyte breaks a single machine. The context window is a physical constraint, and when you exceed it, quality doesn't degrade gracefully. It falls off a cliff.

This is the single most important architectural pattern I've discovered building AI-native GTM operations. It prevented more quality failures than any other change I made. And nobody talks about it because context management sounds boring compared to "agentic workflows" and "autonomous AI."

The Incident

I needed a strategic analysis. The kind where you synthesize insights across multiple domains: customer pain points, competitive positioning, market research, product capabilities, executive conversations, financial data.

The source material: 7 synthesis documents totaling several hundred KB. Think a few hundred pages of dense operational writing.

The approach was straightforward. Read all the documents. Synthesize the insights. Produce the analysis.

What happened: the agent read the first three documents fine. By document four, earlier material started getting compressed. By document six, the agent's analysis was shallow and generic. Cross-references between documents disappeared. Specific quotes became "as mentioned in an earlier document." Quantitative data rounded itself into oblivion.

The output was garbage. Not wrong, exactly. Just empty. A smart-sounding summary that could have been written by someone who'd read the Wikipedia pages instead of the actual source material.

This wasn't a model quality problem. The model was doing its best within a physical constraint. The context window was full, and the system was compacting aggressively to make room for the current operation. Every compaction cycle threw away evidence.

The Root Cause

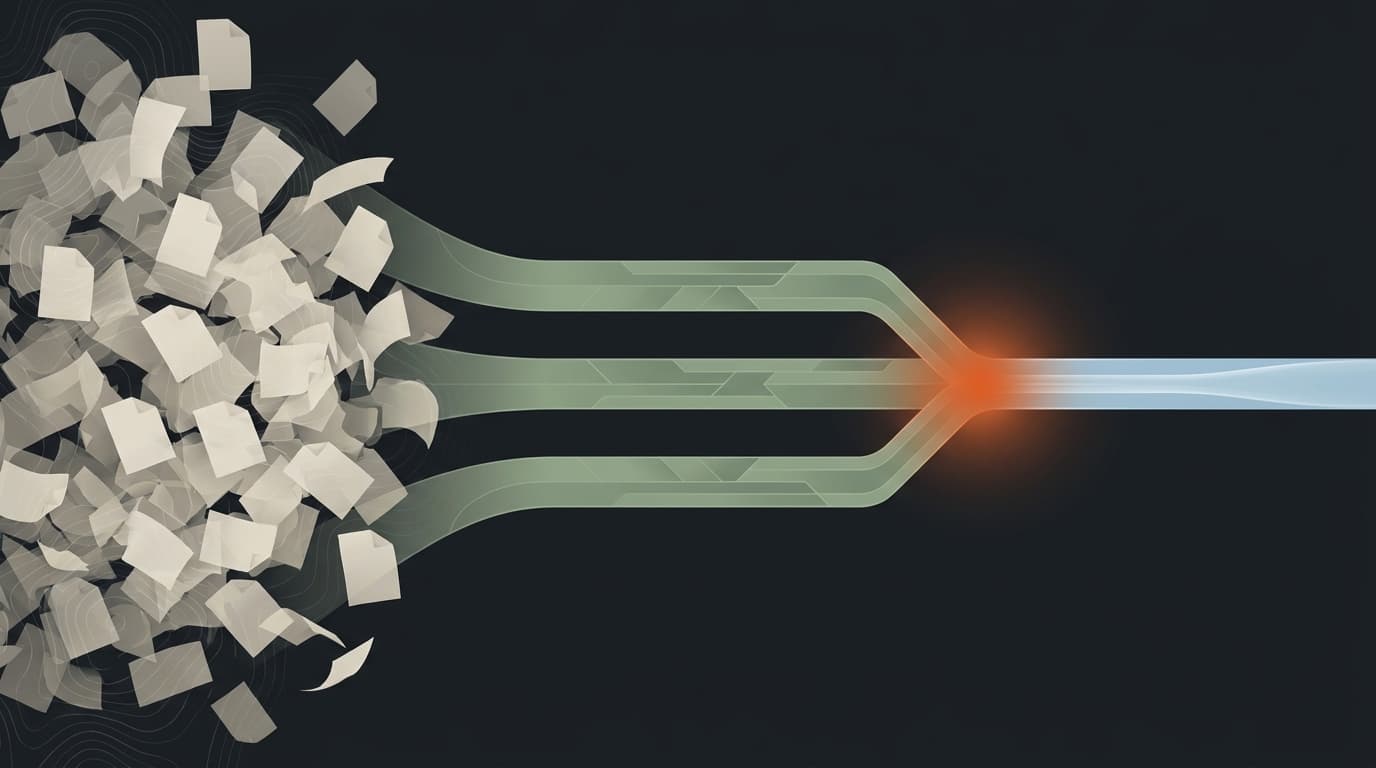

Reading source documents and reasoning about them are two fundamentally different operations competing for the same limited resource: the context window.

When you load thousands of lines of source material into context, you've used most of your working memory before you've done any thinking. The agent is trying to hold the complete picture while also synthesizing from it. That's like trying to hold a 400-page book in your head while writing an essay. Humans can't do it either. We take notes.

The AI equivalent of taking notes is writing to disk. But the default pattern (read everything, think about it, produce output) doesn't include a note-taking step. The agent tries to be a single machine processing a terabyte.

The fix was the same fix Google used: distribute the work across multiple agents, each handling a manageable subset, then synthesize from their outputs instead of the raw sources.

This problem is well documented in research. Researchers at Stanford, UC Berkeley, and Samaya AI described the "lost in the middle" phenomenon: LLMs use information at the beginning or end of a long context far more reliably than information placed in the middle. The degradation isn't random. It's structural. And it gets worse as you add more material.

The Pattern

    Source Documents (thousands of lines)
                      |
            MAP PHASE (parallel)
                      |
          +-----------+-----------+
          |           |           |
       Agent 1     Agent 2     Agent 3
       (2 docs)    (3 docs)    (2 docs)
          |           |           |
          v           v           v
       Note 1      Note 2      Note 3
       (~300)      (~400)      (~350)
          |           |           |
          +-----------+-----------+
                      |
                REDUCE PHASE
                      |
               Synthesis Agent
              (~1,050 lines in)
                      |
                Final Output

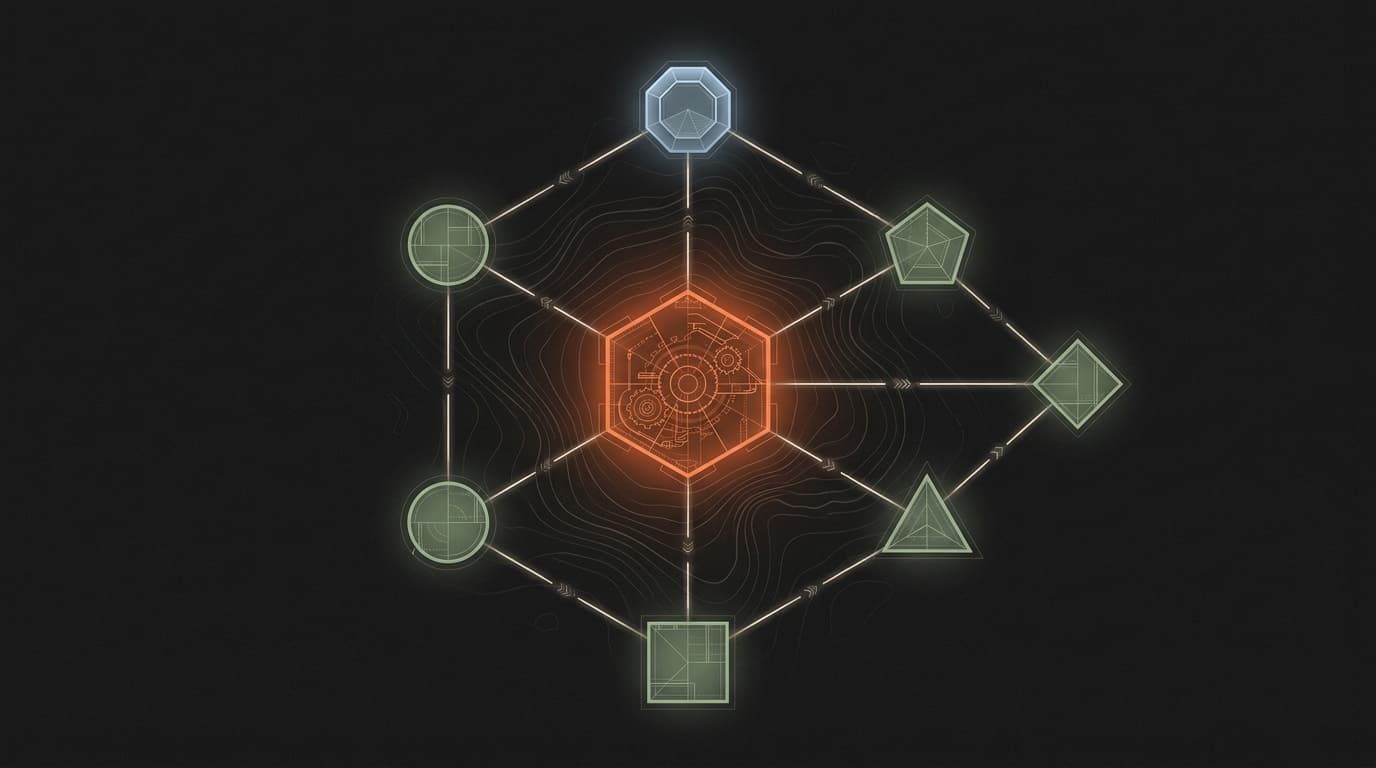

MAP phase. Multiple sub-agents run in parallel. Each reads 2-3 source documents (a manageable subset) and writes a condensed working note to disk. The working note preserves the key findings, specific evidence, important quotes, and quantitative data while discarding narrative filler.

Disk. The intermediate layer. Working notes are a few hundred lines each, written as files. This is the critical innovation. Instead of the synthesis agent reading thousands of lines of raw sources, it reads pre-distilled notes. The sub-agents already did the expensive work of identifying what matters.

REDUCE phase. A single synthesis agent reads only the working notes. Its context is clean. It has about a thousand lines of pre-distilled intelligence instead of thousands of lines of raw material. It can focus entirely on cross-referencing, pattern detection, and insight synthesis. Reasoning gets nearly the whole context window to itself.

The output was dramatically better, because the evidence and quotes that compaction would have destroyed were preserved in the working notes on disk.
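The three pieces (MAP, disk, REDUCE) can be sketched in a few dozen lines of Python. This is a minimal sketch, not the execution system described here: `Agent` is a hypothetical callable that takes a prompt and returns text, standing in for whatever sub-agent mechanism you actually use, and the batch size, prompts, and `note_N.md` file names are assumptions for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path
from typing import Callable, Sequence

# Hypothetical interface: a prompt goes in, the agent's text comes out.
Agent = Callable[[str], str]


def split_into_batches(docs: Sequence[Path], batch_size: int) -> list[list[Path]]:
    """Group source documents into subsets small enough for one sub-agent."""
    return [list(docs[i:i + batch_size]) for i in range(0, len(docs), batch_size)]


def map_phase(batches: list[list[Path]], agent: Agent, notes_dir: Path) -> list[Path]:
    """Each sub-agent reads its batch and writes a working note to disk."""
    notes_dir.mkdir(parents=True, exist_ok=True)

    def run(index: int, batch: list[Path]) -> Path:
        sources = "\n\n".join(f"## {p.name}\n{p.read_text()}" for p in batch)
        note = agent(
            "Condense these sources into a working note. Keep key findings, "
            "specific evidence, exact quotes, and numbers.\n\n" + sources
        )
        note_path = notes_dir / f"note_{index}.md"
        note_path.write_text(note)  # explicit write: the note lives on disk
        return note_path

    # Sub-agents run in parallel; results come back in batch order.
    with ThreadPoolExecutor() as pool:
        return list(pool.map(run, range(1, len(batches) + 1), batches))


def reduce_phase(note_paths: list[Path], agent: Agent) -> str:
    """The synthesis agent sees only the working notes, never the raw sources."""
    notes = "\n\n".join(p.read_text() for p in note_paths)
    return agent("Synthesize across these working notes:\n\n" + notes)
```

The design choice that matters is that `reduce_phase` takes file paths, not return values. The synthesis step can only see what made it to disk.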

When It Breaks

The rule of thumb I use: if you're loading more than 8-12 source files into a single agent, you're asking for trouble. That's the budget I enforce per phase in my execution system.

But file count is a crude proxy. What actually matters is whether the agent has room to reason after loading the source material. Some files are 50 lines. Some are 2,000. A 5-file task with dense documents can exhaust context faster than a 15-file task with sparse ones.
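One way to budget by size instead of file count is to estimate tokens and reserve a share of the window for reasoning. The sketch below is illustrative only: the 4-characters-per-token ratio is a rough heuristic for English prose rather than a real tokenizer, and the 50% reasoning reserve and the 200,000-token window in the usage note are assumed numbers, not measured thresholds.

```python
CHARS_PER_TOKEN = 4  # rough heuristic for English prose; an assumption, not a tokenizer


def estimate_tokens(text: str) -> int:
    """Cheap upper-ish estimate of how many tokens a text will occupy."""
    return len(text) // CHARS_PER_TOKEN + 1


def fits_budget(texts: list[str], context_window: int, reasoning_reserve: float = 0.5) -> bool:
    """True if the sources leave at least `reasoning_reserve` of the window free."""
    source_budget = int(context_window * (1 - reasoning_reserve))
    return sum(estimate_tokens(t) for t in texts) <= source_budget
```

With an assumed 200,000-token window, five files of 2,000 eighty-character lines fail this check, while fifteen files of 50 such lines pass easily, which is the point about file count being a crude proxy.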

The honest answer is that the threshold moves with every model release, every context window expansion, and every improvement to how models handle long inputs. What I can say from running this pattern across dozens of projects:

When context is comfortable: the agent produces specific, evidence-backed analysis with direct quotes and precise numbers from the source material.

When context is exhausted: the agent produces generic summaries, rounds numbers, loses quotes, and starts hedging. "As discussed earlier" is the tell. If you see that phrase, your context is full.

The transition between these states isn't gradual. It's a cliff. You're fine, you're fine, you're fine, and then the next document tips you over and the output quality craters.
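Because the cliff is sudden, a cheap automated check for the tell phrases can flag an output before anyone reads it closely. A minimal sketch, assuming a simple regex scan is good enough: the first two patterns are phrases quoted in this article, and the third is an assumed variant you would tune to your own outputs.

```python
import re

# First two patterns come from the article; the third is an assumed variant.
TELL_PATTERNS = [
    r"\bas discussed earlier\b",
    r"\bas mentioned in an earlier document\b",
    r"\bas (?:noted|mentioned) (?:above|earlier|previously)\b",
]
TELL_RE = re.compile("|".join(TELL_PATTERNS), re.IGNORECASE)


def find_tells(output: str) -> list[str]:
    """Return every phrase in the output that suggests the context was exhausted."""
    return [match.group(0) for match in TELL_RE.finditer(output)]
```

An empty list proves nothing on its own, but a non-empty one is a good reason to rerun the task with fewer sources per agent.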

The Failure Mode Nobody Warns You About

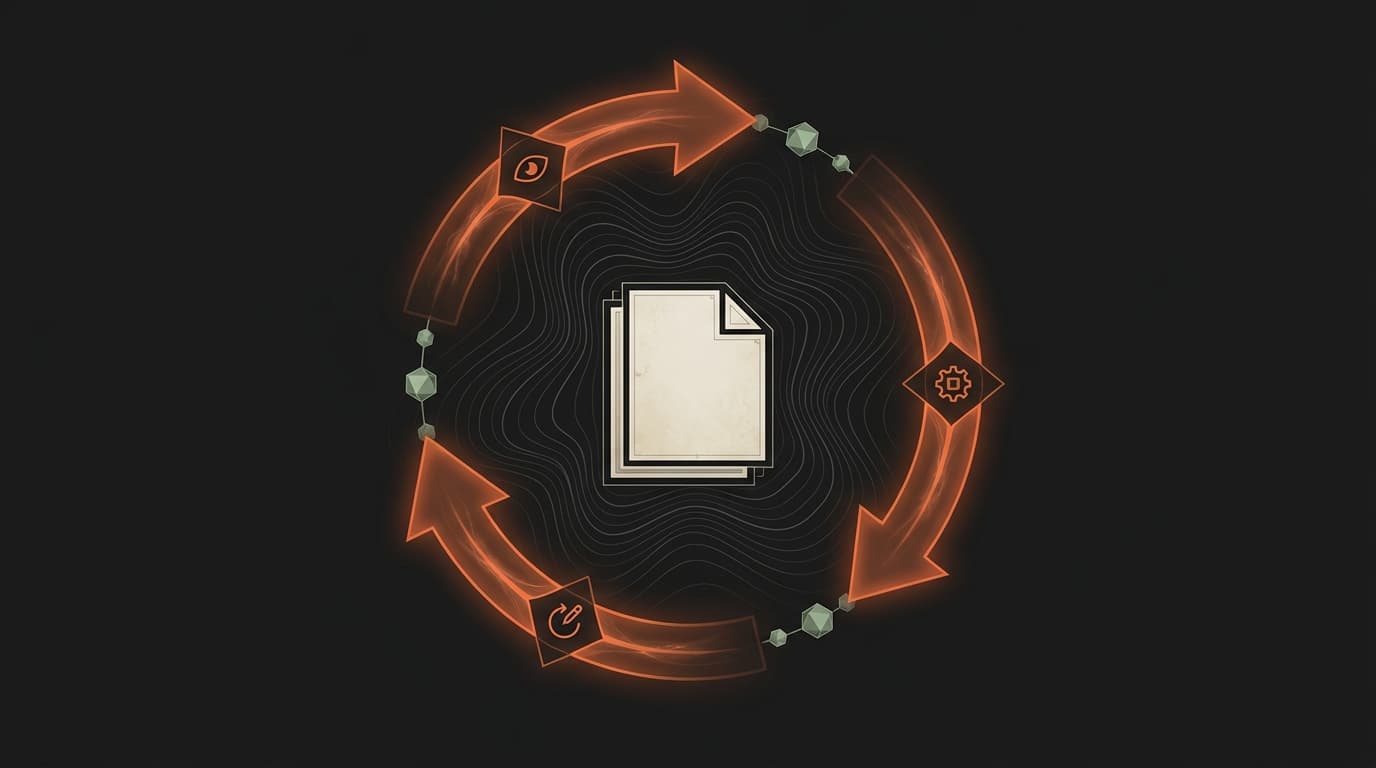

The most surprising failure I hit repeatedly wasn't context management. It was sub-agent persistence.

Sub-agents produce excellent analysis and then fail to save it.

This happened in multiple projects before I codified the rule: every sub-agent MUST write output to disk using an explicit file write operation. Background agent return messages are NOT reliably persisted.

What this looks like in practice: you launch three sub-agents to extract insights from source documents. The agents run, produce thoughtful analysis, and return their results through the agent communication channel. But the synthesis agent can't reliably access those return messages because they may be summarized, truncated, or lost during context management.

The fix is brutally simple. Every sub-agent writes a file. The synthesis agent reads files. Agent-to-agent communication goes through disk, not through conversation context. The filesystem is the message bus.

This is the same principle as file-based state for PRDs: conversations are unreliable. Files are permanent. Use files.
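The persistence rule is easy to enforce mechanically: write each note explicitly, then refuse to start synthesis until every expected file exists and has content. The helper names below (`write_note`, `missing_notes`) are hypothetical, and the atomic-rename step is an addition of this sketch rather than something the rule itself requires.

```python
import os
import tempfile
from pathlib import Path


def write_note(path: Path, text: str) -> Path:
    """Write a working note atomically so a reader never sees a half-written file."""
    path.parent.mkdir(parents=True, exist_ok=True)
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        handle.write(text)
    os.replace(tmp_name, path)  # atomic rename within the same directory
    return path


def missing_notes(expected: list[Path], min_chars: int = 1) -> list[Path]:
    """Return the notes that are absent or too short to be real output."""
    return [
        p for p in expected
        if not p.is_file() or len(p.read_text(encoding="utf-8").strip()) < min_chars
    ]
```

If `missing_notes` returns anything, rerun those sub-agents before launching the synthesis agent instead of letting it work from a partial set.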

The Analogy That Makes It Click

Database administrators figured this out decades ago.

When a complex query hits a database repeatedly, you don't recompute the full result every time. You create a materialized view: a pre-computed snapshot of the expensive query, stored in a table. Future queries hit the materialized view instead of scanning the raw data.

Working notes are materialized views for AI agents. Each sub-agent "materializes" the expensive operation (reading and distilling thousands of lines of source material) into a compact representation on disk. The synthesis agent "queries" these materialized views instead of scanning the raw sources.

Same pattern, same reason: separate the expensive read from the analysis that depends on it. Pre-compute once, query the smaller result set repeatedly.

When Not to Use It

MapReduce has overhead. The MAP phase adds one extra step (launching sub-agents, waiting for them to write, verifying output files). For tasks with a handful of short source documents, this overhead isn't worth it.

It's also not the right pattern when the source material is homogeneous. If all your sources say the same thing from the same perspective, MapReduce doesn't add value because there's no diversity to preserve. Just read the documents directly.

And it doesn't help with quality problems that aren't context-related. If the analysis is wrong because the reasoning is wrong, MapReduce won't fix it. It only fixes the case where the reasoning is degraded because the context is exhausted.

One surprise finding: even with modest source material, multiple overlapping sources on the same topic benefit from MapReduce. When several documents discuss the same topic from different angles, the synthesis agent develops recency bias: later documents overwrite impressions from earlier ones, even when they're all in context. MapReduce fixes this because each sub-agent handles a distinct subset, and the synthesis agent sees all perspectives simultaneously in the working notes.

That said, the overhead is small (typically one extra phase in a multi-phase project, a few extra agent calls) and the quality difference is large. When in doubt, use MapReduce. The cost of unnecessary MapReduce is 15 minutes. The cost of context exhaustion is a failed analysis that takes hours to diagnose and redo.

The Upstream Fix: Organize Before You Split

MapReduce solves the execution problem. But there's an upstream problem it can't fix: if your source material is a flat folder of random files, even well-split sub-agents waste context on irrelevant content.

After burning through three different file organization schemes (flat folders, one giant synthesis doc, flat metadata tags), I landed on a three-tier hierarchy:

Tier 0: Foundation. A handful of canonical documents that define ground truth. Core positioning. Buyer personas. Product capabilities. Small, authoritative, rarely changed.

Tier 1: Domain synthesis. Rolled-up intelligence documents, one per domain. Customer pain points synthesis. Competitive intelligence synthesis. Each one aggregates insights from dozens of source documents into a single, manageable reference. These are what agents should read first.

Tier 2: Detail. Individual call transcripts, competitor profiles, market reports. The raw material. Agents only descend here when they need specific quotes or validation data that the synthesis doesn't cover.

The hierarchy solves two problems at once. First, agents read synthesis documents (Tier 1) instead of raw sources (Tier 2), which means they get pre-distilled intelligence without burning context on narrative filler. Second, when an agent does need to go deeper, the synthesis document tells it exactly which detail document to read, with no brute-force search required.

Each document carries YAML frontmatter (pain points, personas, topics, priority level) so agents can query metadata to find relevant files instead of scanning contents. And a strict find-before-create rule prevents file sprawl: before creating any new document, search for an existing one covering the same ground. If you find a 70% match, update instead of forking.
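Here is a rough sketch of metadata lookup and the find-before-create check. Several things in it are assumptions: the field name `topics`, the choice to measure "match" as Jaccard overlap between topic lists, and the tiny parser, which only handles flat `key: value` and `key: [a, b]` lines. Real YAML frontmatter needs a proper YAML parser.

```python
from typing import Optional


def parse_frontmatter(text: str) -> dict[str, object]:
    """Parse a simplified frontmatter block delimited by `---` lines."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta: dict[str, object] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break
        key, sep, value = line.partition(":")
        if not sep:
            continue
        value = value.strip()
        if value.startswith("[") and value.endswith("]"):
            meta[key.strip()] = [v.strip() for v in value[1:-1].split(",") if v.strip()]
        else:
            meta[key.strip()] = value
    return meta


def topic_overlap(a: list[str], b: list[str]) -> float:
    """Jaccard overlap of two topic lists: an assumed stand-in for 'match'."""
    set_a, set_b = set(a), set(b)
    if not set_a or not set_b:
        return 0.0
    return len(set_a & set_b) / len(set_a | set_b)


def find_before_create(
    new_topics: list[str], existing: dict[str, dict], threshold: float = 0.7
) -> Optional[str]:
    """Return the best existing document at or above the threshold, else None."""
    best_name, best_score = None, 0.0
    for name, meta in existing.items():
        topics = meta.get("topics")
        if not isinstance(topics, list):
            continue
        score = topic_overlap(new_topics, topics)
        if score > best_score:
            best_name, best_score = name, score
    return best_name if best_score >= threshold else None
```

A returned file name means "update this one"; `None` means it is safe to create a new document.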

This sounds like overhead. It's worth it. It's the difference between an agent that sounds like it read Wikipedia and one that sounds like it's been at the company for a year. The maintenance cost is real (a few hours a week keeping synthesis docs current), but the alternative, flat folders with no structure, costs zero maintenance and produces dramatically worse output.

The Broader Pattern

Context management is the infrastructure layer of AI-native operations. It's not exciting. It doesn't make for good demos. You can't tweet "we added context budgets to our execution system" and get engagement.

But it's the single highest-leverage architectural decision you'll make.

Across every project where the output was shallow, generic, or missing evidence it should have contained, the root cause was the same: too much source material in one context window. Not bad prompts. Not wrong instructions. Not model limitations. Physical context constraints producing degraded output. And the fix is always architectural (organize, then split, then synthesize), never "try harder."

If you're building AI-native operations and your outputs are occasionally shallow, generic, or missing evidence they should contain: check your context budget before you blame the model. The model is probably fine. The context window is probably full.