Every Friday, I publish a Baltimore family events newsletter. It covers 100-180 events per week across egg hunts, museum programs, tween hangouts, farm visits, and free community festivals. The question that used to eat my Friday mornings: which 15-20 events actually deserve the spotlight?

I used to do this manually. Skim the database, pick the ones that felt right, write the descriptions. It worked. But "felt right" doesn't scale, and I kept noticing the same bias: I'd over-index on events my own kids would like and under-represent the toddler crowd, the budget-conscious families, and the plan-ahead parents who need two weeks of lead time to get anything on the calendar.

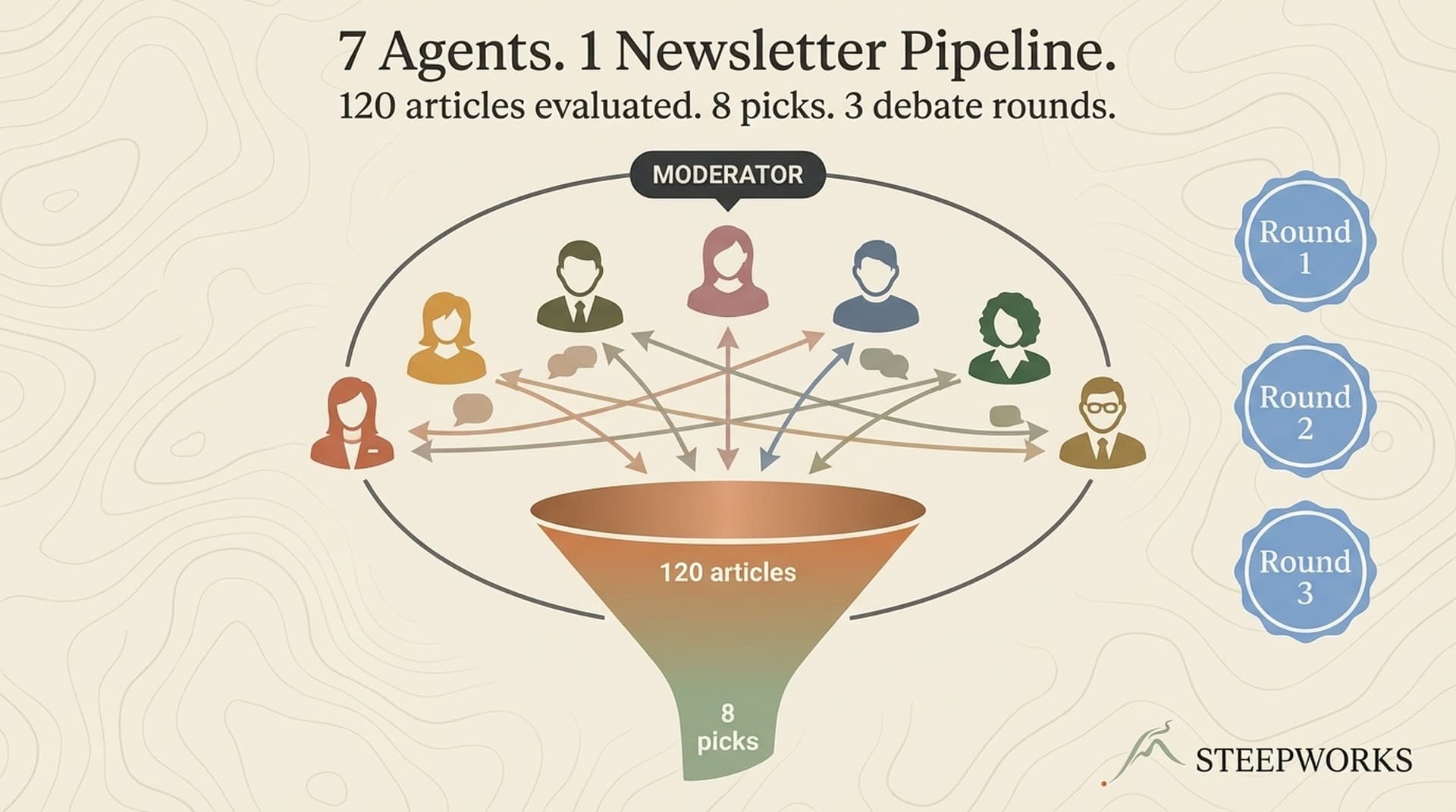

So I built a system where five AI agents — each representing a different parent archetype — independently evaluate every event, then debate each other in three structured rounds before a consensus score determines placement. The output is a scored, section-mapped newsletter draft that I edit in about 20 minutes.

Here's exactly how it works.

The Architecture: 10 Phases, 5 Agents, 3 Rounds

The pipeline runs as a single orchestrated session inside Claude Code (a pattern I expanded in 7 AI Agent Workflow Examples From a Live Newsletter Pipeline). No external API calls to manage, no separate inference endpoints. The agents are spawned as team members within one conversation, each with their own persona prompt, memory files, and evaluation constraints.

Supabase Query (148 events) + Victor's Editorial Mandates

↓

Team Setup (5 persona agents + neutral moderator)

↓

Round 1: Quick Takes (parallel, independent)

↓

Round 2: Tension Exploration (moderator-led, sequential)

↓

Round 3: Final Consensus (parallel)

↓

Consensus Scoring (formula applied)

↓

Section Assignment (priority cascade)

↓

Victor's Manual Insertions (this weekend + plan-ahead)

↓

Newsletter Draft (writer agent)

↓

Link Audit + HTML Generation

↓

Agent Evolution (memory updated, blind spots logged)

The critical design decision: agents debate before scoring happens. The scoring formula is applied to Round 3 positions, not Round 1. This means the debate actually changes outcomes — it's not decorative.

The Five Agents

Each agent is a fully realized parent persona with a specific editorial lens:

| Agent | Character | Core Question |

|---|---|---|

| Weekend Warrior | Sarah, 38 — kids 5 & 8, works full-time | "What's THE thing we should do this Saturday?" |

| Budget-Conscious | Marcus, 41 — three kids ages 4, 7, 11 | "What's the best bang for our buck?" |

| Toddler Wrangler | Jamie, 34 — 2-year-old, pregnant with #2 | "Can we actually do this with a toddler?" |

| Tween Entertainer | David, 45 — kids 10 & 12 | "Will they tell their friends or roll their eyes?" |

| Plan-Ahead Planner | Christina, 39 — Google Calendar power user | "What should I put on the calendar NOW?" |

These aren't vague "helpful assistant" prompts. Each agent has a multi-page profile covering their family situation, evaluation criteria, known blind spots, and voice. Jamie doesn't just check if an event says "all ages" — she evaluates whether there's a meltdown exit route, whether it fits a nap window (9-11am or 3-5pm), and whether she can realistically manage a stroller on that terrain.

David runs a "lame test" — will a 12-year-old tell their friends about this, or would they rather stay home? A petting zoo scores zero with David. A robot racing competition? That's a STRONG_PICK.

The Speech Act System

Agents don't just pick or skip. They express intensity through four speech acts:

- STRONG_PICK — "This is the one. Drop everything else."

- RECOMMEND — "Solid pick, would feature it."

- CONDITIONAL — "Good event, but only if [specific condition]."

- SKIP — "Not for my audience this week."

This granularity matters in scoring. A STRONG_PICK from the domain authority (David picking a tween event, Marcus picking a free event) carries more weight than a generic RECOMMEND.

The Three-Round Debate

Round 1: Quick Takes (Parallel)

All five agents evaluate every event simultaneously and independently. No agent sees another's picks. Each shares their top 5-8 events with reasoning, plus notable skips.

Sarah might open with: "I've gone through everything — the Irvine Nature Center egg hunt is the ONE thing I'd tell my mom friends about on Monday morning. Perfect Saturday morning energy, outdoor, the kids can run free while we hold coffee."

Meanwhile, Marcus is flagging the same event but noting the $15/kid admission: "Solid event but I need to be real — for a family of five, that's $75 before you factor in the gift shop gauntlet at the exit."

Round 2: Tension Exploration (Moderated)

This is where it gets interesting. A sixth agent — the moderator — analyzes Round 1 output and identifies specific tensions. The moderator isn't a persona. It has no family, no preferences, no editorial lens. Its only job is to find the productive disagreements and force the panel to resolve them.

The moderator identifies 3-4 tensions:

- Disagreements: Sarah STRONG_PICKed an event David SKIPped. Why?

- Lonely picks: Only Christina flagged an event. Is everyone else wrong, or is it niche?

- Coverage gaps: Nobody picked anything for budget families this week.

It sends targeted questions to specific agents: "Marcus, Sarah gave Irvine Nature Center a STRONG_PICK but you flagged the cost. Can you make the budget case for or against?"

Agents respond to each other by name. They change positions when persuaded. In one issue, Jamie originally SKIPped a helicopter egg drop event ("loud noises + toddler = no"), but after Sarah argued the spectacle value for older siblings, Jamie shifted to CONDITIONAL: "If you have a 5+ year old who would love it, bring noise-canceling headphones for the little one."

The success criterion for Round 2: At least 3 agents changed position on at least 1 event.

Round 3: Final Consensus (Parallel)

Each agent submits their final position table — every event, final speech act, and any position changes from the debate. Only Round 3 positions feed the scoring formula.

The Consensus Scoring Formula

Here's where qualitative debate becomes quantitative ranking:

Base Score: 50 points (every event that ANY agent picked starts here)

Agent breadth: +15 per additional agent beyond the first

Speech act: +10 per STRONG_PICK

Evidence depth: +5 per pick with 3+ evidence fields

Authority: +10 when a domain expert picks their specialty

Recency: -10 if featured in the past 4 weeks

Cap: 100 points

The authority bonus is the key mechanic. Each agent has a domain:

| Agent | Authority Domain |

|---|---|

| Jamie (Toddler Wrangler) | Baby/toddler/preschool events |

| David (Tween Entertainer) | Elementary/tween events |

| Marcus (Budget-Conscious) | Free events |

| Christina (Plan-Ahead) | Registration-required events |

When Marcus STRONG_PICKs a free event, that's 50 (base) + 10 (STRONG_PICK) + 10 (authority) = 70 even if no other agent touches it. The system trusts domain expertise.

A Real Scoring Example

From a recent issue, here's the actual consensus matrix the system produced:

| Event | Sarah | Marcus | Jamie | David | Christina | Score | Section |

|---|---|---|---|---|---|---|---|

| Irvine Nature Center | REC | SP | SP | - | - | ~95 | Top Pick |

| FVFAC Food Trucks Easter | SP | - | REC | REC | REC | ~90 | Quick Weekend Wins |

| Dinosaur Park | REC | SP | - | SP | - | ~90 | Quick Weekend Wins |

| Clark's Elioak Farm | SP | COND | SP | SKIP | REC | ~90 | Toddler |

| Magnolia Farms | SP | COND | - | SKIP | SP | ~85 | Ticketed |

| Arise Easter Event | - | REC | SP | - | REC | ~80 | Toddler |

| Brooklyn Park Egg Hunt | COND | SP | COND | - | - | ~75 | Budget |

| Harvester Baptist | - | SP | REC | - | - | ~75 | Budget |

| Robot Races | SKIP | - | - | SP | REC | ~75 | Tween |

| Easter FRESH | REC | - | - | COND | COND | ~70 | Also This Weekend |

| Home Depot Workshop | COND | REC | - | SKIP | - | ~65 | Local Intel |

| KidStock (plan-ahead) | - | - | - | - | SP | ~65 | Mark Your Calendar |

| Mr. Trash Wheel (plan-ahead) | - | - | - | - | REC | ~55 | Mark Your Calendar |

Read this table column-by-column and you see five distinct editorial perspectives. Read it row-by-row and you see consensus emerging. Irvine Nature Center hit 95 because three agents independently flagged it with strong speech acts. Mr. Trash Wheel scored 55 because only Christina caught it — but Christina's job is to catch plan-ahead events everyone else misses, so it still earns a section slot.

Notice Clark's Elioak Farm: David SKIPped it ("petting zoo, my kids would mutiny"), Marcus gave it a CONDITIONAL ("$16/kid adds up"), but Jamie and Sarah both STRONG_PICKed it and Christina RECOMMENDed it. The system correctly routes it to the Toddler section — David's skip doesn't kill it, it just means tweens aren't the audience.

Section Assignment: Priority Cascade

Events don't just land in sections by score. There's a priority cascade:

- Top Pick (score 90-100, 4+ agents agree) — 1 event, rarely 2

- Quick Weekend Wins (75+, 3+ agents including Sarah) — 3-4 events

- Ticketed This Weekend (75+, Christina flags registration) — 2-3 events

- Budget-Friendly Finds (75+, Marcus flags free) — 2-3 events

- For Toddler Families (60+, Jamie exclusive picks) — 2-3 events

- Tween-Approved (60+, David exclusive picks) — 2-3 events

- Mark Your Calendar (60+, Christina only, 2+ weeks out) — 2-3 events

- Also This Weekend (40-69, unclaimed) — 8-12 one-liners

Once an event is claimed by a higher-priority section, it can't appear lower. Top Pick wins absolutely. The specialty sections (Toddler, Tween) only receive events not already claimed upstream.

This solves a real editorial problem: without the cascade, a great free toddler event would appear in Budget, Toddler, AND Weekend Wins. The cascade forces a single placement that respects the event's strongest signal.

The Human Layer: Editorial Mandates and Manual Insertions

The agents don't operate in a vacuum. Before any agent is spawned, I feed in editorial mandates for that issue — must-include events and exclusions. Maybe a venue reached out about a new program. Maybe I went to something last weekend and know it's worth featuring. Maybe there's a seasonal event the scrapers haven't caught yet. Those go in as mandates, and every agent sees them alongside the database query results.

But the more interesting part happens after the agents finish. I review the consensus matrix and the draft, and I add events the agents missed entirely. The system tracks these as "manual additions" — blind spots in the persona filtering that no agent caught. Sometimes it's an event that fell through the scraper cracks. Sometimes it's something I know about from living here that doesn't show up in any database.

These insertions go into two places:

This weekend insertions — events I'm featuring regardless of agent consensus. They get woven into the appropriate section based on type (free goes to Budget, toddler-specific goes to Toddler Families, etc.) but bypass the scoring formula entirely. My editorial judgment overrides the matrix when I have signal the agents don't.

Plan-ahead insertions — events 2-4 weeks out that need early visibility. Registration deadlines, events with sellout history, seasonal programs that open booking windows. Christina (the Plan-Ahead agent) catches many of these, but she can only work with what's in the database. I catch the ones that come from local knowledge, parent group chatter, or direct outreach from venues.

The system explicitly logs these manual additions so the agents can learn from them. After each issue, the evolution phase records which events I added that no agent suggested — and those blind spots feed back into the next issue's evaluation. Over time, the agents get better at catching what I used to add manually.

The Part Nobody Talks About: Agent Evolution

After every issue, every agent's memory gets updated. This is what makes the system compound rather than reset each week.

Each agent maintains:

- Learning rules — evaluation patterns that proved correct

- Event angle patterns — framing approaches that survived my editing

- Logistics tips bank — verified venue-specific practical knowledge

- Voice signatures — phrases I kept vs. rewrote

- Forbidden patterns — things I consistently removed

The evolution quality gate: every new learning must name the specific teammate who taught it. Jamie can't just add "I learned to consider older siblings." She has to write: "Sarah's argument about spectacle value for 5+ year-olds changed my evaluation of loud outdoor events — I now give CONDITIONAL instead of SKIP when there's a clear age split."

The team also maintains cross-persona patterns — 10 pairwise relationship entries tracking how agents influence each other. Over 12 issues, Marcus and Jamie developed a reliable dynamic: Marcus identifies free events, Jamie stress-tests them for toddler feasibility, and their combined endorsement almost always produces a Budget section hit.

What This Actually Produces

The newsletter that lands in inboxes reads like a single editorial voice — no agent names, no scoring jargon, no debate transcripts. Just a parent who somehow evaluated 148 events and picked the 18 that matter most, organized by what kind of family you are and what kind of weekend you want.

But behind that single voice is a structured disagreement process that catches my blind spots every single week. The system finds toddler gems I'd overlook (I don't have a toddler anymore). It flags plan-ahead events I'd miss because I'm focused on this Saturday. It surfaces tween options that wouldn't register for a parent of younger kids.

The consensus matrix is the artifact that makes it work. Not because the math is sophisticated — it's deliberately simple. But because it forces five different evaluation lenses to resolve into a single ranked output, and it does it transparently enough that I can audit any placement decision in 10 seconds.

The Technical Stack

For those who want to build something similar:

- Data layer: Supabase (PostgreSQL) with event scrapers feeding ~500 events/week

- Orchestration: Claude Code with Team Mode (5 persona agents + neutral moderator)

- Agent framework: Each agent is a markdown persona file + evolved overlay + persistent memory

- Scoring: Deterministic formula applied post-debate (no AI in the scoring step)

- Output: Markdown draft → Beehiiv HTML email + website page

- Evolution: JSON memory files updated after every issue, versioned in git

The entire pipeline runs in one Claude Code session. No external API management, no inference endpoint juggling, no vector database. The agents share a conversation context, which means Round 2 debates reference actual Round 1 quotes — not summaries of summaries.

Total cost per issue: roughly one extended Claude session. The evolution files are the only persistent state — everything else rebuilds from the database query each week.

What I'd Do Differently

Start with three agents, not five. The first version had three personas and worked fine. I added Tween and Plan-Ahead when I noticed consistent gaps in the three-agent output. Build for the gaps you observe, not the architecture you imagine.

The debate round matters more than the scoring formula. I spent too long tuning point values and not enough time on Round 2 moderation prompts. The quality of the tensions the moderator surfaces determines the quality of the position changes, which determines whether the final scores reflect genuine editorial judgment or just vibes.

Agent memory is the moat. After 12 issues, my agents know that the Maryland Zoo's "Breakfast with the Bunny" sells out 3 weeks early (Christina learned this the hard way in Issue 4). They know that Lowe's Build & Grow is free but has a 30-minute wait that makes it a hard sell for toddler families (Marcus and Jamie figured this out together in Issue 7). This accumulated knowledge doesn't exist in any database field — it lives in the evolved overlays and gets applied automatically.

I write about building AI systems that do real work — not demos, not proofs of concept. If this is your kind of thing, STEEPWORKS Weekly covers the intersection of AI and go-to-market every week, built by a similar multi-agent system.

This workflow runs on Claude Code for GTM — the same stack that powers every STEEPWORKS skill and agent.