We accidentally deleted 194 high-value contacts from our CRM.

Not junk contacts. Not old trial signups. High-value contacts at companies in our ideal customer profile. The kind of contacts that take months of relationship building to earn. Suddenly invisible.

The deletion happened during a routine cleanup. An automated filter caught more records than expected. Nobody reviewed the full list before executing. The system did exactly what it was told. We told it wrong.

We rebuilt everything. Not just the contact data. The entire approach to CRM operations.

The new system deduplicates tens of thousands of contacts and companies across five matching layers. It has a 9-check decision tree, a 7-point protection framework, canary batches, kill switches, monitoring pauses between batches, and rev ops sign-off requirements.

And zero automated writes.

Every merge. Every deletion. Every field update. Produced as a CSV file that a human reviews manually before triggering execution. The scripts produce the plan. It's not perfect, but a person pulls the trigger.

This is what paranoid-by-design CRM operations look like.

Why Paranoia Is Architecture

CRM data is different from most data an AI system handles. When an AI generates bad copy, you rewrite it. When an AI produces a bad analysis, you redo it. When an AI deletes or merges the wrong CRM records, you've corrupted the record of relationships that took months to build. That can break downstream automations, pollute attribution data, and potentially violate data handling agreements.

CRM operations are irreversible in practice. Yes, you can restore from backups. But the cascade of broken associations, orphaned activities, disrupted workflows, and confused sales reps creates damage that no restore fully undoes.

The 194-contact incident taught us this viscerally. We recovered the contacts, but the activity histories were disrupted and the trust in our data quality took a hit.

After that, we adopted a principle: AI handles the analysis, humans handle the execution.

The Five Matching Layers

Deduplication sounds simple until you try it. "John Smith at Acme Corp" appears as "John Smith" with email john@acme.com, "J. Smith" with email jsmith@acme.io, and "John C. Smith" with LinkedIn /in/johnsmith and no email. And the LinkedIn URL may be stored with http in one record and https in another.

Same person. Three different records. No single field matches exactly. The permutations are endless.

The dedup engine runs five matching layers, each catching duplicates the previous layers miss:

Layer 1: Exact email match. If two contacts share the same email address, they're duplicates. This catches the obvious cases. ~20% of total duplicates.

Layer 2: Normalized LinkedIn match. LinkedIn URLs come in dozens of formats: with /in/, without, with trailing slashes, with country prefixes, URL-encoded. Normalize to a canonical form and match. This catches contacts who used different emails at different companies but kept the same LinkedIn profile. ~15% of duplicates.

Layer 3: Fuzzy name + company match. Levenshtein distance on first name, last name, and company name with configurable thresholds. "John Smith at Acme Corp" matches "Jon Smith at Acme Corporation." This is where false positives become a risk, so every match gets a confidence score and anything below threshold goes to the REVIEW tier instead of the proposed-merge list. ~30% of duplicates.

Layer 4: Domain association. If two contacts have the same email domain and similar names, they're likely the same person at the same company. Catches cases where company name is entered differently but the domain is consistent. ~20% of duplicates.

Layer 5: Company name match. At the company level, "Acme Corp" and "Acme Corporation" and "ACME" and "acme.com" all need to resolve to the same entity. Name normalization, domain matching, and a parent/subsidiary check that blocks merges when employee counts diverge by more than 5x (prevents merging a subsidiary into its parent). ~15% of duplicates.

Each layer runs independently. The outputs merge into a unified duplicate report sorted by confidence score, with tier assignments for each pair.
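A minimal sketch of three of the pieces described above: LinkedIn URL normalization (Layer 2), a Levenshtein-based confidence score (Layer 3), and the 5x employee-count guard (Layer 5). The function names, the suffix list, the equal weighting of the three similarity scores, the `PROPOSED_MERGE` label, and the 0.85 threshold are all assumptions for illustration, not the values our engine uses.

```python
from typing import Optional
from urllib.parse import unquote, urlparse

# Hypothetical values for illustration; tune against hand-labelled pairs.
MERGE_THRESHOLD = 0.85
LEGAL_SUFFIXES = {"corp", "corporation", "inc", "llc", "ltd", "co"}


def normalize_linkedin(url: str) -> Optional[str]:
    """Reduce a LinkedIn profile URL to a lowercase profile slug."""
    raw = unquote(url.strip())
    if "//" not in raw:
        raw = "//" + raw  # lets urlparse read a bare host as the netloc
    parsed = urlparse(raw)
    host = parsed.netloc.lower()
    if host != "linkedin.com" and not host.endswith(".linkedin.com"):
        return None
    parts = [p for p in parsed.path.split("/") if p]
    if "in" in parts:
        parts = parts[parts.index("in") + 1:]
    return parts[0].lower() if parts else None


def levenshtein(a: str, b: str) -> int:
    """Classic edit distance, computed one row at a time."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + (ca != cb)))
        prev = curr
    return prev[-1]


def similarity(a: str, b: str) -> float:
    """Edit distance scaled to 0..1, where 1.0 means identical."""
    a, b = a.strip().lower(), b.strip().lower()
    longest = max(len(a), len(b))
    return 1.0 if longest == 0 else 1 - levenshtein(a, b) / longest


def normalize_company(name: str) -> str:
    """Lowercase the name and drop common legal suffixes."""
    words = [w.strip(".,") for w in name.lower().split()]
    return " ".join(w for w in words if w and w not in LEGAL_SUFFIXES)


def fuzzy_confidence(a: dict, b: dict) -> float:
    """Average similarity of first name, last name, and company."""
    scores = [
        similarity(a["first"], b["first"]),
        similarity(a["last"], b["last"]),
        similarity(normalize_company(a["company"]), normalize_company(b["company"])),
    ]
    return sum(scores) / len(scores)


def tier_for(confidence: float) -> str:
    """Below the threshold, a pair goes to REVIEW rather than the merge list."""
    return "PROPOSED_MERGE" if confidence >= MERGE_THRESHOLD else "REVIEW"


def blocks_company_merge(employees_a: int, employees_b: int, max_ratio: float = 5.0) -> bool:
    """True when head counts diverge by more than max_ratio (or are unknown)."""
    small, large = sorted((employees_a, employees_b))
    return small <= 0 or large / small > max_ratio
```

With these assumptions, "John Smith at Acme Corp" against "Jon Smith at Acme Corporation" scores about 0.92 and lands in the proposed-merge list. Even there, nothing is written to the CRM: the pair is only a row in the report a human reviews. Treating a missing employee count as a blocked merge is a conservative choice made for this sketch.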

Next up for us is extending de-dupe into activity records.

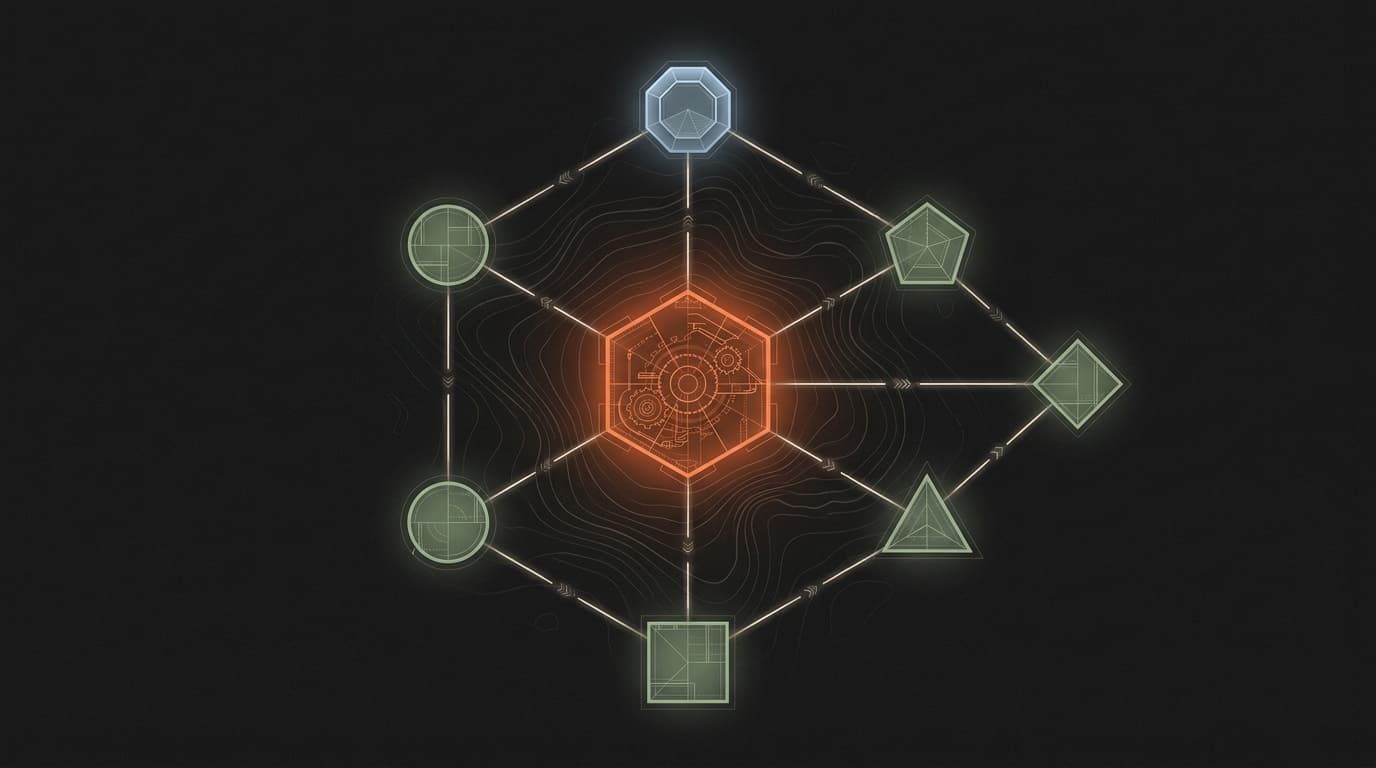

The Four-Tier Classification

Every contact in the CRM gets one of four classifications:

PROTECTED_HIGH. Active deals, open opportunities, MQLs, SQLs, contacts with recent meeting activity, champion-tagged contacts. These records cannot appear in any deletion set under any circumstances. The system doesn't ask. It blocks.

PROTECTED_STANDARD. ICP-fit contacts (scored Tier 1-3), contacts with any pipeline history, contacts with 3+ logged activities. These require explicit review if they appear in a merge or deletion scenario. Default action: keep.

REVIEW. Contacts that don't clearly belong in either protected tier or deletion candidacy. Low activity, uncertain ICP fit, duplicate candidates with medium confidence. A human reviews these individually.

DELETE_CANDIDATE. Contacts that fail all 9 decision tree checks. No ICP fit. No pipeline history. No recent activity. No deal association. No logged meetings. No email engagement. These are the records that enrichment tools auto-push at high volume that never converted to anything.

The 9-check decision tree evaluates: ICP score, deal association, pipeline history, activity recency, email engagement, meeting history, list membership, lifecycle stage, and lead source quality. A contact must fail ALL 9 checks to reach DELETE_CANDIDATE. One passing check is enough to route to REVIEW.
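The routing rules above can be sketched roughly as follows. The `Contact` fields, the 180-day recency window, and the lifecycle stage names are hypothetical stand-ins; only the tier names, the nine check categories, and the "fail all 9" rule come from the description above.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Contact:
    # Hypothetical fields; map them to whatever your CRM export provides.
    icp_tier: Optional[int] = None          # 1-3 means ICP fit
    has_open_deal: bool = False
    has_pipeline_history: bool = False
    days_since_activity: Optional[int] = None
    activity_count: int = 0
    email_engaged: bool = False
    has_meeting: bool = False
    recent_meeting: bool = False
    on_protected_list: bool = False
    lifecycle_stage: str = "subscriber"
    trusted_lead_source: bool = False
    champion: bool = False


# Each check returns True when the contact PASSES, i.e. shows a reason to keep it.
CHECKS = {
    "icp_score": lambda c: c.icp_tier in (1, 2, 3),
    "deal_association": lambda c: c.has_open_deal,
    "pipeline_history": lambda c: c.has_pipeline_history,
    "activity_recency": lambda c: c.days_since_activity is not None
    and c.days_since_activity <= 180,  # assumed window
    "email_engagement": lambda c: c.email_engaged,
    "meeting_history": lambda c: c.has_meeting,
    "list_membership": lambda c: c.on_protected_list,
    "lifecycle_stage": lambda c: c.lifecycle_stage in {"mql", "sql", "opportunity", "customer"},
    "lead_source_quality": lambda c: c.trusted_lead_source,
}


def classify(c: Contact) -> Tuple[str, List[str]]:
    """Return the tier plus the names of the checks the contact passed."""
    passed = [name for name, check in CHECKS.items() if check(c)]
    if c.has_open_deal or c.lifecycle_stage in {"mql", "sql"} or c.recent_meeting or c.champion:
        return "PROTECTED_HIGH", passed
    if c.icp_tier in (1, 2, 3) or c.has_pipeline_history or c.activity_count >= 3:
        return "PROTECTED_STANDARD", passed
    # A single passing check is enough to keep the record out of deletion.
    return ("REVIEW" if passed else "DELETE_CANDIDATE"), passed
```

Returning the list of passed checks alongside the tier is a design choice for the sketch: it gives the reviewer a reason column in the CSV instead of a bare label.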

The Kill Switches

Four conditions that stop all operations immediately:

Any protected record appears in a delete set. If a PROTECTED_HIGH or PROTECTED_STANDARD contact shows up in a deletion batch, something is wrong with the classification logic. Full stop. Investigate before continuing.

Pipeline-relevant counts drop unexpectedly. After each batch, compare pipeline-relevant counts against pre-operation baselines. If any count decreases, a record with pipeline value was affected. Full stop.

API failure rate exceeds 2%. If more than 2% of API calls in a batch fail, the system is experiencing instability. Full stop. Don't continue operations against an unstable API.

Data reconciliation mismatches. After each batch, run a reconciliation query between your CRM and any mirrored databases. If any mirrored record references a CRM ID that no longer exists, the deletion created an orphan. Full stop.

These aren't graceful degradation. They're hard stops. When a kill switch fires, the answer is never "continue with the remaining records." The answer is always "investigate, identify root cause, fix, and restart the batch from zero."
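One way to express the four conditions is as checks that raise instead of returning a status, so a caller cannot quietly carry on. This is a sketch under assumptions: the `KillSwitch` exception, the function names, and the argument shapes are hypothetical, and the inputs would come from your own CRM export and mirror queries.

```python
class KillSwitch(Exception):
    """Raised to halt the run; the batch restarts from zero after a fix."""


def pre_batch_check(delete_ids, protected_ids):
    """Switch 1: no protected record may appear in a delete set."""
    overlap = set(delete_ids) & set(protected_ids)
    if overlap:
        raise KillSwitch(f"{len(overlap)} protected record(s) in delete set")


def post_batch_check(baseline, current, api_calls, api_failures,
                     mirrored_crm_ids, live_crm_ids, max_failure_rate=0.02):
    """Switches 2-4, run after every batch."""
    # Switch 2: any pipeline-relevant count below its pre-operation baseline.
    for name, before in baseline.items():
        after = current.get(name, 0)
        if after < before:
            raise KillSwitch(f"count '{name}' dropped from {before} to {after}")

    # Switch 3: API failure rate above 2% for the batch.
    if api_calls and api_failures / api_calls > max_failure_rate:
        raise KillSwitch(f"API failure rate {api_failures / api_calls:.1%}")

    # Switch 4: mirrored records pointing at CRM IDs that no longer exist.
    orphans = set(mirrored_crm_ids) - set(live_crm_ids)
    if orphans:
        raise KillSwitch(f"{len(orphans)} orphaned mirror record(s)")
```

Raising an exception, rather than logging and skipping the offending rows, mirrors the rule that a fired switch never means "continue with the remaining records."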

The Canary Protocol

Don't run full batches on day one.

Batch 1: 200 contacts (canary). Review results individually. Verify no unexpected deletions. Check all reconciliation queries. If clean, proceed.

24-hour monitoring pause. Watch for downstream effects. Broken workflows. Missing deal associations. Sales team complaints about missing contacts. Anything unexpected.

Batch 2+: 500 contacts each. Each batch requires a fresh manual invocation. The script doesn't auto-continue. Between each batch: reconciliation check, count comparison, and sales leadership sign-off.

The 24-hour pause isn't about technical verification. It's about human verification through daily workflows. The pause catches situations where a sales rep says "where did my contact go?" the next morning.
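A rough sketch of the batch split, assuming the approved deletion list is a plain list of contact IDs. The file naming and the `plan_batches` / `write_batch_files` helpers are hypothetical. The point is that the script only writes one CSV per batch; each file still has to be picked up and run by a person.

```python
import csv
from pathlib import Path


def plan_batches(ids, canary_size=200, batch_size=500):
    """Split IDs into one canary batch followed by fixed-size batches."""
    batches = [ids[:canary_size]]
    for start in range(canary_size, len(ids), batch_size):
        batches.append(ids[start:start + batch_size])
    return [b for b in batches if b]


def write_batch_files(batches, out_dir):
    """Write each batch to its own CSV; nothing here touches the CRM."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    paths = []
    for n, batch in enumerate(batches, start=1):
        label = "canary" if n == 1 else "batch"
        path = out / f"{n:02d}_{label}.csv"
        with path.open("w", newline="") as fh:
            writer = csv.writer(fh)
            writer.writerow(["contact_id"])
            writer.writerows([cid] for cid in batch)
        paths.append(path)
    return paths
```

For 1,350 approved deletions this yields batches of 200, 500, 500, and 150. There is deliberately no loop that executes them in sequence.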

The Enrichment Inflow Problem

Here's the upstream problem that makes all of this necessary.

Most B2B teams use enrichment tools that auto-push new contacts to the CRM. These tools push at volume: 50-100 new contacts per day is common. Most of these contacts are low-quality: wrong ICP, wrong persona, wrong geography. But they all land in the CRM because the push is configured at the integration level, not the contact level.

This means your CRM accumulates roughly 1,500-3,000 new contacts per month, most of them junk. After 12 months, that's tens of thousands of records, of which maybe 10% have any pipeline relevance.
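The arithmetic behind those figures, as a quick sketch. The 30-day month and the `inflow_estimate` helper are assumptions; the daily range and the 10% relevance share are the rough numbers quoted above.

```python
DAYS_PER_MONTH = 30      # assumption: calendar days, not business days
RELEVANT_PERCENT = 10    # rough share with any pipeline relevance


def inflow_estimate(daily_low=50, daily_high=100, months=12):
    """Project auto-pushed contact volume as (low, high) ranges."""
    monthly = (daily_low * DAYS_PER_MONTH, daily_high * DAYS_PER_MONTH)
    total = (monthly[0] * months, monthly[1] * months)
    relevant = tuple(n * RELEVANT_PERCENT // 100 for n in total)
    return {"monthly": monthly, "total": total, "relevant": relevant}


estimate = inflow_estimate()
print(estimate["monthly"])   # (1500, 3000)
print(estimate["total"])     # (18000, 36000)
print(estimate["relevant"])  # (1800, 3600)
```

On these assumptions, a year of unfiltered inflow leaves 18,000-36,000 records in the CRM with only 1,800-3,600 worth keeping, which is why sealing the inflow matters as much as the cleanup itself.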

The cleanup isn't a one-time project. It's a recurring operation. As part of this work, you need to seal the inflow: pause auto-push, evaluate per-contact push criteria, and monitor the new contact rate.

Without solving the inflow problem, deduplication is Sisyphean. You clean the data, and 100 new junk records arrive tomorrow.

What This Actually Looks Like

Monday morning. The dedup engine ran over the weekend in analyze mode. It produced:

- A CSV of duplicate pairs sorted by confidence score (0.95 to 0.55)

- A CSV of DELETE_CANDIDATE contacts that failed all 9 checks

- A summary showing 0 PROTECTED_HIGH contacts in either list

- A flag that 3 PROTECTED_STANDARD contacts appeared in the duplicate merge list (routed to REVIEW)

I open the DELETE_CANDIDATE CSV. Spot-check 50 records across the confidence distribution. Verify the 3 flagged PROTECTED_STANDARD records and decide: keep them unmerged.

I message the head of sales with the merge pairs and deletion candidates. They review the top 100, confirm the pattern matches their expectations, sign off.

I run the canary batch: first 200 deletions. Check reconciliation. Wait 24 hours. No complaints from sales.

Wednesday: next 500. Check. Wait.

Friday: remaining batch. Check. Wait.

Following Monday: full re-export. Pre-cleanup total minus deleted equals current count. All ICP contacts confirmed present.

This takes a week. An automated system could do it in an hour. But an automated system is what deleted 194 contacts last time.

The week is worth it.

The Principle

AI is excellent at pattern matching, duplicate detection, confidence scoring, and classification. It can process tens of thousands of contacts faster and more consistently than any human.

AI is terrible at absorbing the downstream consequences of getting a CRM operation wrong. It doesn't feel the sales rep's frustration. It doesn't understand that a contact represents six months of relationship building. It doesn't know that deleting a "low-activity" contact who happens to be an executive's assistant will close a door that took a year to open.

Humans are slow at analysis and fast at consequence reasoning. AI is fast at analysis and blind to consequences.

Design the system accordingly. Let AI do what it's good at (analysis) and humans do what they're good at (judgment about consequences). Connect them with files, not automation.

The scripts produce the plan. A person pulls the trigger.