title: "Anti-Slop: 15 Patterns I Check Before Any AI Content Ships"
slug: anti-slop-15-patterns
seo_keyword: "AI content quality"
meta_description: "AI content quality checklist: 15 anti-slop patterns from 1,270+ skill invocations. Em-dashes, tricolons, filler openers, and 12 more AI tells."
og_description: "Last week I caught 'It's worth noting' three times in a single draft. Each time, the sentence after it was stronger without it. Here are the 15 anti-slop patterns I check before anything ships."
cluster: content-operations
author: Victor
status: published
published_date: 2026-03-26
read_time_minutes: 14
description: "Anti-Slop: 15 Patterns I Check Before Any AI Content Ships"
domain: steepworks
type: article
updated: 2026-03-26

Anti-Slop: 15 Patterns I Check Before Any AI Content Ships

The Draft That Almost Shipped

Last week I caught "It's worth noting" three times in a single draft. Each time, the sentence that followed was stronger without it. The phrase was doing nothing except signaling "an AI wrote this."

I deleted all three instances. Saved 14 words. The piece read better. And I almost didn't catch them, because I was reading for substance, not for tells.

I use AI to produce content every day. Research, first drafts, structural outlines, editing passes. AI is in every layer of how I work. That's not the problem. The problem is that AI has tells, and those tells erode the one thing content is supposed to build: trust.

AI content quality is no longer optional. The Antislop research paper (Paech et al., ICLR 2026) found that some slop patterns appear over 1,000x more frequently in LLM output than human text. That's not a subtle difference. That's a fingerprint.

More articles are now written by AI than humans (Graphite, Nov 2025). About 17% of the top 20 Google results are AI-generated (Originality.ai). When AI content is the majority, the differentiator isn't whether you use AI. It's whether your readers can tell.

This isn't editorial aesthetics. When your prospects read five AI-generated competitor posts before they reach yours, the one that reads like a human wrote it earns the trust. That trust converts to pipeline. Slop doesn't just look bad. It costs you deals, because prospects choose the company whose content feels like it came from someone who actually does the work. I've watched it happen. A prospect told me they picked our proposal over a competitor's partly because "your content sounded like someone who actually runs this stuff." The competitor's blog had better SEO. Ours had better voice.

Wikipedia's "Signs of AI Writing" is the best detection resource on the internet. TechCrunch called it exactly that. But detection isn't enough when you're shipping 20 posts a month. You need the fix for each pattern, and you need a workflow where each month's content comes out cleaner than the last. That's what these 15 patterns provide: detection, remediation, and a system that compounds.

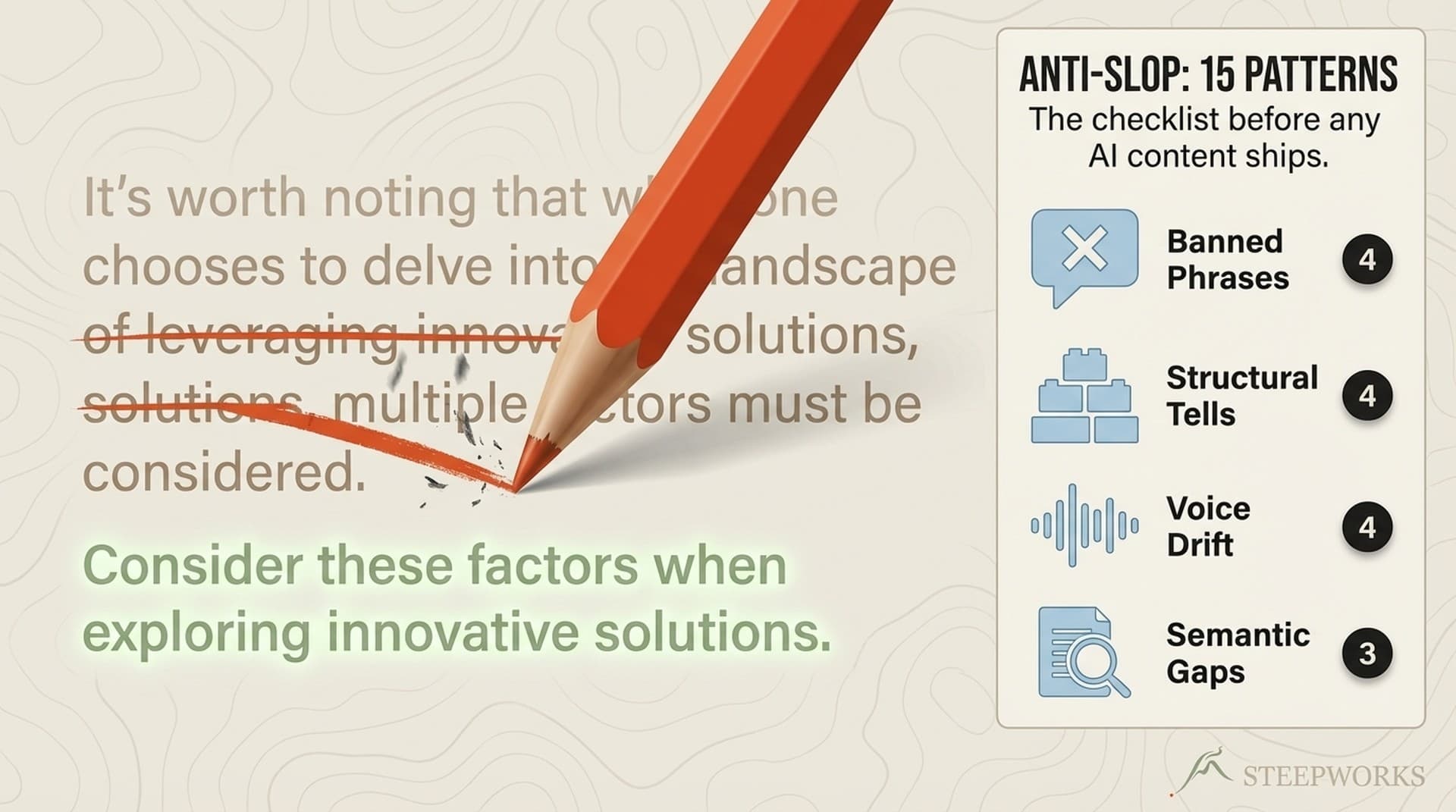

Over 2.5 years of shipping AI-assisted content, I've built a checklist. 15 patterns across four categories. Here they are.

Category 1: Banned Phrases (Patterns 1-4)

The most immediately actionable category. Phrases that should never appear in published content. Each one fails because it adds words without adding meaning, signals AI authorship to attentive readers, or both. They're the content equivalent of a watermark.

Pattern 1: Filler Openers

What it looks like: "It's worth noting that..." "It's important to recognize that..." "What this means is..."

Why it's bad: These phrases promise the reader something important is coming, then deliver the sentence that was already going to follow. They're verbal throat-clearing. Remove the opener and the sentence is always tighter. I ran a count across 20 AI-generated first drafts last quarter. Average filler openers per 2,000-word piece: seven. Readers who've spent any time with AI-generated content will clock these instantly.

The fix: Delete the opener. Start with the substance. "It's worth noting that revenue dropped 15% in Q3" becomes "Revenue dropped 15% in Q3." If the fact is worth noting, note it. Don't announce that you're noting it.

Pattern 2: Abstract Framing Words

What it looks like: "This represents a paradigm shift..." "The tension between X and Y..." "This paradox reveals..." "This dichotomy validates..."

Why it's bad: Abstract framing words perform analysis without doing any. They sound intellectual but communicate nothing specific. "This represents a paradigm shift" tells the reader you think something is important. It never tells them why. Wikipedia's editors identified "overemphasis on importance" as one of the most common AI writing signs (Wikipedia: Signs of AI Writing).

The fix: Replace with cause and effect. "This represents a paradigm shift in content marketing" becomes "Companies that used to publish twice a month now publish twice a day, and the editorial review that used to catch errors doesn't exist anymore." Show the shift. Don't label it.

Pattern 3: Generic Enthusiasm

What it looks like: "Revolutionary approach..." "Game-changing solution..." "Transformative impact..." "Cutting-edge technology..."

Why it's bad: These words have been drained of meaning by three decades of marketing copy and two years of LLM output. They trigger immediate skepticism in experienced B2B readers. A VP of Marketing who sees "game-changing" is less likely to keep reading, not more.

The fix: Replace with specific claims. "Game-changing analytics platform" becomes "Identifies at-risk deals 14 days before they go dark." The specific claim is both more credible and more useful. If you can't replace the enthusiasm with a specific claim, the enthusiasm was covering for a lack of substance.

Pattern 4: Formal Transitions

What it looks like: "Furthermore..." "Moreover..." "Subsequently..." "Additionally..." "Consequently..."

Why it's bad: These transitions signal academic or AI-generated text. Humans use simpler connectors or just start the next sentence. Nobody says "furthermore" in conversation. Nobody writes it in a good email. It creates distance between writer and reader.

Formal transitions are one of the highest-frequency AI tells. Wikipedia's editors flagged them as a primary detection signal, and any reader who's spent time reviewing AI drafts will recognize the register instantly. Your content stops sounding like you and starts sounding like a language model being polite.

The fix: "Also," "And," "So," "Then," or just start the next sentence without a transition. If the connection between sentences isn't clear without a formal transition, the problem is the logic, not the connector. Fix the logic.

Category 2: Structural Patterns (Patterns 5-8)

The patterns that survive phrase-level editing. You can scrub every banned phrase and still produce obviously AI-generated content if the structure is wrong. These are harder to detect and harder to fix.

Pattern 5: Tricolon Abuse

What it looks like: Everything comes in groups of three. "Speed, quality, and reliability." "Research, analyze, and execute." "Faster. Smarter. Better." Three adjectives, three bullet points, three examples, three everything. Consistently. Throughout the entire piece.

Why it's bad: The rule of three is a real rhetorical device. But AI uses it as a default, not a choice. When everything is a tricolon, nothing has emphasis. The rhythm becomes monotonous. Readers sense the pattern even if they can't name it. Charlie Guo's "Field Guide to AI Slop" specifically identified "default groupings of three" as a tell.

The fix: Vary your groupings. Use two items. Use four. Use one and let it stand alone. Save the tricolon for moments where three is genuinely the right number. When you do use three, make the third item surprising, not a predictable continuation of the first two. I wrote "faster, cheaper, and terrifyingly autonomous" in a recent piece. The third item breaks the rhythm on purpose. That's a tricolon earning its keep.

Pattern 6: Aphorism Endings

What it looks like: Sections that close with a fortune-cookie statement. "After all, the best content is content that connects." "In the end, quality speaks for itself." "Because at the end of the day, people buy from people they trust."

Why it's bad: These endings attempt to create closure and wisdom. But they're vacuous. They say nothing the reader didn't already believe before reading your article. Aphorism endings replace insight with sentiment. They're the written equivalent of a motivational poster.

The damage is specific: experienced readers lose trust at the exact moment you're trying to leave an impression. A VP of Marketing who's read 200 blog posts this quarter will unconsciously tag your content as "AI filler" when the closing is a platitude instead of a takeaway she can use.

The fix: End sections with your most specific point, not your most general one. If the section is about email personalization, end with "The emails that got replies referenced the prospect's last quarterly earnings call, not their job title." That's a specific, earned insight. "At the end of the day, personalization is what matters" is a platitude.

Pattern 7: Em-Dash Saturation

What it looks like: "AI tools -- including research and writing assistants -- generate outputs that require -- at minimum -- human review before publication." Multiple em-dashes per paragraph, used as all-purpose interrupters.

Why it's bad: Em-dashes are legitimate punctuation. But AI uses them at 3-5x the rate of human writers. They've become the single most recognizable AI writing tell. The Antislop researchers cataloged em-dash overuse as one of the patterns appearing 1,000x more frequently in LLM output than human baselines.

The fix: Maximum 1-2 em-dashes per article. For parenthetical information, use actual parentheses. For lists within sentences, use "including" or "whether via." For dramatic pauses, use a period and start a new sentence. The dramatic pause of a full stop is more powerful than an em-dash anyway.

Pattern 8: Colon-Sentence Construction

What it looks like: "The result: generic content fails." "Here's the key insight: quality matters more than quantity." "The bottom line: invest in editorial review."

Why it's bad: AI models trained to avoid em-dashes overcorrected into colon-sentence constructions. They perform the same function (creating dramatic pauses and signaling "important point ahead") but they've become equally predictable. The pattern is recognizable because it's mechanical: every "important" conclusion gets the same colon runway.

Your readers see the structure repeating and start skimming, which is the opposite of what the dramatic pause was supposed to achieve. Maximum 2-3 per article before readers notice.

The fix: Just state the fact. "The result: generic content fails" becomes "Generic content fails." The colon-sentence is a runway. Cut the runway.

Category 3: Voice Violations (Patterns 9-12)

Slop that lives in the register, not the syntax. These patterns make content sound like it was written by a persona, not a person. They're the hardest to automate away because they require judgment about who the writer is and who they're not.

Pattern 9: Guru Language

What it looks like: "Unlock the secrets to..." "Master the art of..." "Discover the hidden framework..." "Transform your approach..."

Why it's bad: Guru language promises revelation. B2B content should deliver information. When a VP of Marketing reads "unlock the secrets," they hear a course pitch, not a peer sharing what they've learned. It positions the writer as above the reader, which breaks the practitioner solidarity that builds trust.

The fix: Replace revelation with observation. "Unlock the secrets to better content" becomes "Here's what I've found works after testing 40 different editorial workflows." The observation is both more credible and more useful. It invites engagement instead of worship.

Pattern 10: Hustle Culture Contamination

What it looks like: "10x your content output..." "Crush your competitors..." "Growth hack your editorial process..." "Grind through your content calendar..."

Why it's bad: Hustle culture language alienates the exact audience B2B content serves: experienced operators who've been through enough hype cycles to be allergic to it. It signals inexperience. Nobody who has actually scaled a content operation talks about "crushing" the competition. They talk about publishing consistently and measuring what works.

The fix: Replace intensity with specificity. "10x your content output" becomes "We went from 4 posts per month to 12 by automating research and first drafts." The specific claim is more impressive and more believable than the multiplier. Experienced readers trust numbers. They distrust round-number multipliers.

Pattern 11: Consulting-Speak Contamination

What it looks like: "Leverage your content assets..." "Optimize stakeholder alignment..." "Synergize cross-functional inputs..." "Drive holistic content strategy..."

Why it's bad: Consulting-speak creates distance. It makes a blog post read like a slide deck. Your audience is operators who DO things, not consultants who ADVISE on things. Every piece of consulting jargon puts another layer of abstraction between the writer and the reader. The reader came for a specific answer. Don't make them translate from McKinsey-speak to get it.

The fix: Use the verb the operator would use. "Leverage your content assets" becomes "Reuse your best-performing posts." "Optimize stakeholder alignment" becomes "Get your VP to agree on the content calendar." The simpler version is always clearer and usually shorter.

Pattern 12: Passive Authority Voice

What it looks like: "Research suggests..." "Studies have shown..." "It has been demonstrated that..." "Experts agree..."

Why it's bad: Passive authority voice sounds objective but communicates nothing. Which research? Which studies? Which experts? When AI uses these phrases, it's almost always because the underlying model doesn't have a specific source. The EBU/BBC study found significant issues in 45% of AI assistants' news responses, partly because they cite authority without having it. B2B readers have been trained to distrust uncited authority claims.

The fix: Either cite the specific source or own the opinion. "Studies show that content quality matters" becomes "BCG's 2025 study of 1,250 firms found that only 5% achieve AI value at scale" or "In my experience, quality beats volume every time." Both are more honest than hiding behind vague authority.

Category 4: Content Sins (Patterns 13-15)

The deepest layer. These aren't about how the words read. They're about whether the content is honest. These patterns survive even aggressive editorial review because they look professional on the surface.

Pattern 13: Fabricated Precision

What it looks like: "Companies that implement this approach see a 47% improvement in engagement." "Teams report 3.2x faster content production." No source. No methodology. No sample size. Just a precise-sounding number that appeared from nowhere.

Why it's bad: AI generates plausible-sounding statistics. Readers know this. Every uncited metric in your content now carries the implicit question: "Did an AI make this up?" The EBU/BBC study found major accuracy issues in 20% of AI assistants' news responses. Fabricated precision is the content version of hallucination, and it's the slop pattern most likely to damage your credibility permanently.

The fix: Every number needs a source or an honest label. "Our research shows 47% improvement" (with methodology), "[Estimated from internal data]," or just remove the fake number and state the claim qualitatively: "Teams that automate research consistently ship faster." The qualitative claim is more honest and nearly as persuasive.

Pattern 14: Stat Recycling

What it looks like: The same statistic appears 3-4 times throughout a piece. "78% of teams struggle with content quality" in the intro, then again in section 3, then again in the conclusion. Same number, slightly different framing each time.

Why it's bad: AI anchors on a strong statistic and reuses it because it's the most relevant data point in its context window. But repeating a stat makes content feel thin, like the writer only had one fact and used it as wallpaper. It also makes the piece feel like it was built to support a single number rather than built from genuine analysis.

The fix: On first use, cite the stat with its source and full context. On later references, use a vivid metaphor instead. Say the stat is "78% of alerts go uninvestigated." Cite it once, then call back to it as "the triage paralysis that no new tool can dig them out of." The metaphor is more memorable and avoids the wallpaper effect.

Pattern 15: Section-Level Pitch Slapping

What it looks like: An entire paragraph or section that could be lifted directly into a sales deck without modification. Problem framing that perfectly sets up your product. Language like "market category failure," "fundamental limitation," or your exact positioning statements embedded in supposedly editorial content.

Why it's bad: B2B readers have pitch detectors. They've sat through hundreds of vendor presentations. When editorial content starts sounding like a pitch deck, trust evaporates instantly. The content might be factually accurate and well-written on the surface, but the reader feels manipulated, and that feeling overrides everything else.

The fix: The sales deck test. Copy any suspicious paragraph into a sales deck. If it fits without modification, rewrite it. Editorial content should analyze the problem without prescribing your specific solution category. "The gap between AI adoption and AI value is growing" is editorial. "Only a systems-based AI content quality approach can close this gap" is pitch. The difference is whether the reader's next thought is "that's interesting" or "they're selling me something."

Running the Checks: How This Works in Practice

The full check takes me 8-10 minutes per 2,000-word piece. That's not a guess. I've timed it across dozens of articles. For context, that's less time than rewriting a paragraph that a prospect calls out as obviously AI-generated.

I don't run through all 15 patterns manually on every piece. The most common catches (Patterns 1-4, the banned phrases) are automated as search-and-replace in my editing workflow. I search the draft for the literal strings: "worth noting," "represents," "paradox," "revolutionary," "Furthermore," "Moreover." If any of them appear, I delete or rewrite on the spot.
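A search pass like this is easy to script. The following is a minimal sketch, not my actual tooling: the strings are the six listed above, while the category labels and the `find_banned` function name are assumptions made for illustration.

```python
import re

# Literal strings from the paragraph above. The labels are an illustrative grouping.
BANNED = {
    "worth noting": "filler opener",
    "represents": "abstract framing",
    "paradox": "abstract framing",
    "revolutionary": "generic enthusiasm",
    "furthermore": "formal transition",
    "moreover": "formal transition",
}


def find_banned(draft: str) -> list[tuple[int, str, str]]:
    """Return (line_number, phrase, label) for each case-insensitive hit."""
    hits = []
    for lineno, line in enumerate(draft.splitlines(), start=1):
        for phrase, label in BANNED.items():
            # \b avoids matching the phrase inside a longer word.
            if re.search(rf"\b{re.escape(phrase)}\b", line, flags=re.IGNORECASE):
                hits.append((lineno, phrase, label))
    return hits


if __name__ == "__main__":
    sample = (
        "It's worth noting that revenue dropped 15% in Q3.\n"
        "Furthermore, churn rose.\n"
        "Revenue dropped 15% in Q3."
    )
    for lineno, phrase, label in find_banned(sample):
        print(f"line {lineno}: '{phrase}' ({label})")
```

The script only reports hits. Deleting or rewriting stays a human decision, since a word like "represents" has legitimate uses.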

Structural patterns (5-8) require a quick visual scan. Read the piece looking at shape, not content. Are the bullet points all groups of three? Do sections end with platitudes? Are em-dashes everywhere? This takes 2-3 minutes.
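A rough count can back up the visual scan. The sketch below is a heuristic, not a detector: the thresholds come from the limits stated under Patterns 7 and 8, but the regular expressions, `structural_report`, and `over_limit` are assumptions and will produce false positives (a colon that introduces a list, for instance). It does not attempt to spot platitude endings.

```python
import re

# Limits quoted in the pattern descriptions; treat them as tunable.
MAX_EM_DASHES = 2        # "Maximum 1-2 em-dashes per article"
MAX_COLON_SENTENCES = 3  # "Maximum 2-3 per article before readers notice"

# The em-dash character, or the double hyphen used as a stand-in in plain text.
EM_DASH = re.compile("\u2014|--")
# A short capitalized lead-in (up to five words), a colon, then a lowercase word,
# for example "The result: generic content fails."
COLON_SENTENCE = re.compile(
    r"(?:^|(?<=[.!?]\s))[A-Z][\w']*(?:\s[\w']+){0,4}:\s+[a-z]", re.MULTILINE
)
# "X, Y, and Z" word lists. Misses one-word sentence triplets like "Faster. Smarter. Better."
TRICOLON = re.compile(r"\b[\w']+, [\w']+,? and [\w']+")


def structural_report(draft: str) -> dict[str, int]:
    """Count rough occurrences of three structural tells."""
    return {
        "em_dashes": len(EM_DASH.findall(draft)),
        "colon_sentences": len(COLON_SENTENCE.findall(draft)),
        "tricolons": len(TRICOLON.findall(draft)),
    }


def over_limit(report: dict[str, int]) -> list[str]:
    """Name the counts that exceed the limits above."""
    flags = []
    if report["em_dashes"] > MAX_EM_DASHES:
        flags.append("em_dashes")
    if report["colon_sentences"] > MAX_COLON_SENTENCES:
        flags.append("colon_sentences")
    return flags
```

Tricolons get a count but no limit, because the problem there is monotony across the piece rather than any single number.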

Voice violations (9-12) and content sins (13-15) require actual judgment. This is where the human editorial layer earns its keep. No regex catches guru language or pitch-slapping. You have to read with the question: "Would my most skeptical prospect trust this paragraph?"
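One narrow exception: stat recycling (Pattern 14) leaves a countable trace, even though the rewrite still needs judgment. As a sketch under that assumption, the hypothetical `recycled_stats` helper below surfaces percentages and multipliers that appear more than once. It cannot tell whether a repeat is justified, and it knows nothing about sources.

```python
import re
from collections import Counter

# Percentages ("78%") and multipliers ("3.2x", "1,000x"). Other number formats are ignored.
STAT = re.compile(r"\b\d[\d,]*(?:\.\d+)?(?:%|x\b)")


def recycled_stats(draft: str, max_uses: int = 1) -> dict[str, int]:
    """Return each stat that appears more than max_uses times, with its count."""
    counts = Counter(STAT.findall(draft))
    return {stat: n for stat, n in counts.items() if n > max_uses}


if __name__ == "__main__":
    sample = (
        "78% of teams struggle with content quality. "
        "Revenue dropped 15%. "
        "Remember, 78% of teams struggle."
    )
    print(recycled_stats(sample))
```

Anything the helper returns is a candidate for the metaphor swap described under Pattern 14, not an automatic rewrite.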

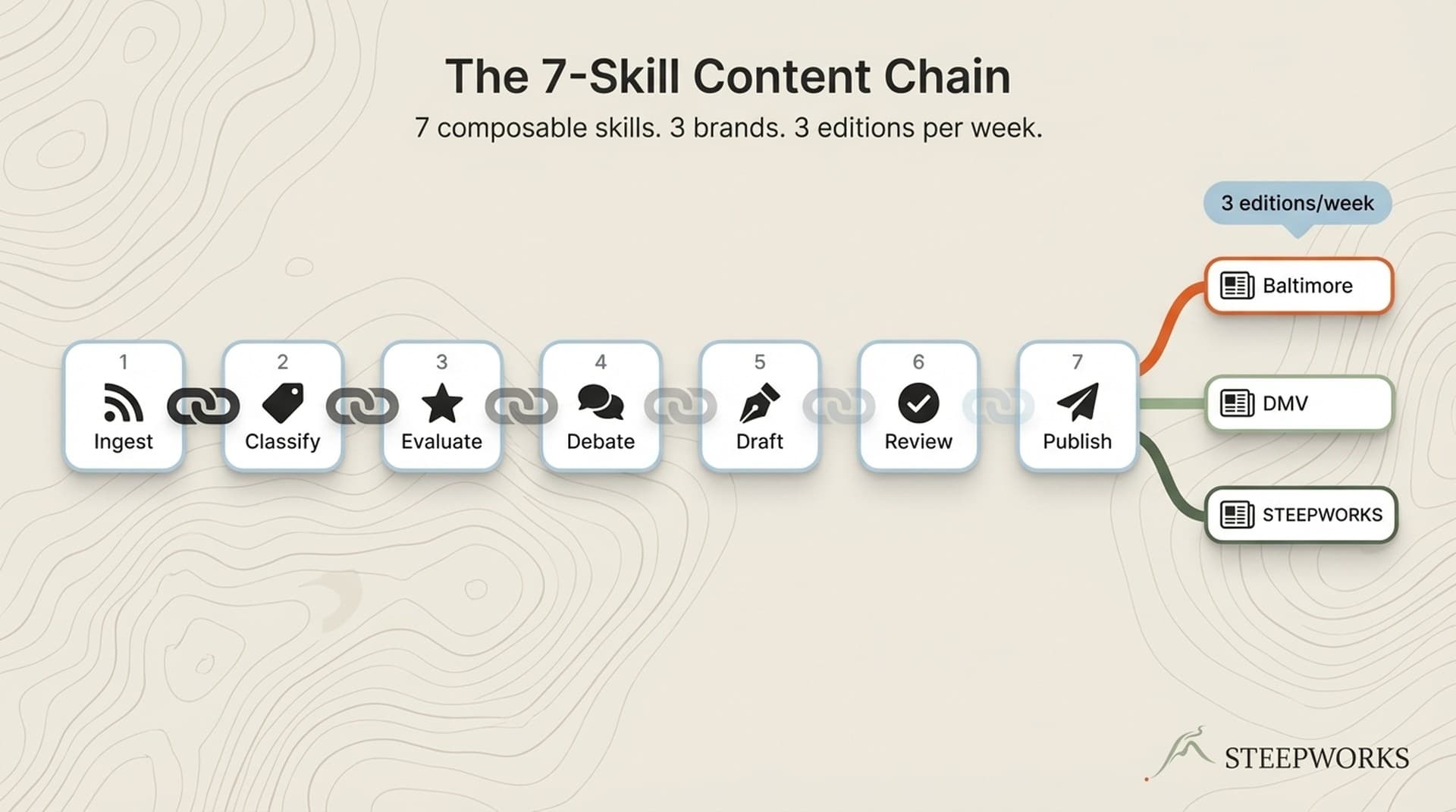

The compound effect is what makes this a system, not just a checklist. Running these checks consistently changes how AI generates content for you over time. When your system instructions include anti-slop rules, the AI's first drafts get cleaner. The checks don't just catch slop. They train the system that produces the drafts. A checklist catches errors. A system reduces them. That's the content production workflow I've been refining for 2.5 years.

Here's the proof that it compounds. When I started running these checks, I was catching 12-15 slop patterns per draft. Six months later, first drafts average 3-4 catches. Not because I lowered my standards. Because the AI's context now includes the anti-slop rules, and the system self-corrects before I even open the editing pass. The time investment in Month 1 pays off every month after.
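The feedback step can be sketched in a few lines. This is an illustration of the idea, not my production setup: the rule wording, the catch-log format, and `build_instructions` are all hypothetical. The point is only that the most frequent catches become explicit rules in the next draft's system instructions.

```python
from collections import Counter

# Hypothetical rule text keyed by pattern name. The wording is illustrative.
RULES = {
    "filler opener": "Do not open sentences with phrases like \"It's worth noting that\".",
    "formal transition": "Use \"Also\", \"And\", \"So\", or no transition instead of \"Furthermore\" or \"Moreover\".",
    "em-dash": "Use at most two em-dashes per article; prefer parentheses or a full stop.",
    "colon sentence": "State the fact directly instead of \"The result: generic content fails\".",
}


def build_instructions(catch_log: list[str], top_n: int = 3) -> str:
    """Turn the most frequent catches into a rules block for the next draft."""
    ranked = [name for name, _ in Counter(catch_log).most_common() if name in RULES]
    lines = ["Anti-slop rules (most frequent catches first):"]
    lines += [f"- {RULES[name]}" for name in ranked[:top_n]]
    return "\n".join(lines)


if __name__ == "__main__":
    # One entry per catch made while editing recent drafts (assumed log format).
    log = ["filler opener", "em-dash", "filler opener",
           "formal transition", "em-dash", "filler opener"]
    print(build_instructions(log, top_n=2))
```

Ranking by frequency keeps the instructions short, so the rules that matter most are not buried in a long list.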

TechCrunch validated the pattern-recognition approach: "The best guide to spotting AI writing comes from Wikipedia," not from automated detection tools. Anti-slop checks are editorial judgment, not software. I've tested three different AI detection tools. All of them flagged clearly human-written content as AI-generated at least some of the time, and all of them missed actual AI content that was well-edited. In my testing, pattern recognition by an experienced editor beat automated detection every time.

The honest caveat: I still miss things. These 15 patterns cover the most frequent and most damaging tells. But AI writing evolves, and so do the tells. The em-dash overuse that was obvious in 2024 is less common in 2026. New patterns emerge. The checklist is a living document, not a permanent solution. I add a new pattern roughly every two months and retire ones that stop being relevant.

AI Content Quality Is the Only Moat Left

When more articles are written by AI than humans, the content itself isn't the differentiator. The editorial layer is. The 15 patterns aren't about writing purity or anti-AI sentiment. They're about building trust in an environment where readers are increasingly skeptical of everything they read.

Here's what I've seen compound over time. An operator who catches slop in Month 1 ships cleaner drafts in Month 3 because the AI learns from the corrections. The editorial standards compound into the system. By Month 6, the AI's first drafts are genuinely better, not because the model improved, but because the context and instructions did.

The Antislop researchers found 90% slop reduction is achievable while maintaining quality. But that research focused on the model layer. For operators, the editorial layer is the lever. You don't need to fine-tune a model. You need to build a content system that catches the 15 patterns and feeds the corrections back into the next draft.

I think about it this way. Every competitor in your space is generating content with the same models you are. The models don't differentiate. The editorial system does. Two companies using the same LLM produce wildly different content quality depending on whether someone is running checks before publish. The 15 patterns are the checks. The workflow that feeds corrections back into drafting instructions is the system. And the system is where the moat actually lives.

I use AI for everything. I also check everything before it ships. Both statements are true, and they're not in conflict. AI content quality isn't about using less AI. It's about building the editorial rigor that makes AI content trustworthy.

Start with these 15 patterns. Add your own when you spot new tells. The checklist becomes a system when the corrections compound.

Victor Sowers builds AI-native GTM systems at STEEPWORKS. 15 years scaling B2B SaaS, two exits, and 2.5 years of production AI-in-GTM.