Should you go AI-native? Honestly what other choice is there?

But also...you can do so much.

I run growth for an AI cybersecurity company, consult with B2B companies on GTM strategy, operate a newsletter business curating family events across two metro areas, run another newsletter on AI for Operators, publish 100+ articles a year on AI-powered knowledge work, have built two spiritual guides written by historical-prophets-turned-AI-agents, and more. That's all been me, in a terminal window, talking to AI models that operate inside a repository. It's a crazy pace, but it's not totally unique either.

AI facilitates the rise of the generalist by compressing execution time and condensing skill development. On a personal level it can be a real unlock for your creativity and what you can build because you are far less constrained by whatever you already know how to do. Like, I'm not a developer. I'm a GTM operator who learned enough about systems to be dangerous. But now I can build websites, write database queries, ship production software, create data pipelines, generate crazy images, roll a music video.

It "just" takes AI fluency and the skills to know how to direct it (+grit/tenacity/curiosity because there are bumps).

Since I started writing more about my AI journey, I keep getting questions from other operators about how I got here. So I thought I'd take a stab at answering.

What This Actually Looks Like

I'll start with the end-state system that is my home-base right now because that is what enables a lot of this and because I tell people we can build their version of this together in 2-4 weeks.

The foundation is a single GitHub repository optimized for Claude Code (and usable from Codex or Antigravity) that acts as both a knowledge base and an operating system connected to other tools (e.g. CRM, email, Drive).

On the raw context side, my personal repository has about 3,000 markdown files (different repos for companies have more or less depending on what we are doing). Then of course there's a CLAUDE.md file that teaches the AI how to navigate the repo (think of it as onboarding documentation for your AI agent). Each folder or domain has its own sub-CLAUDE.md to give further instructions. There are 52 custom skills that encode repeatable workflows and chain together: research feeds content production, content production feeds publishing, publishing feeds analytics. On top of that sit various hooks for memory, chief-of-staff agent workflows, and so on, which is sort of the last mile. Ultimately the system compounds because every piece of work I do potentially improves the system itself.
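To make that layout a bit more concrete, here is a minimal Python sketch for taking stock of a repository shaped like this: it counts the markdown files and lists which folders carry their own CLAUDE.md. The function name `audit_repo` and the report keys are hypothetical, and matching the file name case-insensitively is an assumption, not something Claude Code itself does.

```python
from pathlib import Path


def audit_repo(root: str) -> dict:
    """Count markdown files and find folders that have a CLAUDE.md file."""
    root_path = Path(root)
    markdown_count = 0
    instruction_dirs = []
    for path in root_path.rglob("*.md"):
        rel = path.relative_to(root_path)
        # Ignore version-control internals.
        if ".git" in rel.parts:
            continue
        markdown_count += 1
        # Assumption: treat any casing of "claude.md" as an instruction file.
        if path.name.lower() == "claude.md":
            instruction_dirs.append(rel.parent.as_posix())
    return {
        "markdown_count": markdown_count,
        "instruction_dirs": sorted(instruction_dirs),
    }


if __name__ == "__main__":
    report = audit_repo(".")
    print(report["markdown_count"], "markdown files")
    for folder in report["instruction_dirs"]:
        print("CLAUDE.md in", folder)
```

Run from the repo root, this gives a quick read on how much context is in there and which domains have their own instructions. A folder listed as `.` is the repository root.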

So how do I work here? On any given day I have anywhere from 2 to 6 terminal windows open inside the repository, each running Claude Code against a discrete task/project. One might be executing a multi-phase content production plan. Another is doing prospect research. A third is building a website feature.

Working this way I don't touch UIs anymore for most tools. It's different. It's AI native.

How I got here

My journey started with diving into ChatGPT and learning how to prompt. Then it was building out projects. Then it was custom GPTs — saving system prompts, reusing them. It felt like a big deal at the time. It was also just a faster Google. Not a fundamentally different way of working. This was all focused on my core competencies of scaling GTM. Nothing on the personal side really.

Jordan Crawford's course was the first real level-up. A month or two in, I came across it (can't remember which of his courses). The value I got from buying a $1000 course (the only one I've ever forked over the cash for) was subtle but powerful. Watching Jordan's videos (many of which you can see for free) gave me a step-change in my understanding of how to build well-crafted deep research prompts, and of prompting in general. This was the era of prompt engineering. And while context engineering has rightfully supplanted it, the ability to refine plans/prompts remains underrated.

Beyond that skill and some templates to work from, Jordan's comfort in talking to the AI (talking not typing which is an underrated hack for generating good results), iterating, and not taking himself too seriously somehow gave me permission to get out of my own way and play.

Then came literally deploying AI for GTM: Clay, research workflows, synthesis. I was like the human tissue stitching together prompt chains and workflows, and it was great.

This led to 6 months of building with my former co-founder at GoStudy which exposed me to the edges of what was possible and how to wrap AI inside more deterministic code. Useful. Not vital.

That work stopped. And then Claude Code dropped.

Claude Code and the terminal chasm was the biggest inflection point. It's both the biggest unlock and, to my mind, the single requirement to be AI native today (pick Codex or Antigravity over Claude Code if you want, but terminal fluency with AI is what I mean). Basically, if you want to be serious today, this is where you need to be.

But the early days, as foggy as they already seem, were daunting. And the most daunting part of it all was literally the first step.

I mean holy shit, just installing Node.js, Python, an IDE, opening a terminal, and running something on the command line to install Claude was SUPER daunting. The literal actual technical implementation of all the things to get Claude Code running on a computer is a huge barrier to someone who has never touched code or a terminal. Then it's basic navigation and fluency — things like literally setting up the IDE appearance and columns for non-coding work.

This is where Jacob Dietle was so kind to set me up. No expectation, no cost. He just sat down with me and walked through everything and got me up and running. He and I have worked together for nine months since, so it turned out to be a good trade-off but that's definitely not why he did it.

Skills, agents, and structured workflows were what made it compound. Once you're in the terminal there are three blockers. The first is terminal/IDE fluency and getting your setup right (appearance etc.). The second is understanding the 5-6 basic patterns and working modes (plan mode, clearing context, reviewing markdown files etc.). The third is getting comfortable running multiple terminals at the same time.

The final frontier is personalizing a repository with your context. Who you are, what you want to do (business or personal), what tools you want it to talk to. Setting up GitHub for version control, and then starting to use skills before ultimately building your own.

You do all this and at this point you're past the current blockers and I would consider you well and truly launched and capable of moving yourself forward on any given project or new technology shift we're going to see. That's because it all compounds and you've crossed the chasm!

The Blank Canvas Problem

Here's what actually stops people.

A blank canvas is tough. When someone who has never touched a terminal opens Claude Code for the first time, it's just a cursor blinking at you. No buttons. No menus. No suggested prompts. Most people I've set up — I'd say 20 out of 30 — hit this wall and stall unless they get 1-2 extra coaching sessions. They can see the potential. They've watched the demos. They believe it works. But they open the terminal, type something vague, get a mediocre response, and close it. There's still just too much that has to happen to cross the chasm.

Two things get people unstuck.

You need a clear idea of what you want to build. Most people come to AI tools with vague intentions — "I want to be more productive" or "I want to use AI for my job." That's not enough. You need a specific project. A specific output. "I want to build a prospect research system that scores accounts against these pain criteria" is something the AI can help you build. "Help me be better at sales" is not.
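To show how specific that kind of brief is, here is a minimal Python sketch of the scoring step, assuming each pain criterion can be reduced to a few keywords and a weight. Everything in it is hypothetical (the `Criterion` class, `score_account`, the example criteria and weights). A real system would lean on the AI's research and judgment, not keyword matching.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    name: str
    keywords: tuple[str, ...]  # phrases that signal this pain (hypothetical)
    weight: float              # how much this pain matters to you


def score_account(notes: str, criteria: list[Criterion]) -> float:
    """Return the share of total weight (0.0 to 1.0) whose keywords appear in the notes."""
    text = notes.lower()
    total = sum(c.weight for c in criteria)
    if total == 0:
        return 0.0
    matched = sum(
        c.weight
        for c in criteria
        if any(keyword.lower() in text for keyword in c.keywords)
    )
    return matched / total


if __name__ == "__main__":
    # Made-up criteria, purely for illustration.
    criteria = [
        Criterion("audit pressure", ("compliance audit", "soc 2"), 3.0),
        Criterion("tool sprawl", ("too many tools",), 1.0),
    ]
    print(score_account("They have a SOC 2 deadline in Q3.", criteria))  # 0.75
```

The point is less the code than the shape of the request: named criteria, explicit weights, and a defined output are things an AI can build against.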

You need context to make it your own. The AI needs good context to work from: who you are (or where you work), plus whatever other data makes your situation unique. Then you need a CLAUDE.md file with some tuned instructions so the AI knows what context it has and how to use it.

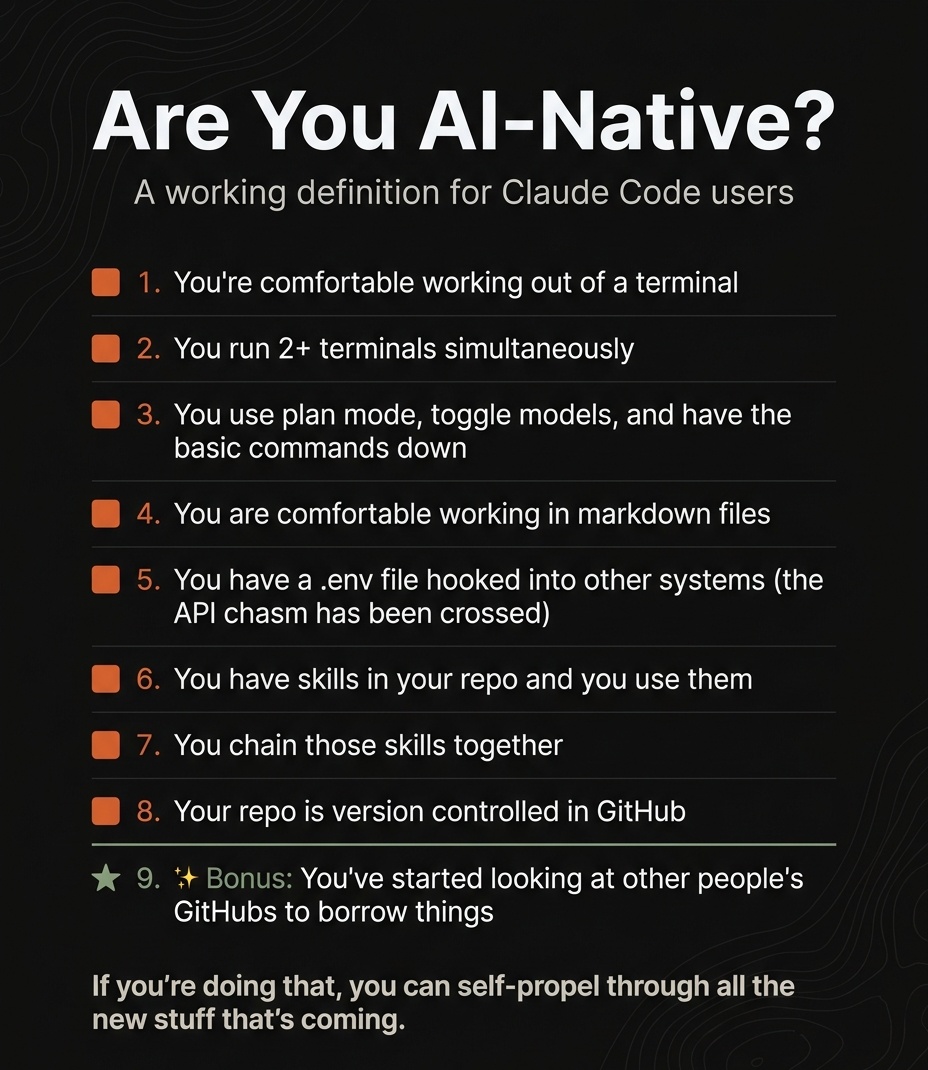

You need to invest the time to cross the chasm. From what I've seen, you know you've crossed it when: you're comfortable running 2+ terminals simultaneously, you use plan mode, you can chain a few skills together, you're comfortable reading, editing, and working out of markdown files as a primary interface, your repo is version controlled. That's the line. Below it, you're experimenting. Above it, you're operating.

For people who aren't there yet and don't want to face the music, I do believe the next generation of tools will abstract away the terminal UI and learning curve with friendlier surfaces. But we're not there yet. I wouldn't wait. And there's a certain power to getting closer to the actual code. Ultimately the terminal as the UI is still the path to the most leverage.

The Weird Side Effects

Nobody talks about the costs of working this way, so I will close with some brief mentions:

Context-switching is brutal. When you run multiple terminals across different workstreams, you're bouncing between completely different work contexts constantly. The AI doesn't get tired or confused when you come back to it. You do. I'm drafting a separate piece on the ultra-productive but context-switch-heavy modes of working in AI — on closing the loop between idea and execution and not relying as much on other teammates and perspectives and skill sets, for better and worse. That one deserves its own treatment.

The "ugh I need to go into some UI and actually click things" reluctance is real. HubSpot to build a dashboard. Apollo to create a field. Generally avoiding any tool without a good API, CLI, or MCP. Once you've experienced headless work, going back to point-and-click feels like going back to dial-up. That's both a superpower and a dysfunction.

The optimization addiction. It's addictive — and useful — but also addictive again. Optimizing your own repo and setup is truly endless. Every manual process looks like something that should be automated. Every repeated task becomes a skill that should be built. There's a graveyard of side projects that are 80% shipped because I got excited about the next system before finishing the last one. I don't have a clean answer for this one.

Model dependencies are real. When Anthropic has an outage or Claude is slow, my entire workflow stops. I've structured my work around a specific model's capabilities. The need to constantly experiment and iterate on the harness is its own friction — one more thing pulling your attention.

The idea-to-execution gap closes, and that's more disorienting than it sounds. When execution is cheap, every idea becomes possible. Sounds great until you realize the bottleneck was never execution — it was judgment about what to execute. You need much better filters for what's actually worth building. I'm still working on this one.

The Real Answer

Should you go AI-native?

The leverage is real. The creativity and the fun are there.

I just think most people, like me, need a guide to get there. Not forever. Just to build out your first few weeks. It is ungodly helpful to be walked through set-up. To not start with a blank terminal screen. To have someone help architect your first build and what context you might want to ingest and have in your repository. Ultimately the technical setup takes an afternoon. The knowledge architecture takes longer.

Happy to chat more if you're thinking about this...