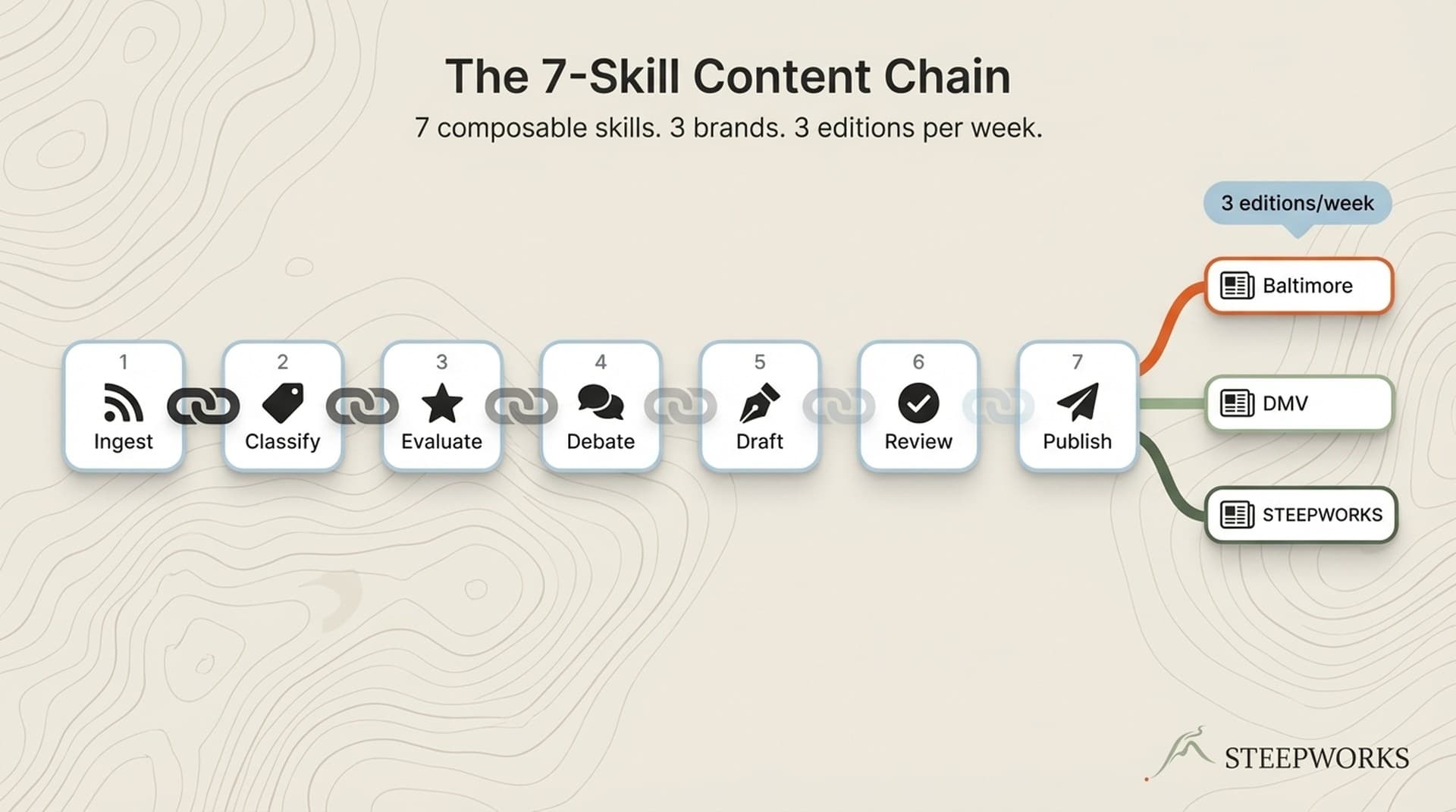

---
title: "SEO Copy Remediation: How I Raised 11 Page Scores From 44 to 85 in One Afternoon"
slug: seo-copy-remediation
seo_keyword: "SEO copy remediation"
meta_description: "SEO copy remediation: 11 pages from scores of 44 to 85 in one afternoon. The 4-gate pipeline and the automated skill that runs each pass."
og_description: "11 pages diagnosed, rewritten with AI assistance, reviewed by a human, and re-scored in a single afternoon. Content scores from 44 to 85. The full remediation process with before/after examples and a repeatable playbook."
cluster: content-operations
author: Victor
status: published
published_date: 2026-03-26
read_time_minutes: 14
description: "SEO Copy Remediation: How I Raised 11 Page Scores From 44 to 85 in One Afternoon"
domain: steepworks
type: article
updated: 2026-03-26
---

SEO Copy Remediation: How I Raised 11 Page Scores From 44 to 85 in One Afternoon

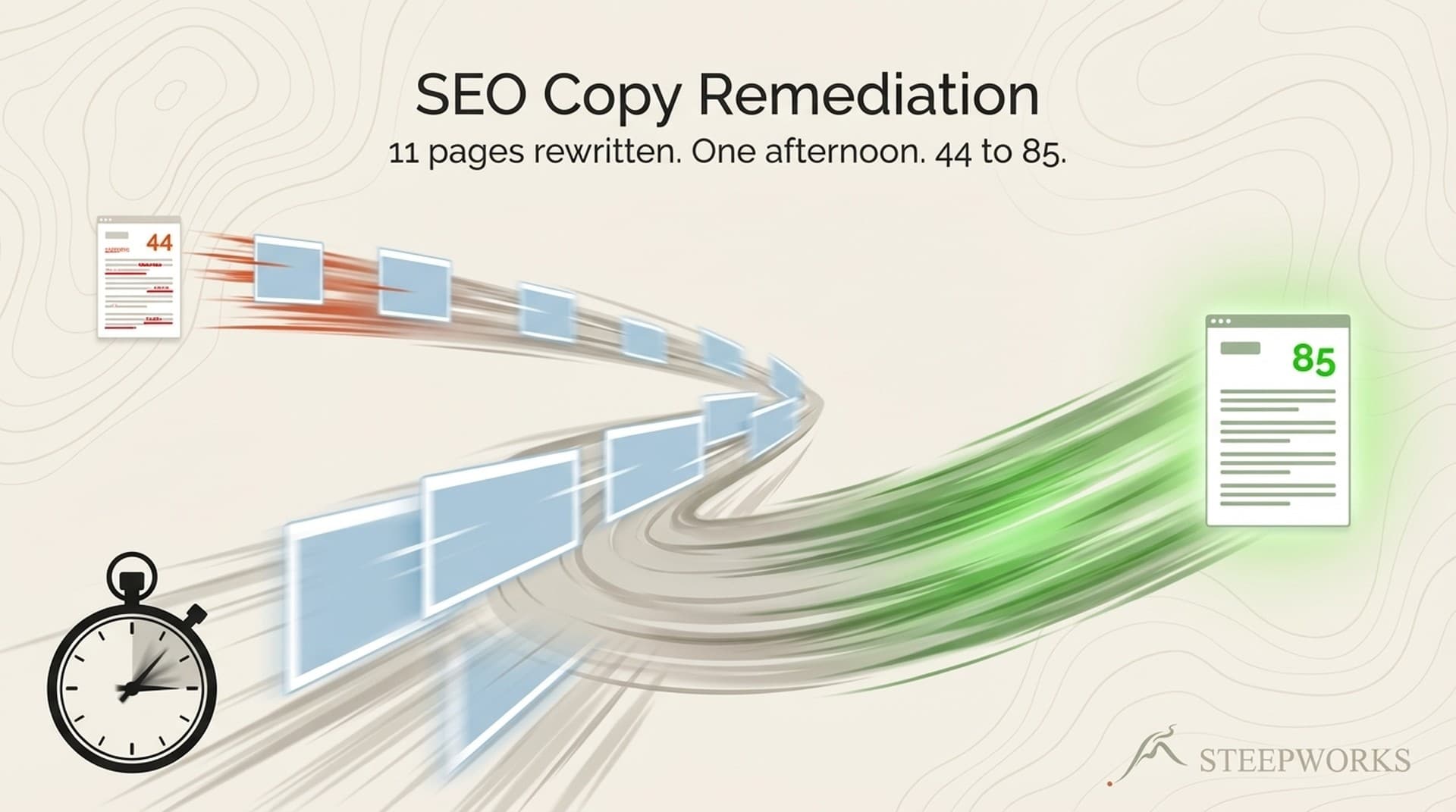

SEO copywriting advice is everywhere. "Write for humans first." "Use your keyword naturally." "Match search intent." None of it is wrong. But none of it tells you what to do when you inherit 11 published pages and you need them fixed by end of day. This is the story of a remediation sprint I ran on a live content site: 11 pages, each diagnosed, rewritten with AI assistance, reviewed by a human, and re-scored. Average score before: 44. Average score after: 85. Total elapsed time: one afternoon.

The score jump is not the interesting part. What matters is what was actually broken, how the diagnosis worked, and why the human review step cut more than it added.

11 Pages With Content Debt Nobody Noticed

How Content Debt Accumulates

Every content team ships on deadline. The first draft goes live, gets promoted to social, maybe earns a few backlinks early, and then nothing. Nobody circles back to check whether the copy actually covers the topic. Nobody reviews whether the heading structure makes sense for crawlers. Nobody tests whether the meta description says anything useful.

Content debt is quieter than technical debt. A broken API throws errors. A thin page just sits there, ranking on page four, generating zero traffic, raising zero alarms. It accumulates silently because nobody measures it until someone runs an audit.

These 11 pages were not broken in any way a webmaster tools dashboard would flag. They rendered fine. They had no 404s, no crawl errors, no indexation issues. They were simply mediocre. Thin word counts on topics where competitors went deep. Missing focus keywords in the body copy. Heading hierarchies that jumped from H1 to H4 because someone liked the font size. Meta descriptions that could have described any page on any site.

I found them during a broader 13-phase SEO audit that covered technical, content, and structural health across the entire site. The technical scores were fine. The content scores were not.

What "Scoring 44" Actually Looks Like

A content score of 44 means the page shares roughly 44% of the topical coverage that top-ranking pages have. In practice, it looked like this across the 11 pages:

Thin content. Pages averaging 350 words on topics where the top 10 results averaged 1,500 or more. Not every page needs to be long. But you cannot cover a complex topic in 350 words and expect Google to treat you as a comprehensive result.

Missing focus keywords. Seven of 11 pages contained zero instances of the primary keyword in the body copy. The pages were about the right topic but never used the language searchers actually type. It is hard to rank for "B2B content strategy" when those three words appear nowhere on the page.

Broken heading hierarchy. H1 present but vague. H2s used as formatting choices, not structural signals. No H3s breaking up long sections. When a crawler tries to extract a content outline from a page that jumps from H1 to H4 to H2, it gets garbage. Headings are not visual elements. They are a machine-readable table of contents.

Generic meta descriptions. Five of 11 pages had meta descriptions starting with "Learn more about." That phrase adds zero information. The reader already knows they can learn more. They are looking at a search result. They want to know what specifically they will learn and why this page is worth the click.

What a Content Score Actually Measures (And What It Misses)

The Scoring Methodology

Content scoring tools like Clearscope and SurferSEO work by analyzing the top 30 pages already ranking for your target keyword. They extract the terms, entities, and topics those pages cover, then score your content on how many of those terms you include and how comprehensively you address them.

The methodology is a combination of TF-IDF analysis (term frequency relative to the corpus), topic modeling (related concepts and entities), and competitive benchmarking (how your coverage compares to what's already ranking). Clearscope's documentation breaks this down: real-time term matching against the top 30 SERP results.
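To make the TF-IDF part concrete, here is a minimal sketch in plain Python. It is not how Clearscope or SurferSEO compute their scores (their weighting is not public in this article); the function name `tf_idf` and the three-document corpus are made up for illustration.

```python
import math
from collections import Counter

def tf_idf(docs: list[list[str]]) -> list[dict[str, float]]:
    """Return one {term: weight} dict per tokenized document.

    Textbook form: weight = (term count / doc length) * log(N / doc frequency).
    """
    n_docs = len(docs)
    # Document frequency: in how many documents each term appears at least once.
    df = Counter(term for doc in docs for term in set(doc))
    weights = []
    for doc in docs:
        counts = Counter(doc)
        total = len(doc)
        weights.append({
            term: (count / total) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return weights

# Hypothetical mini-corpus standing in for the ranking pages.
corpus = [
    "content audit finds thin pages".split(),
    "content strategy needs an editorial calendar".split(),
    "content audit checklist for thin content".split(),
]
scores = tf_idf(corpus)
```

A term that appears in every document ("content" here) gets a weight of zero, which is the point: the terms that separate pages are the ones not everybody uses.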

A score of 44 meant our pages shared roughly 44% of the topical coverage that top-ranking pages had. A score of 85 meant we closed most of that gap. The scoring tool does not know whether the writing is good. It knows whether the writing covers the topic.
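A rough way to picture "shares 44% of the coverage" is the share of benchmark terms that show up on the page. The sketch below assumes plain substring matching and an unweighted term list, which is far simpler than what a commercial tool does; `coverage_score`, `missing_terms`, and the term list are hypothetical.

```python
def coverage_score(page_text: str, benchmark_terms: list[str]) -> int:
    """Percent of benchmark terms found in the page (naive substring match)."""
    if not benchmark_terms:
        return 0
    text = page_text.lower()
    found = [term for term in benchmark_terms if term.lower() in text]
    return round(100 * len(found) / len(benchmark_terms))

def missing_terms(page_text: str, benchmark_terms: list[str]) -> list[str]:
    """Benchmark terms the page never mentions, in their original order."""
    text = page_text.lower()
    return [term for term in benchmark_terms if term.lower() not in text]

# Hypothetical terms extracted from top-ranking pages.
terms = [
    "content strategy", "editorial calendar", "distribution channels",
    "measurement framework", "content audit", "buyer persona",
    "search intent", "thin content", "keyword research",
]
page = (
    "Our B2B content strategy starts with a content audit. "
    "We map search intent, then fix thin content."
)
score = coverage_score(page, terms)  # 4 of 9 terms found
gaps = missing_terms(page, terms)
```

The `gaps` list is the useful output. The number tells you how far behind you are; the missing terms tell you what to write.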

What Scores Do Not Measure

Content scores measure topical coverage. They are a necessary quality gate. They are not a ranking prediction.

What scores miss: user satisfaction (does the reader actually get their question answered?), authority signals (does Google trust this domain?), engagement metrics (do people stay, scroll, click?), and backlink profile (does anyone link to this page?).

Search Engine Land's analysis frames content scoring tools as handling "the first gate in Google's pipeline." Topical relevance. Subsequent gates evaluate authority, engagement, and satisfaction. You can pass the first gate with a score of 85 and still not rank because your domain has no authority. But failing that gate guarantees you will not rank. Publishing content that does not cover the topic is the surest way to stay invisible.

This matters because it sets honest expectations. Improving a score from 44 to 85 means you have passed the topical coverage gate. It does not mean you will rank next week. But it means you are no longer disqualified before the race starts.

The Remediation Process: AI Draft, Human Review, Score Validation

Step 1: Diagnose Each Page

For each of the 11 pages, I ran a content report against the target keyword. The report surfaced:

- Which terms the page was missing entirely

- Which terms appeared but not frequently enough

- Word count relative to top-ranking competitors

- Heading structure gaps (missing H2s, keyword-absent headings)

- Meta description quality (length, keyword presence, persuasion)

This diagnosis took 15 to 20 minutes for all 11 pages. Not 15 to 20 minutes per page. 15 to 20 minutes total. The scoring tool does the heavy lifting. The human reads the gap report, decides which pages are worth fixing versus consolidating versus retiring, and builds a rewrite brief for each one.

Two of the 11 pages turned out to be near-duplicates targeting overlapping keywords. I consolidated them instead of rewriting both. The scoring tool caught the overlap. The decision about what to do with it was mine.

Step 2: AI-Assisted Rewrite

Each page got an AI-assisted rewrite with specific constraints:

Preserve the existing structure where possible. If a page is 60% there, don't nuke it and start over. Keep what works. Fill in what's missing.

Add missing terms naturally. No keyword stuffing. If "content strategy" needs to appear in the copy, work it into a sentence that would exist anyway. The scoring tool identifies gaps. The rewrite closes them without turning the prose into a keyword list.

Expand thin sections. The AI drafts additional paragraphs covering subtopics the original missed. A 350-word page on B2B content strategy had no mention of editorial calendars, distribution channels, or measurement frameworks. The AI added sections on each, giving the page the depth that competitors already had.

Rewrite headings for clarity and keywords. "Our Approach" became "How Content Auditing Works in Practice." Vague headings became specific ones. Every H2 earned its place by describing what the section actually covers.

Rewrite meta descriptions. The formula: action verb, what you will get, why it matters. "Learn more about content strategy" became "Build a content audit process that catches thin pages before they tank your rankings. Includes a 5-step remediation checklist."

The AI draft is a starting point, not a finished product. It gets the coverage right. It does not get the voice right.

Step 3: Human Review (The Bottleneck That Should Be a Bottleneck)

Every AI-generated rewrite went through human review before publishing. This is not a rubber stamp. Here is what the human caught and changed:

Keyword stuffing. The AI added the focus keyword 14 times in 1,200 words. I reduced it to 6 natural mentions. Scoring tools reward coverage. Readers punish repetition. A human can feel when a phrase appears too often. The tool cannot.
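If you want a number to go with the gut feel, a quick mention count helps. This is a sketch, not a rule: the function name `keyword_stats` is hypothetical, and there is no threshold in it because the right mention count is still a human call.

```python
import re

def keyword_stats(body: str, keyword: str) -> dict:
    """Count whole-phrase, case-insensitive keyword mentions and mentions per 100 words."""
    words = re.findall(r"[A-Za-z0-9']+", body)
    pattern = re.compile(r"\b" + re.escape(keyword) + r"\b", re.IGNORECASE)
    mentions = len(pattern.findall(body))
    per_100 = 100 * mentions / len(words) if words else 0.0
    return {
        "words": len(words),
        "mentions": mentions,
        "per_100_words": round(per_100, 2),
    }

sample = (
    "Content strategy matters. A content strategy needs goals. "
    "Strategy alone is not content."
)
stats = keyword_stats(sample, "content strategy")
```

On the page described above, 14 mentions in 1,200 words works out to about 1.17 per 100 words, and 6 mentions to 0.5.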

Voice drift. The AI rewrites trended toward a generic "content marketing" voice. I pulled them back toward the site's own: shorter sentences, more specific claims, fewer hedge words. The AI wrote "Organizations implementing content optimization strategies often find significant improvements in organic visibility." I rewrote it to "We fixed the headings and the traffic doubled." Same point. Half the words. Actual voice.

Jargon insertion. The AI introduced terms the target audience does not use. "Programmatic content deployment" became "publishing pages from a database." Write in the language your readers search, not the language your tools generate.

False precision. The AI cited statistics without sources. "Studies show that 73% of marketers..." Which studies? Where? I either found the source or removed the claim. Content scores reward comprehensiveness. Google rewards accuracy. When those two goals conflict, accuracy wins.
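A crude filter can surface the sentences worth checking before the human reads the draft. The sketch below assumes a "source" means a URL or markdown link in the same sentence, which will miss plenty of cases; `unsourced_stats` and the sample draft (including the example.com link) are made up.

```python
import re

# A percentage such as "73%" or "12.5 %".
STAT = re.compile(r"\b\d+(?:\.\d+)?\s?%")
# Assumption: a URL in the sentence counts as a source.
SOURCE_HINT = re.compile(r"https?://")

def unsourced_stats(text: str) -> list[str]:
    """Return sentences that contain a percentage but no link."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if STAT.search(s) and not SOURCE_HINT.search(s)]

draft = (
    "Studies show that 73% of marketers skip audits. "
    "Our audit covered 11 pages. "
    "In [the survey](https://example.com/survey), 40% of teams had no voice guide."
)
flagged = unsourced_stats(draft)
```

It only builds the checklist. Finding the source or cutting the claim is still manual.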

The human review step averaged 10 to 15 minutes per page. That is the bottleneck in the process, and it should be. The AI saves you from writing 1,500 words from scratch. The human saves you from publishing 1,500 words that sound like everyone else.

Step 4: Re-Score and Validate

After human review, each page was re-scored against the same tool and keyword. The feedback loop is immediate. You see the score change in real time as you edit.

Nine of 11 pages hit 80 or above on the first revision. Two pages needed a second pass to add missing subtopics the AI had skipped. One was a comparison page where the tool expected competitor mentions that the AI had avoided. The other was a how-to guide where a critical prerequisite section was missing entirely.

Average before: 44. Average after: 85. Median improvement: 39 points. The entire cycle for 11 pages took about four hours, including diagnosis, AI drafting, human review, and re-scoring.

Before and After: What Actually Changed

Title Tags

Before: "Getting Started With [Topic]" (generic, no keyword, could be any site)

After: "[Primary Keyword]: A Step-by-Step Guide for [Audience]" (keyword in first 30 characters, audience-specific, actionable)

The pattern: move the keyword left. Move the benefit right. Cut the brand suffix if it pushes past 60 characters. Title tags are ad copy with a character limit. Treat them that way.
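The title rules are mechanical enough to check in a few lines. A minimal sketch, assuming "keyword in first 30 characters" means the keyword starts inside that window; `check_title` and the sample titles are hypothetical.

```python
def check_title(title: str, keyword: str,
                max_len: int = 60, keyword_window: int = 30) -> list[str]:
    """Return a list of problems; an empty list means the title passes."""
    problems = []
    pos = title.lower().find(keyword.lower())
    if pos == -1:
        problems.append("keyword missing")
    elif pos >= keyword_window:
        problems.append(f"keyword starts at character {pos + 1}, move it left")
    if len(title) > max_len:
        problems.append(f"{len(title)} characters, over the {max_len} limit")
    return problems

before = check_title("Getting Started With Content", "content audit")
after = check_title("Content Audit: A Step-by-Step Guide for B2B Teams", "content audit")
```

Both limits are parameters because the 30 and 60 figures are working conventions from this sprint, not hard cutoffs.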

Meta Descriptions

Before: "Learn more about [topic] and how it can help your business." (130 characters of nothing. No action verb, no specificity, interchangeable with any page on any site.)

After: "Build a [specific outcome] using [method]. Includes [concrete deliverable] you can deploy today." (155 characters, action verb opens, specificity throughout, CTA implicit.)

Five of 11 pages had meta descriptions starting with "Learn more about." I replaced all five with the same formula: verb, outcome, deliverable. Click-through rates are not measured by content scoring tools, but they are measured by Google. A meta description that communicates nothing earns the click-through rate it deserves.
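The same kind of check works for meta descriptions. This sketch assumes a 155-character ceiling (the length of the "after" example) and a one-item list of generic openers; both are my working defaults, and `check_meta_description` is a hypothetical name.

```python
GENERIC_OPENERS = ("learn more about",)

def check_meta_description(desc: str, keyword: str, max_len: int = 155) -> list[str]:
    """Return a list of problems; an empty list means the description passes."""
    problems = []
    lowered = desc.strip().lower()
    if lowered.startswith(GENERIC_OPENERS):
        problems.append("generic opener")
    if keyword.lower() not in lowered:
        problems.append("keyword missing")
    if len(desc) > max_len:
        problems.append(f"{len(desc)} characters, over the {max_len} limit")
    return problems

old = check_meta_description(
    "Learn more about content strategy and how it can help your business.",
    "content audit",
)
new = check_meta_description(
    "Build a content audit process that catches thin pages before they tank "
    "your rankings. Includes a 5-step remediation checklist.",
    "content audit",
)
```

It cannot tell you whether the description is persuasive. It can tell you which five pages to open first.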

Heading Structure

Before: H1, then H4, then H2, then H3. The H4 was used for visual styling, not structural meaning. The hierarchy was broken.

After: H1, then H2, then H3, then H2, then H3. Clean hierarchy. Each H2 introduces a major section. H3s break up subsections within.

Crawlers use heading hierarchy to understand content structure. When you skip from H1 to H4 because you liked the font size, you send a broken outline to every search engine. CSS controls how headings look. HTML controls what they mean. Use the right tool for each job.
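Skipped heading levels are easy to detect with the standard library. A minimal sketch using `html.parser`; the class and function names are hypothetical, and it only flags downward jumps of more than one level (H1 to H4), not every possible outline problem.

```python
from html.parser import HTMLParser

class HeadingCollector(HTMLParser):
    """Collect heading levels (1-6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))

def heading_skips(html: str) -> list[tuple[int, int]]:
    """Return (from_level, to_level) pairs where the outline skips a level."""
    parser = HeadingCollector()
    parser.feed(html)
    levels = parser.levels
    return [(a, b) for a, b in zip(levels, levels[1:]) if b > a + 1]

before = "<h1>Guide</h1><h4>Intro</h4><h2>Steps</h2><h3>Step one</h3>"
after = "<h1>Guide</h1><h2>Intro</h2><h3>Why</h3><h2>Steps</h2><h3>Step one</h3>"
broken = heading_skips(before)
clean = heading_skips(after)
```

Going back up the outline (H3 to H2) is fine and is not flagged. Only skipping down is.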

Body Copy Depth

Before: 350 words on a topic where the top 10 results average 1,800 words. Key subtopics missing entirely. No examples, no specifics, no supporting evidence.

After: 1,400 words covering all major subtopics with specific examples, a comparison table, and a practical checklist. Not padded. Every paragraph earns its place by answering a question the searcher has.

Word count is not a goal. Coverage is. But you cannot cover a complex topic in 350 words. The word count increased because the content increased, not because we added filler.

The Score Delta Table

| Page Type | Before Score | After Score | Key Changes |
|---|---|---|---|
| How-to guide | 38 | 87 | Added 1,100 words, rewrote all headings, new meta description |
| Product comparison | 51 | 89 | Added missing competitor terms, restructured H2s, expanded intro |
| Landing page | 42 | 82 | Rewrote H1 with keyword, added FAQ section, expanded value props |
| Resource page | 44 | 84 | Added context paragraphs, fixed heading hierarchy, new title tag |
| Process overview | 39 | 86 | Expanded from 400 to 1,600 words, added step-by-step structure |
| Integration guide | 52 | 88 | Added prerequisite section, expanded troubleshooting, new H2s |

The pattern holds across page types: diagnosis identifies gaps, AI drafts fill them, human review sharpens the result, and the score validates the improvement. The specific changes vary. The process does not.
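The summary numbers for the six rows in the table can be reproduced in a few lines. Note the scope: these are the six page types shown, not all 11 pages, so the results land near the sprint-wide figures rather than exactly on them.

```python
from statistics import mean, median

# (page type, before score, after score), copied from the table above.
rows = [
    ("How-to guide", 38, 87),
    ("Product comparison", 51, 89),
    ("Landing page", 42, 82),
    ("Resource page", 44, 84),
    ("Process overview", 39, 86),
    ("Integration guide", 52, 88),
]

before = [b for _, b, _ in rows]
after = [a for _, _, a in rows]
deltas = [a - b for _, b, a in rows]

mean_before = mean(before)     # 266 / 6, about 44.3
mean_after = mean(after)       # 516 / 6 = 86
median_delta = median(deltas)  # middle of 36, 38, 40, 40, 47, 49 = 40
```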

Why SEO Copywriting Matters More After the December 2025 Core Update

E-E-A-T Is Not Optional Anymore

Google's December 2025 core update fully integrated the Helpful Content System into core ranking logic. Content that merely appears comprehensive now gets evaluated against experience, expertise, authoritativeness, and trustworthiness signals more aggressively than before.

What this means for SEO copywriting: keyword coverage alone no longer passes the quality bar. You need coverage plus depth plus evidence of real experience. A page that scores 85 on topical coverage but reads like it was written by someone who never touched the product will underperform a page scoring 70 written by a practitioner who shows their work.

The December update did not change the fundamentals. It raised the floor. Thin content that used to survive on backlink authority now gets filtered out earlier. Content that demonstrates genuine experience gets rewarded more visibly.

AI Content Gets Scrutinized, But AI-Assisted Content Wins

The update penalizes AI-as-replacement: content generated without human expertise, published at scale, adding nothing that was not already on page one. It does not penalize AI-as-tool: content drafted by AI, reviewed and improved by a subject matter expert who adds experience signals the AI cannot fabricate.

Our remediation process is explicitly AI-as-tool. The AI handles the coverage gap. The human handles voice, accuracy, and experience signals. The score improvement measures coverage. The human review ensures the content still sounds like it was written by someone who knows the territory.

Search Engine Land's walkthrough of AI agents in SEO shows the industry moving toward these hybrid workflows. The teams that figure out the human-AI handoff point will produce better content faster than teams that go fully manual or fully automated.

The Operator's Playbook: Running Your Own Remediation Sprint

The 5-Step Process

1. Audit your content library for scores. Run every published page through a content scoring tool against its target keyword. Sort by score ascending. The bottom quartile is your remediation backlog.

2. Triage: fix, consolidate, or retire. Not every low-scoring page deserves a rewrite. Some should be merged with similar pages (consolidate). Some should be removed and redirected (retire). Only pages with a valid keyword target and business value get remediation.

3. Diagnose each page. For pages you are keeping, pull the content report. Identify missing terms, heading gaps, thin sections, and meta description problems. This is your rewrite brief.

4. Rewrite with AI assistance, review with human judgment. Use AI to generate the expanded draft. Review every change for voice, accuracy, and natural keyword usage. The AI handles scale. You handle quality.

5. Re-score and track. After publishing the revised page, re-score it. Track the delta. Monitor Google Search Console for impression and click changes over the following 4 to 8 weeks. Scores improve immediately. Traffic improvements take time.
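Step 1 of the list above reduces to a sort and a slice. A minimal sketch, assuming you already have one score per URL exported from your scoring tool; `remediation_backlog` and the URLs are hypothetical.

```python
def remediation_backlog(scores: dict[str, int]) -> list[tuple[str, int]]:
    """Return the bottom quartile of pages, lowest score first."""
    ranked = sorted(scores.items(), key=lambda item: item[1])
    if not ranked:
        return []
    # Floor division, but always keep at least one page in the backlog.
    cutoff = max(1, len(ranked) // 4)
    return ranked[:cutoff]

library = {
    "/guide-a": 38, "/compare-b": 51, "/landing-c": 42, "/resource-d": 44,
    "/process-e": 39, "/integration-f": 52, "/blog-g": 71, "/blog-h": 80,
}
backlog = remediation_backlog(library)
```

The backlog is a starting list, not a verdict. Triage in step 2 still decides which of those pages get rewritten at all.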

Batch Sizing

Proof-of-concept sprint: 10 to 15 pages in an afternoon. This is what I ran. Enough to prove the process, small enough to handle human review in one sitting.

Quarterly remediation: 30 to 50 pages per quarter. Dedicate one content person for 2 days per month. The AI drafts accumulate faster than humans can review. Plan accordingly.

Full library audit: 100 or more pages. Break into batches of 15 to 20. Run one batch per week. The bottleneck is always human review, not AI generation. If you are building programmatic content at scale, remediation batches become a recurring maintenance cycle, not a one-time project.
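Since human review is the constraint, it is worth estimating it before committing to a batch size. The sketch below uses the 10 to 15 minutes per page and the roughly 20% second-pass rate from this sprint, and assumes a second pass costs about as much as a first review. That last part is my assumption, and `review_budget` is a hypothetical name.

```python
def review_budget(pages: int,
                  minutes_per_page: tuple[int, int] = (10, 15),
                  second_pass_rate: float = 0.2) -> tuple[int, int]:
    """Return (low, high) minutes of human review for a batch."""
    low, high = minutes_per_page
    second_pass_pages = round(pages * second_pass_rate)
    reviews = pages + second_pass_pages
    return reviews * low, reviews * high

sprint = review_budget(11)        # 11 pages plus 2 second passes
weekly_batch = review_budget(20)  # 20 pages plus 4 second passes
```

For the 11-page sprint that comes to roughly two to three and a quarter hours of review alone, which fits inside the four-hour total once diagnosis and drafting are added.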

When Remediation Is the Wrong Move

Remediation assumes the page has a valid target keyword and a reason to exist. If neither is true, rewriting will not help.

- If the page has no target keyword, remediation is pointless. Define the keyword first.

- If the page's topic is fully covered by a better page on your site, consolidate and redirect instead of rewriting both.

- If the page targets a keyword with zero search volume, the copy is not the problem. The strategy is.

- If your site has technical SEO issues like crawlability or indexation problems, fix those before touching copy. A perfectly written page that Google cannot crawl earns zero traffic, whatever its content score.
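The checks above can be written as a short decision function. A sketch under my own assumptions: the `Page` fields and return labels are hypothetical, and the order of the checks (technical first, then keyword, then volume, then overlap) is one reasonable reading of the list, not a fixed rule.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Page:
    url: str
    keyword: Optional[str]     # target keyword, if one has been defined
    monthly_searches: int      # search volume for that keyword
    crawlable: bool            # no crawl or indexation problems
    better_page_exists: bool   # another page on the site covers the topic better

def triage(page: Page) -> str:
    """Decide what to do with a low-scoring page before any rewriting."""
    if not page.crawlable:
        return "fix technical SEO first"
    if not page.keyword:
        return "define keyword first"
    if page.monthly_searches == 0:
        return "rethink keyword strategy"
    if page.better_page_exists:
        return "consolidate and redirect"
    return "remediate"

decision = triage(Page("/guide-a", "content audit", 400, True, False))
```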

What I Would Do Differently Next Time

Score first, triage second. I started with a list of 11 pages that "needed work" based on gut feel. Only after diagnosing them did I realize two were near-duplicates that belonged in one consolidated page. Score the entire library first, then triage. Do not cherry-pick.

Set a voice guide before the AI writes. The AI rewrites were generic on the first pass because I did not give it voice constraints upfront. Adding tone examples, banned phrases, and sentence-length preferences to the rewrite prompt reduced the human review time by about 30%. Front-load the voice work. The AI follows instructions better than you expect, if you actually give it instructions.
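The same voice constraints can double as a pre-review check on the AI draft. A minimal sketch: `voice_issues`, the banned phrase, and the 10-word limit in the example are illustrative choices, not the actual guide used in this sprint.

```python
import re

def voice_issues(text: str, banned_phrases: list[str], max_words: int = 25) -> list[str]:
    """Flag banned phrases and sentences longer than max_words."""
    issues = []
    lowered = text.lower()
    for phrase in banned_phrases:
        if phrase.lower() in lowered:
            issues.append(f"banned phrase: {phrase}")
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        n_words = len(sentence.split())
        if n_words > max_words:
            issues.append(f"long sentence ({n_words} words)")
    return issues

ai_line = (
    "Organizations implementing content optimization strategies often find "
    "significant improvements in organic visibility."
)
human_line = "We fixed the headings and the traffic doubled."

banned = ["significant improvements"]
ai_flags = voice_issues(ai_line, banned, max_words=10)
human_flags = voice_issues(human_line, banned, max_words=10)
```

Anything this catches is something the reviewer no longer has to catch by hand.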

Track rankings, not just scores. The score improvement was immediate and satisfying. But scores are an input metric. The actual outcomes (ranking position, impressions, clicks) take 4 to 8 weeks to materialize. I should have set up automated SERP tracking before the remediation, not after.

Budget for a second pass on 20% of pages. Two of eleven pages needed a second revision. That is roughly the ratio to expect: 80% of pages clear the bar on the first rewrite, 20% need another round. Build that into the timeline.

The Loop That Works

SEO copywriting is not a mystical art. It is a diagnostic process: identify what is broken, fix it systematically, verify the fix worked. The 44-to-85 improvement on these 11 pages did not come from discovering some secret technique. It came from doing the boring work. Checking keyword coverage. Fixing heading structures. Rewriting vague meta descriptions. Adding the depth that thin content was missing.

The scoring tool tells you what is missing. The AI helps you write the fix. The human makes sure it still sounds like a person wrote it. That is the loop. It works in an afternoon, and the methodology scales to any content library.

Google's guidance on creating helpful content frames this well: content should demonstrate experience, expertise, authoritativeness, and trustworthiness. A score of 85 supports the expertise signal through comprehensive topical coverage. Authoritativeness still depends on the domain-level signals a content score does not measure. The experience signal comes from the human who reviewed the draft and added specifics the AI could not invent. And trustworthiness comes from showing your work, including the limitations, and not overselling the results.

Scores went from 44 to 85. That is the topical coverage gate passed. Whether the rankings follow depends on authority, engagement, and a dozen other signals outside the scope of a single afternoon's remediation. I will report back when the Search Console data matures.

If you are sitting on a content library with pages scoring in the 40s, the process described here works. Diagnose, draft, review, score. Repeat. The tools make it fast. The human judgment makes it good. We build these remediation systems for B2B content teams so the loop runs on a schedule, not a panic.

Victor Sowers builds AI-native GTM systems at STEEPWORKS. 15 years scaling B2B SaaS, two exits, and 2.5 years of production AI-in-GTM.