title: "Content Quality Gates: Why AI Content Review Catches What Grammarly Misses" slug: content-quality-gates seo_keyword: "AI content review" meta_description: "AI content review beyond spell-check: 5 quality gates catch voice drift, slop patterns, and positioning gaps. From 500+ content runs in production." og_description: "58% of teams see quality degrade past 100 AI pieces/month. Grammar tools can't fix that. Here's the production quality gate pipeline I built -- three layers, real failures, and how to start with one gate today." cluster: content-operations author: Victor status: published published_date: 2026-03-26 read_time_minutes: 12 description: "Content Quality Gates: Why AI Content Review Catches What Grammarly Misses" domain: steepworks type: article updated: 2026-03-26

Content Quality Gates: Why AI Content Review Catches What Grammarly Misses

Grammarly Gave It a 98. The Blog Post Still Tanked.

The post scored 98 in Grammarly. Zero errors, "clear" readability, "confident" tone. We published it. Within a week, two things happened: our head of sales flagged that the competitive positioning sounded nothing like our brand voice -- it had drifted into generic SaaS speak. And a prospect emailed to ask where we'd gotten a market-size stat. We hadn't gotten it anywhere. The AI had fabricated it, and it sounded plausible enough that Grammarly, our editor, and I all missed it.

That post sat on the blog for six days before anyone caught the problems. The grammar was perfect. The content was broken.

This wasn't a one-off. When I audited 20 articles we'd published with AI assistance over the prior quarter, I found that 11 had at least one issue that no grammar tool would catch: voice inconsistencies between sections, statistics that traced back to nowhere, introductions that promised things the body never delivered, and conclusions pitched at the wrong seniority level. Every single piece had scored above 90 in Grammarly. Every single piece had passed our manual editing process.

The problem wasn't grammar. Most content failures in AI-assisted production aren't grammar failures. They're voice drift, unsupported claims, structural incoherence, and audience mismatch. These are semantic and strategic problems. Grammarly doesn't have the architecture to evaluate them, because evaluating them requires access to your brand voice document, your buyer persona, your competitive positioning, and the source data behind every claim. Grammar tools don't have any of that context.

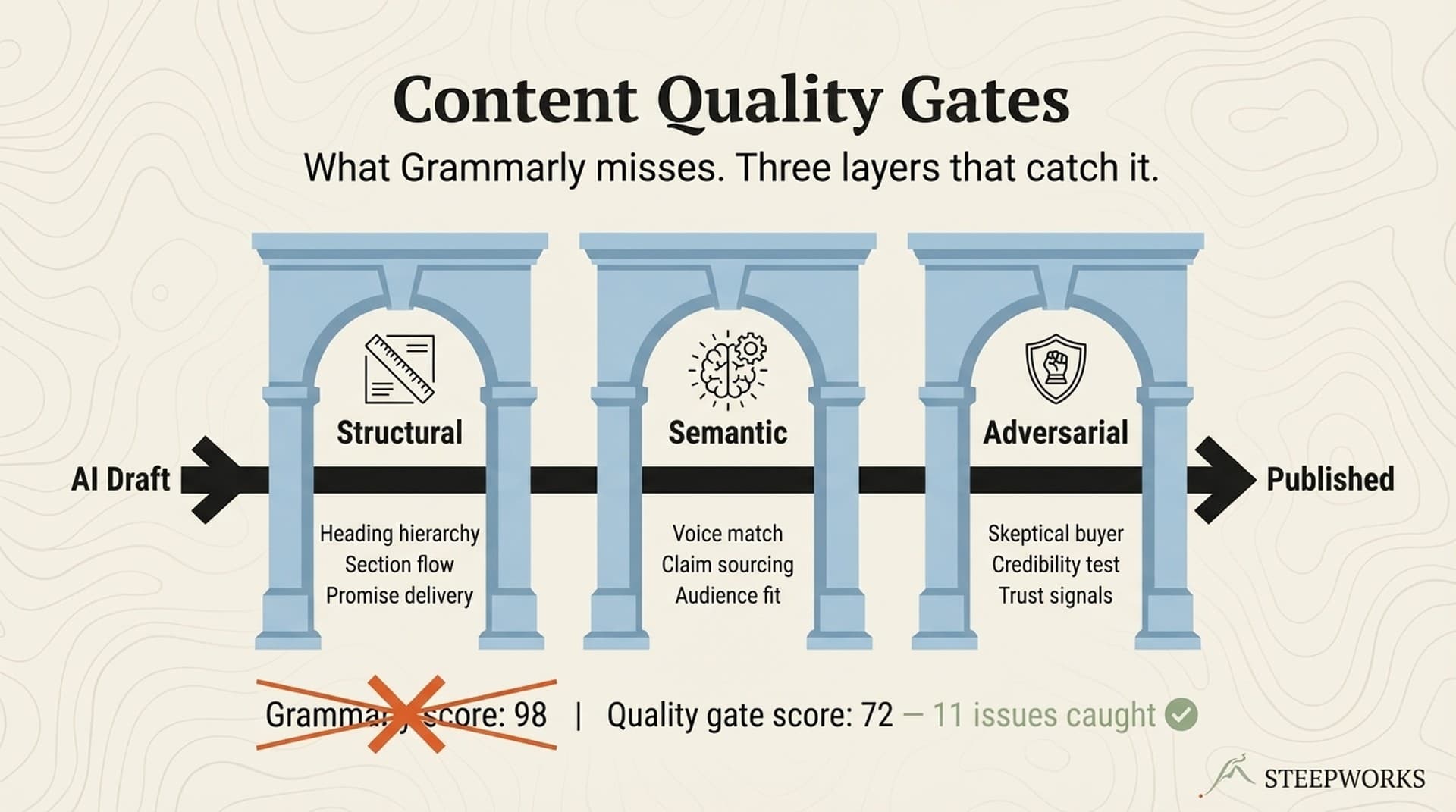

I built a multi-gate AI content review pipeline because I needed to catch what Grammarly cannot. Three layers -- structural, semantic, adversarial -- each evaluating a different dimension of content quality. This is the buildlog.

If you're a content leader shipping 8+ pieces a month with AI assistance, and quality feels inconsistent but you can't quite pinpoint why -- you probably have Grammarly, maybe a style guide, and something is still slipping through. This article is the diagnostic framework I wish I'd had before those 11 broken articles went live.

What Grammar Tools Actually Check -- And Where They Stop

You already know what Grammarly does. The useful question is where it stops. Here's the boundary:

| Grammar Tools Check | Quality Gates Check |

|---|---|

| Spelling, punctuation, syntax | Voice consistency against brand standards |

| Readability score | Claim verification (is this stat real?) |

| Passive voice detection | Structural coherence (does the argument build?) |

| Tone detection (one dimension: formality) | Audience alignment (would the ICP care?) |

| Plagiarism/AI detection | Competitive positioning accuracy |

| Word choice suggestions | Internal consistency (does H2 deliver what H1 promised?) |

The architectural gap is straightforward. Grammarly operates on surface text. It evaluates each sentence against generic grammar rules. It has no access to your brand voice document, your ICP definition, your competitive positioning, or the source data that informed the content. It can't ask "would the buyer believe this?" because it doesn't know who the buyer is. Grammarly's own feature page confirms its scope: grammar, clarity, tone, and plagiarism.

Grammarly Business added brand tone profiles, but practitioners report that tone suggestions overcorrect, flatten content into generic patterns, and miss nuance. One dimension of tone (formality) is not voice consistency. Voice is the aggregate of word choice distribution, sentence structure tendencies, first-person vs. third-person ratio, the specific way you handle uncertainty, and how you open a section. Formality is a single slider. Voice is a fingerprint.

Here's the stat that frames the rest of this article: 58% of organizations struggle with quality degradation when scaling AI content past 100 pieces per month. Grammar tools don't degrade at scale. Content quality does, because the problems are upstream of grammar. When you go from 10 to 100 AI-assisted articles per month, the grammar stays clean. The voice drifts, the claims multiply, the structural coherence frays, and the audience targeting blurs. Those are the failure modes that matter at scale, and they need a different class of evaluation tool.

Three Content Failures That Pass Every Grammar Check

These are from production. Not hypotheticals.

Failure 1: Voice Drift

We had a 6-article series on GTM strategy. Articles 1 through 3 were written in the same session with strong voice context. Articles 4 through 6 were written two weeks later, different session, same prompts. By article 6, the voice had shifted from "reflective operator" to "thought leadership consultant." Same author, same prompt library, same Grammarly score. But the voice had drifted: longer sentences, more passive constructions, "organizations should consider" replacing "here's what I learned." A reader would feel the inconsistency without being able to name it.

Grammar tools miss voice drift because it's semantic, not syntactic. Each sentence is grammatically correct. The drift lives in aggregate patterns -- word choice distribution shifting toward corporate defaults, sentence structure trending toward passive complexity, first-person conviction replaced by third-person abstraction. No individual sentence triggers a grammar flag. The pattern only emerges when you compare the draft against a voice reference document, sentence by sentence.

Failure 2: Fabricated Claims

An AI-assisted competitive analysis included the stat "companies using intent data see 40% higher conversion rates." It sounded right. Grammarly flagged nothing. Our editor didn't catch it. I didn't catch it on first read. The problem: that number doesn't exist in any published research. The AI had synthesized a plausible-sounding figure from adjacent data points. It wasn't a lie. It was a hallucination that read like a fact.

Grammar tools miss fabricated claims because Grammarly checks if a sentence is well-written, not if it's true. Claim verification requires cross-referencing against source data, which is an entirely different evaluation architecture. The sentence "companies using intent data see 40% higher conversion rates" is grammatically perfect, clearly written, and completely made up. A grammar tool has no way to distinguish it from a sentence backed by three peer-reviewed studies.

Failure 3: Audience Mismatch

A blog post targeting VP-level buyers opened with a tutorial-style explanation of what CRM stands for. The content was accurate, well-written, and grammatically flawless. But it was pitched at a junior SDR, not a VP with 15 years of experience. The VP would have bounced in 10 seconds -- not because the content was bad, but because it wasn't for them.

Grammar tools miss audience mismatch because audience alignment requires knowing the ICP. Grammarly doesn't have access to your buyer persona, their seniority level, or what they already know. It can't distinguish content written for a VP from content written for an intern. Both can score 98.

These three failure types share a root cause: they require context that grammar tools don't have. Voice drift requires a brand voice reference. Claim verification requires source data. Audience alignment requires a buyer persona. Quality gates provide that context. Grammar tools can't.

The Multi-Gate Pipeline: Structural, Semantic, Adversarial

Three sequential gates, each with a specific evaluation mandate. Content must pass all three before publication. Each gate can flag, revise, or block.

Gate 1: Structural Review

The structural gate asks whether the piece holds together. Does the introduction promise what the body delivers? Do the H2s follow a logical progression? Is the conclusion earned by the evidence presented?

It catches sections that don't connect, introductions that promise five things when the body covers three, and conclusions that introduce new claims nobody supported. Structural drift happens when AI generates sections independently, each coherent in isolation but disconnected as a sequence.

The method is mechanical: compare the outline contract against the draft, check H2-to-H2 transitions, verify that the introduction's promises are fulfilled by the body. This gate is the fastest. It's pattern matching against the article's own structure.

Gate 2: Semantic Review

The semantic gate checks voice consistency against brand standards, claim verification, and terminology consistency. Every stat, every "research shows," every competitive claim gets traced to a source. If you call it "revenue engine" in section two and "sales pipeline" in section four, this gate catches it.

It flags voice drift (Failure 1 above), fabricated or unverifiable claims (Failure 2), jargon inconsistency, tone shifts between sections, and brand lexicon violations where the vocabulary slides from your chosen terms into generic defaults.

The method is reference-heavy: score voice against your standards documents, flag every claim for source verification, run terminology consistency checks across the full piece. This gate loads the brand voice standards, the approved terminology list, and the claim sources. Then it reads the draft against all three. Does this draft sound like us, say things we can prove, and use the words we've chosen?

Gate 3: Adversarial Review

The adversarial gate asks one question: would the target buyer believe this? Does any section trigger skepticism? Are there claims that sound like marketing instead of operator experience? Would a VP Marketing at a Series B SaaS company share this or dismiss it?

It catches audience mismatch (Failure 3), over-promising, generic advice disguised as insight, claims that don't survive a "prove it" challenge, and content that reads as vendor pitch instead of practitioner buildlog.

The method is persona-based: adopt the buyer persona, read every section as a skeptic, flag anything that triggers "I don't believe you" or "so what?" This is the most judgment-heavy gate, and the one that catches the most important problems. A piece can be structurally sound, semantically consistent, and still fail because it talks to the wrong person or makes claims that a buyer would dismiss. (Claude for operators)

This adversarial layer follows the same upstream/downstream separation principle that governs all our agent architectures. The content creator and the content reviewer must be architecturally separate. When the same agent writes and evaluates, it defends its own choices instead of challenging them. Splitting creation from evaluation isn't overhead. It's structural integrity.

Operational Realism

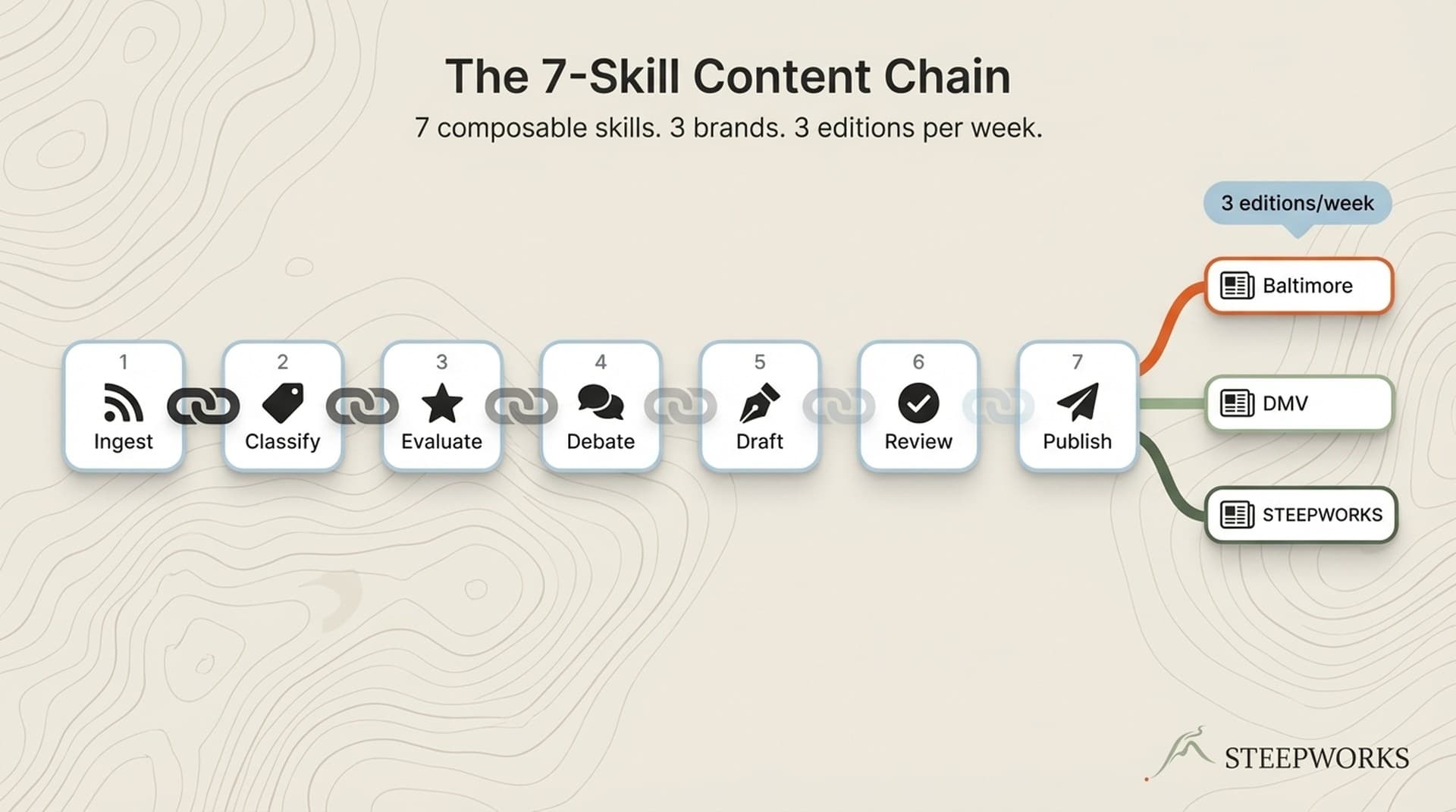

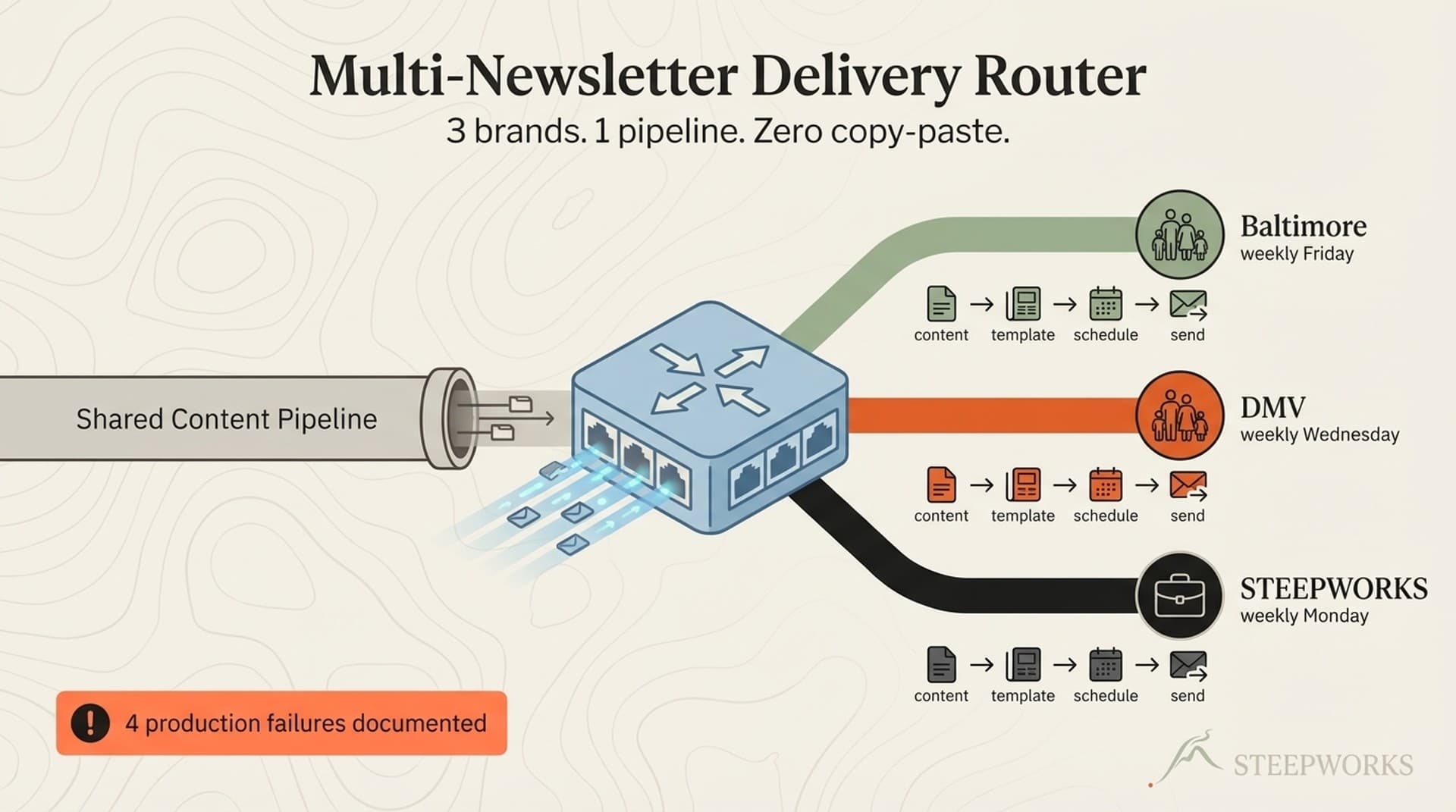

Time cost: The full 3-gate pipeline adds 3 to 5 minutes per article when automated. Structural and semantic gates run in under 90 seconds each. The adversarial gate takes 1 to 2 minutes because it generates a full critique with specific flags and suggested rewrites. (See also: content review agent)

Who runs it: Automated. Triggered as a skill after the draft is complete. No additional headcount. The reviewer is an AI agent with a different evaluation mandate than the writer. The writer's job is to produce. The reviewer's job is to challenge. (See also: anti slop)

False positives: Expect 1 to 2 flags per article that you'll override. The adversarial gate is intentionally aggressive -- it's easier to dismiss a false flag than to catch a real problem post-publication. Tuning the buyer persona reduces noise over time. After running the gate on 10 to 15 articles, you'll know which flags to trust and which reflect the persona being overly cautious.

Before and After: What the Adversarial Gate Actually Catches

Theory is fine. Here's what the gate does in practice. (Knowledge OS guide)

Example 1: Competitive Positioning Claim

Before (passed Grammarly, passed structural gate, passed semantic gate):

"Our approach delivers 3x faster implementation than traditional consulting engagements, with measurable ROI within 30 days."

Adversarial gate flag:

"As a VP Marketing evaluating this: '3x faster' compared to what baseline? Which traditional consulting engagements? 'Measurable ROI within 30 days' -- measured how? This reads like a vendor claim, not an operator observation. A skeptical buyer would ask for the comparison methodology and the measurement framework. Without those, this damages credibility instead of building it."

After (revised):

"The last three engagements moved from kickoff to first deliverable in under two weeks -- compared to the 6-to-8-week ramp-up I've seen in traditional consulting. The difference isn't speed for its own sake. It's that the system front-loads context gathering, so week one is productive instead of orientation."

The vague multiplier became a specific observation. "Measurable ROI" became a concrete timeline and mechanism. Third-person vendor speak became first-person operator experience. The claim is smaller but believable.

Example 2: Audience Mismatch

Before:

"To get started with AI content review, first ensure your team understands the basics of prompt engineering and has access to an LLM provider."

Adversarial gate flag:

"Your target reader is a VP Marketing who ships 10+ pieces per month with AI. They already have an LLM provider. This opening assumes a beginner. The VP skips this paragraph -- or worse, decides the article isn't for them."

After:

"You're already using AI to draft content. The question isn't whether to use it -- it's whether your review process has kept pace with your production process."

Beginner-level setup instructions became an assumption of competence. The reader feels recognized, not talked down to.

The adversarial gate doesn't improve writing quality. It improves content credibility. Those are different problems that require different evaluation architectures. A grammar tool improves sentences. A structural gate improves coherence. A semantic gate improves accuracy. An adversarial gate improves trust. You need all four layers because content fails in all four dimensions.

Building Your Own AI Content Review Pipeline (Start With One Gate)

You don't need three gates on day one. Start with one that catches your most frequent failure mode, prove it works, then add layers.

Week 1: The Minimum Viable Quality Gate

Pick one gate. For most teams, start with the semantic gate -- specifically voice consistency.

- Create a 1-page voice reference document: 5 "do" examples, 5 "don't" examples, pulled from your best-performing content. Not aspirational standards. Actual sentences from pieces that worked.

- After every draft, run a review pass: "Compare this draft against [voice reference]. Flag any sentence that violates the voice standards. Explain why."

- Time cost: 60 seconds per article. Setup: 30 minutes once.

That's it. One document, one review prompt, one minute per article. If you catch even one voice drift per week, the gate has paid for itself in editorial time saved.

Weeks 2-3: Add the Structural Gate

After drafting, run a structural check: "Does the introduction promise what the body delivers? Do the H2s follow a logical progression? Does the conclusion introduce new claims that weren't supported in the body?"

This catches the most common AI content failure: structural drift, where sections are generated independently and don't build on each other. The introduction writes checks the body can't cash. The conclusion wanders into territory nobody prepared the reader for. These problems are invisible in section-level editing and only emerge when you evaluate the piece as a whole.

Month 2: Add the Adversarial Gate

Define your buyer persona: title, company size, experience level, top 3 objections.

After structural and semantic gates pass, run the adversarial review: "Read this as [buyer persona]. Flag anything that triggers skepticism, feels generic, or assumes the wrong audience."

This gate has the highest false positive rate initially. That's by design. Tune the persona based on which flags you override. After 10 articles, you'll know exactly how aggressive to calibrate the skeptic. WorkfxAI's comparison of AI vs. human voice consistency provides useful benchmarks for calibrating your gates.

The Compound Effect

Organizations using structured quality gates report 45% fewer post-publication content issues compared to ad-hoc review. But the real value isn't fewer fixes. It's pattern data.

After running gates on 20 articles, you'll know exactly where your content production breaks. Maybe your AI drafts consistently fabricate stats in competitive sections. Maybe voice drifts specifically in conclusions. Maybe the structural gate catches introduction over-promising every third article. That pattern data tells you where to invest in upstream fixes -- better prompts, better source documents, better outlines -- instead of catching the same problems downstream forever.

The same architectural pattern -- sequential evaluation layers with distinct mandates -- is how we evaluate 120 newsletter articles weekly with 7 agents. Quality gates for articles and quality gates for curation are the same design principle applied at different scales. The principle is: separate evaluation from production, give each evaluator a specific mandate, and let them disagree. The disagreements are where the real quality problems surface.

Optimizely's guide to AI content consistency covers additional dos and don'ts for using AI in consistency enforcement, and it's a solid complement to the gate architecture described here.

Grammar Is the Floor, Not the Ceiling

Grammarly solves grammar. Quality gates solve content. They're complementary -- you need both. But grammar is the floor. Voice consistency, claim verification, structural coherence, and audience alignment are the ceiling. Most teams have the floor covered and the ceiling wide open.

Every piece your team publishes already passes grammar review, whether automated or manual. The question is whether it also passes voice review, claim review, structural review, and audience review. If you're scaling AI-assisted content production past a handful of articles per month, the answer is probably no. And that's where the quality starts to erode -- not in the grammar, but in the dimensions that grammar tools were never built to evaluate.

One gate. One voice reference document. Sixty seconds per article. Start there. Build toward three gates when the first one proves its value. The architecture scales; the starting point doesn't need to.

The content that damages your brand is rarely the content with typos. It's the content that sounds like everyone else, claims things it can't prove, and talks to the wrong person. Grammar tools will never catch that. Quality gates will.

Victor Sowers builds AI-native GTM systems at STEEPWORKS. 15 years scaling B2B SaaS, two exits, and 2.5 years of production AI-in-GTM.