title: "Cold Outbound: 6 Emails, 3 Frameworks, the One That Got 34% Reply Rate" slug: cold-outbound-frameworks seo_keyword: "AI cold email" meta_description: "AI cold email frameworks: 6 emails, 3 methodologies, one 34% reply rate. PVP methodology, hypothesis-driven sequences, and the AI pipeline behind them." og_description: "6 cold email variations. 3 frameworks. Same ICP, same infrastructure, same time window. PVP-B hit 34% reply rate -- 10x the industry average. The difference wasn't the framework. It was the specificity the framework forced." cluster: ai-for-gtm author: Victor status: published published_date: 2026-03-26 read_time_minutes: 14 description: "Cold Outbound: 6 Emails, 3 Frameworks, the One That Got 34% Reply Rate" domain: steepworks type: article updated: 2026-03-26

Cold Outbound: 6 Emails, 3 Frameworks, the One That Got 34% Reply Rate

Why I Stopped Reading AI Cold Email Advice

The AI cold email landscape has a credibility problem. Every "best cold email template" article is written by a vendor selling the tool that generated it. The frameworks are real. The benchmarks are real -- Instantly's 2026 report puts the average reply rate at 3.43%, with elite campaigns clearing 10%. But the articles attached to those benchmarks never show you the actual emails that produced the numbers.

Don't get me wrong -- I'm not dismissing the frameworks themselves. AIDA, PAS, PVP all work. The problem is the vendor listicle trap: frameworks presented without comparative testing. "Use AIDA!" appears on the same page as "Use PAS!" and nobody tells you which one wins, or when, or why. It's a menu with no reviews.

And most AI cold email content treats the AI output as send-ready. It isn't. Not even close. The gap between "AI-drafted" and "reply-worthy" is where the real operator work lives -- and that's the part nobody writes about, because writing about it means admitting the tool alone doesn't get you there.

So here's what I'm sharing instead: a controlled test. Six email variations across three frameworks -- PVP, AIDA, PAS -- sent to the same ICP, in the same time window, through the same sending infrastructure. With actual reply rates attached to each variation.

The punchline up front: one framework hit 34% reply rate. That's 10x the industry average. But the reason it won wasn't the framework itself. It was the specificity that the framework forced.

The Test Setup -- Same ICP, Same Infrastructure, Different Frameworks

Before the results mean anything, the test design matters. Most cold email "case studies" compare apples to oranges -- different audiences, different products, different time periods. I designed this test to isolate one variable: the framework.

The ICP. Technical decision-makers -- VP and Director-level -- at mid-market companies in a regulated industry. All sourced from the same enrichment workflow, scored through the same pipeline. For how I built the targeting, see our ICP development process.

The infrastructure. Clay for enrichment and signal detection. A dedicated sending platform for delivery. Domain warming and inbox rotation handled before the test began. Deliverability is the foundation everything else sits on -- if your emails don't land in the primary inbox, no framework saves you. For this test, deliverability wasn't a variable. It was controlled.

The variable. Six email variations, two per framework (PVP, AIDA, PAS). Each variation was AI-drafted using Claude, then edited by hand. The degree of editing varied -- and that turned out to be the most important finding of the entire test.

Sample size. Each variation went to approximately 40-60 prospects. A micro-segmented campaign, not a volume play. That's intentional. Micro-segmented lists under 50 recipients actually outperform 1,000+ blasts by 2.76x, so the focused sample was a feature, not a limitation. I want to be clear: this is one campaign's field data, not a statistically rigorous study with control groups and p-values. The patterns are real. Your mileage will vary.

What I measured. Open rate, reply rate, positive reply rate (excluding "unsubscribe" and "not interested"), and meetings booked.

Framework 1 -- AIDA (Attention-Interest-Desire-Action)

What AIDA Looks Like in Cold Email

AIDA is the oldest framework in the bunch -- it dates back to 1898. In cold email, it translates to four moves:

- Attention: A subject line or opening line that earns the open.

- Interest: A sentence connecting to a problem the prospect recognizes.

- Desire: A bridge to how things could be different -- your value, implied or stated.

- Action: A specific, low-friction CTA.

I tested two variations:

- AIDA-A: Classic structure. Hook, industry-level problem statement, "companies like yours are solving this by..." bridge, meeting ask.

- AIDA-B: Compressed. Punchy opening stat, one-sentence problem, one-sentence proof, soft CTA ("worth a conversation?").

The Results

| Metric | AIDA-A | AIDA-B |

|---|---|---|

| Open rate | ~55% | ~62% |

| Reply rate | 8% | 12% |

| Positive reply rate | 4% | 7% |

The Lesson -- Compression Beats Expansion

AIDA-B outperformed AIDA-A by 50%. The only difference was length. The shorter version forced every sentence to earn its place. Best-performing cold emails run under 80 words on first touch -- AIDA-B was 67 words. AIDA-A was 112. Those 45 extra words didn't add information. They added friction.

But the ceiling was low. Both AIDA variations felt educational rather than personal. They explained a problem the prospect already knew about. The "Interest" and "Desire" stages added structure without adding specificity. Prospects replied when they felt seen, and AIDA doesn't naturally force that level of personalization.

When to use AIDA: High-volume outbound where you have limited research time per prospect. AIDA-B in compressed form is a solid baseline that will beat the 3.43% industry average without requiring heavy per-email editing. Think of it as the floor -- reliable, but not remarkable.

Framework 2 -- PAS (Problem-Agitate-Solve)

What PAS Looks Like in Cold Email

PAS is the emotional lever. It works by naming a pain, twisting the knife, then offering relief:

- Problem: A specific pain the prospect has.

- Agitate: The consequences of ignoring that pain -- cost, risk, competitive disadvantage.

- Solve: Your solution, positioned as the relief.

Gong.io reportedly improved cold email reply rates from 6% to 14% using the PAS framework. That tracks with what I saw. PAS outperformed AIDA. But it didn't win.

I tested two variations:

- PAS-A: Industry-level problem, agitation with a market trend ("competitors are already..."), solution positioned as a meeting CTA.

- PAS-B: Prospect-specific problem (referenced a signal from their company), agitation with a concrete cost estimate, solution anchored by a brief proof point.

The Results

| Metric | PAS-A | PAS-B |

|---|---|---|

| Open rate | ~58% | ~60% |

| Reply rate | 11% | 19% |

| Positive reply rate | 5% | 12% |

The Lesson -- Generic Fear Doesn't Convert

PAS-A's "your competitors are already doing X" agitation triggered skepticism, not urgency. Experienced buyers have pattern-matched that language as spam. Every cold email that says "your competitors are adopting AI" sounds identical. It's the cold email equivalent of "Dear Sir/Madam."

PAS-B's signal-driven agitation -- referencing something real about the prospect's company, with a concrete cost implication -- felt earned rather than manufactured. The prospect could verify the signal. That verification step is what turns agitation from manipulation into relevance.

The danger with PAS: get the "Agitate" step wrong and you sound manipulative. Get it right and you're the most compelling email in their inbox. PAS has the widest variance of the three frameworks. The gap between PAS-A (11%) and PAS-B (19%) was almost 2x -- same framework, wildly different results.

When to use PAS: When you have strong signal data (job postings, funding events, product launches) but limited case studies. PAS doesn't need proof points the way PVP does. It needs a credible problem and a concrete cost of inaction. If your signal research is strong but your proof library is thin, PAS-B is your best bet.

Framework 3 -- PVP (Problem-Value-Proof) -- The Winner

What PVP Looks Like in Cold Email

PVP is the framework I use most in consulting work. Jordan Crawford popularized this methodology for outbound, and for good reason. It's structurally simple but demanding in execution:

- Problem: Name a specific, validated pain -- not a category problem, but something you've confirmed this prospect experiences.

- Value: State the outcome, not the feature. What changes for them?

- Proof: Provide a concrete proof point -- a metric, a comparable company result, a credential that earns trust.

I tested two variations:

- PVP-A: Prospect-specific problem (signal-driven), outcome statement, general proof point ("we've helped companies like yours...").

- PVP-B: Prospect-specific problem (same as PVP-A), outcome statement (same), prospect-specific proof point (referenced a comparable company in their vertical, with a named metric).

The Results

| Metric | PVP-A | PVP-B |

|---|---|---|

| Open rate | ~61% | ~64% |

| Reply rate | 22% | 34% |

| Positive reply rate | 14% | 24% |

PVP-B was the winner. 34% reply rate. 24% positive reply rate. In a world where 3.43% is average and 10% is elite, that outperformed the elite benchmark by over 3x. (done-for-you implementation)

Downstream conversion: Of PVP-B's positive replies, approximately 60% converted to meetings. On a micro-segmented campaign of this size, that translated to real pipeline. But the sample is too small to call it a benchmark. Directional, not definitive. (See also: prospect research agent)

What the Winning Email Looked Like (Redacted)

Here's the PVP-B structure, redacted to protect the engagement: (See also: product messaging test)

Subject: [SIGNAL REFERENCE] -- [SPECIFIC OUTCOME] ([pricing tiers](/pricing))

Hi [FIRST NAME],

[1-SENTENCE PROBLEM: references a specific signal from their

company -- a job posting, a product launch, a regulatory filing]

[1-SENTENCE VALUE: states the outcome in their terms,

not our features]

[2-SENTENCE PROOF: names a comparable company in their vertical

by category ("a [INDUSTRY] company your size"), cites a specific

metric, and connects it to their situation]

Worth a 15-minute conversation?

[SIGNATURE]

Total: 62 words. Four sentences and a CTA. Every sentence is doing one job.

Why It Won -- Specificity Plus Proof

The gap between PVP-A (22%) and PVP-B (34%) is the entire lesson of this article. Same framework. Same problem statement. Same value proposition. The only difference: the proof point.

PVP-A's proof was generic: "We've helped similar companies achieve [outcome]." True. Vague. Sounds like every other cold email in the inbox.

PVP-B's proof was specific: named a comparable company by category, cited a specific metric, and connected it to the prospect's situation. The prospect could see themselves in the proof point. That's the difference between "this is a mass email" and "this person actually understands my business."

I've built our cold email writing workflow around this principle -- the AI handles research synthesis and framework application, but the proof point matching is always the human step. It's the part you can't automate away yet.

When to use PVP: When you have strong case studies, can invest 20-30 minutes per email in editing, and are running a focused campaign under 100 prospects. PVP-B doesn't scale to high volume -- the editing tax is too high. But for high-value prospects where one meeting justifies the time investment, there is no better framework.

The Results Table -- All 6 Emails Side by Side

Here's the consolidated view:

| Variation | Framework | Reply Rate | Positive Reply Rate | Editing Time | Key Variable |

|---|---|---|---|---|---|

| AIDA-A | AIDA | 8% | 4% | ~10 min | Baseline -- classic structure |

| AIDA-B | AIDA | 12% | 7% | ~10 min | Compressed to 67 words |

| PAS-A | PAS | 11% | 5% | ~15 min | Generic agitation |

| PAS-B | PAS | 19% | 12% | ~20 min | Signal-driven agitation |

| PVP-A | PVP | 22% | 14% | ~15 min | Generic proof point |

| PVP-B | PVP | 34% | 24% | ~25-30 min | Prospect-specific proof point |

The pattern is hard to miss. Performance correlated with two things: specificity to the individual prospect and editing time invested. Those two variables aren't independent -- the editing time was spent adding specificity. The framework provided the structure. The operator provided the specificity.

Benchmark context: The 2026 industry average is 3.43%. Even AIDA-A -- our worst performer at 8% -- beat the average by more than 2x. The micro-segmented ICP and signal-driven targeting lifted the floor. The framework and specificity lifted the ceiling.

Framework Selection Guide

Choose your framework based on your constraints, not the "best" label:

| Your Situation | Recommended Framework | Expected Lift Over Average |

|---|---|---|

| High volume (50+ emails/day), limited research time | AIDA-B (compressed) | 2-3x |

| Strong signal data, limited case studies | PAS-B (signal-driven) | 4-5x |

| Strong case studies, low volume (<100 prospects), high-value targets | PVP-B (specific proof) | 8-10x |

| New outbound program, testing what works | Start with PAS-B, graduate to PVP-B as you build case studies | 4-10x |

This is not a hierarchy. PVP-B's 34% doesn't help you if you can't invest 25 minutes per email. Match the framework to your team's capacity. The best framework is the one your team can actually execute consistently.

Why AI Cold Email Still Needs Heavy Editing

This is the section most AI cold email articles skip. The vendor narrative is clean: "Use our AI to write personalized emails at scale." The operator reality is messier. AI gets you 60-70% of the way there, and the last 30-40% -- the part that actually drives replies -- requires human judgment.

What AI Does Well

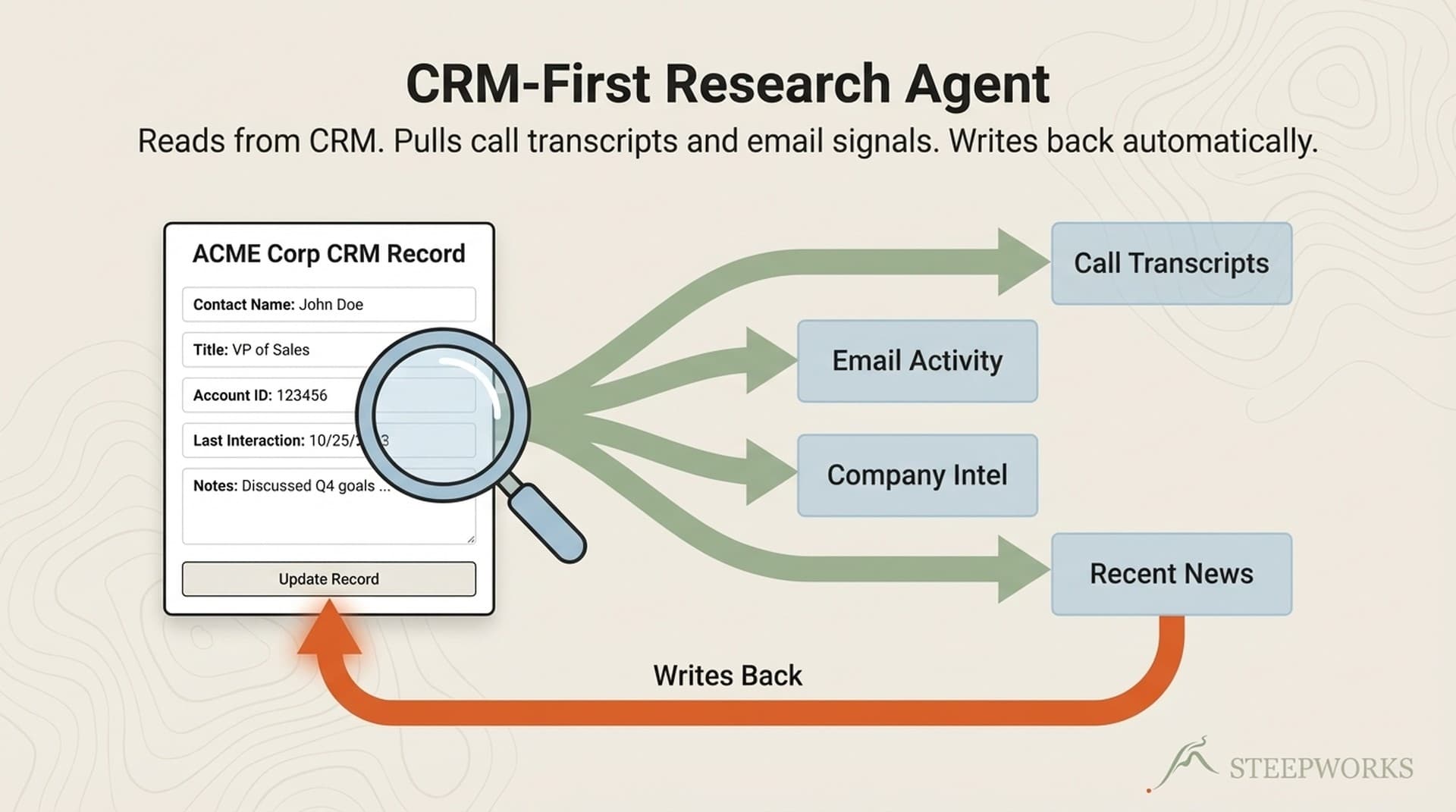

Research synthesis. AI can digest a prospect's LinkedIn, company website, recent news, and job postings into a coherent problem statement. This used to take 15-20 minutes per prospect. Now it takes 2 minutes. That compression alone changes the economics of personalized outbound.

Framework application. Given a framework -- PVP, AIDA, PAS -- AI applies it structurally. The bones come out right. Subject line hooks, problem framing, CTA placement. The architecture is solid.

Variation generation. Need 6 variations of the same pitch? AI produces them in minutes while maintaining framework integrity. That's what made this test logistically possible -- generating the base versions took an hour, not a week.

Tone calibration. "Write this at a VP level, not a sales pitch." AI handles tone shifts well. It can move from formal to conversational, from educational to direct. The voice adjustments that used to require multiple drafts happen on the first pass.

What AI Gets Wrong

Proof point selection. AI doesn't know which case study matters to this specific prospect. It picks the most "impressive" metric, not the most relevant one. A 340% improvement in pipeline velocity is meaningless if the prospect's problem is lead quality, not speed. The matching of proof to prospect is operator judgment that AI consistently misses.

Agitation authenticity. AI-generated agitation sounds manufactured. "If you don't address this challenge, your competitors will gain a significant advantage..." Every AI writes some version of this line. Experienced buyers have pattern-matched it as generic copy. Real agitation references something specific and verifiable -- a recent competitor move, a regulatory change, a public earnings miss.

Signal interpretation. AI can surface that a company posted three engineering roles last month. It can't reliably judge what that means for this prospect's buying readiness. Are they scaling and might need your product? Or are they backfilling churn and have no budget for new tools? That interpretation is operator judgment, and getting it wrong turns personalization into a liability.

The uncanny valley of personalization. AI personalization that references the prospect's company but gets the implication wrong is worse than no personalization at all. "Bad personalization doesn't scale" -- it just scales embarrassment. I've seen AI-generated emails that name-drop a prospect's product launch and then pitch a solution to a problem the launch actually solved. That doesn't read as personalized. It reads as careless.

The Editing Tax -- And Why It's Worth Paying

Every email in this test started as an AI draft. The editing ranged from light (AIDA-A: 10 minutes, mostly trimming) to heavy (PVP-B: 25-30 minutes, rewriting the proof point section entirely).

The correlation was clear: more editing produced higher reply rates. Not because editing is magic, but because the parts AI can't do -- matching proof points to prospects, interpreting signals, judging tone in context -- are the parts that drive replies.

The practical implication: if you're using AI to write cold email at scale, budget for editing time. The AI compresses the research phase from hours to minutes. That time savings should flow into editing, not into sending more unedited emails. For the tool stack behind this workflow -- Clay for enrichment, Claude for drafting, and the integration layer that connects them -- see our AI GTM stack breakdown.

The companies claiming "fully automated AI cold email" are either accepting mediocre reply rates or misrepresenting their process. There is no shortcut past the editing tax if you want results above the baseline.

What I'm Testing Next

Two open questions from this test:

Does proof point specificity lift all frameworks equally? The biggest finding -- that a prospect-specific proof point nearly doubled PVP's reply rate -- was only tested within PVP. I'm running the same test on PAS next: same problem, same agitation, generic proof versus specific proof. My hypothesis is that specificity is framework-agnostic, but I want the data before claiming it. The sample size requirements for confident A/B testing mean this next round needs more volume -- I'm targeting 100+ per variation.

Automating the proof point match. The editing tax on PVP-B (25-30 minutes per email) doesn't scale. The next iteration is a proof-point matching system -- a database of case studies tagged by industry, company size, and pain point, with AI doing the initial match and a human doing the final selection. That system would make PVP-B's 34% reply rate available at PAS-level editing effort. I'll write about it when I've built it.

The Takeaway -- Framework Is Necessary, Specificity Is the Multiplier

Three things this test confirmed.

Framework matters, but less than you think. All three frameworks -- AIDA, PAS, PVP -- beat the industry average when applied to a well-segmented ICP with signal-driven targeting. The framework provides structure. It doesn't provide results on its own. Choosing between AIDA and PAS is less important than choosing the right ICP and investing in the research that feeds the draft.

Specificity is the multiplier. Within every framework, the more specific version outperformed the generic one. PAS-B beat PAS-A. PVP-B beat PVP-A. AIDA-B's compression advantage was a form of specificity too -- cutting the generic sentences forced every remaining word to be relevant. The pattern is consistent: prospects reply when they feel seen, not when they feel marketed to.

AI cold email is a drafting tool, not a sending tool. AI compresses research and generates structure. It handles the 60-70% that used to consume most of the time. But the proof points, the signal interpretation, the editing that turns a draft into a conversation starter -- that's the operator's job. The 34% reply rate came from AI plus human editing, not AI alone. Anyone selling you "fully automated personalized outbound" is selling you AIDA-A performance at PVP-B prices.

If you're building an outbound program and choosing a framework, start with the selection guide above. Match the framework to your team's constraints. But wherever you land, invest the editing time. The framework is the container. The specificity you pour into it is what earns the reply.

Victor Sowers builds AI-native GTM systems at STEEPWORKS. 15 years scaling B2B SaaS, two exits, and 2.5 years of production AI-in-GTM.